SPEED task details

Motivation and task details of the SPEED project

Almost every step of automatic speech recognition (ASR) and text-to-speech (TTS) training and testing can benefit from data parallelism, that is, from splitting a computing task among many execution nodes of a computer cluster. Huge amounts of data quickly make ASR development extremely time-consuming in several tasks: feature extraction; initialization and forward-backward training of the acoustic models (segmental K-means and the Expectation-Maximization algorithm); re-alignment of the speech data and alignment for clustering; tree-based clustering; and estimation of linear transforms for adaptation of the acoustic models. Data parallelism can shorten this development and applies at several levels: per data subset, and per hidden Markov model (HMM) state, stream, speaker, or even sentence.

The training process has many input parameters, e.g. the type of features, the number of forward-backward iterations, where to re-align the speech data, when to apply tree clustering, and which type of linear transform to choose. The appropriate set of input parameters depends on the available speech data and on the domain (the type of data, such as broadband or telephone speech). Some steps can also be language dependent. Finally, validation and evaluation criteria add further variable input parameters, so the optimization process needs even more computing power.
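Per-subset data parallelism works for EM-style training because the E-step only produces sums of sufficient statistics. These sums can be computed on separate nodes and added together before the M-step. The sketch below shows this accumulate-and-merge pattern for a one-dimensional Gaussian mixture rather than a full HMM. The function names and the list-based chunking are assumptions for illustration. The chunks are processed in a plain loop here, whereas a real setup would submit each one as a separate cluster job.

```python
import math
from typing import List, Sequence, Tuple

Component = Tuple[float, float, float]  # (weight, mean, variance)
Stats = List[List[float]]               # per component: [occupancy, sum x, sum x^2]


def gaussian_pdf(x: float, mean: float, var: float) -> float:
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def accumulate(chunk: Sequence[float], model: List[Component]) -> Stats:
    """E-step on one data subset only; it needs nothing from other subsets."""
    stats = [[0.0, 0.0, 0.0] for _ in model]
    for x in chunk:
        likes = [w * gaussian_pdf(x, m, v) for w, m, v in model]
        total = sum(likes)
        for k, like in enumerate(likes):
            gamma = like / total  # posterior probability of component k for x
            stats[k][0] += gamma
            stats[k][1] += gamma * x
            stats[k][2] += gamma * x * x
    return stats


def merge(partials: List[Stats]) -> Stats:
    """Reduce step: sufficient statistics from different subsets simply add up."""
    merged = [[0.0, 0.0, 0.0] for _ in partials[0]]
    for stats in partials:
        for k, row in enumerate(stats):
            for i, value in enumerate(row):
                merged[k][i] += value
    return merged


def maximize(stats: Stats, var_floor: float = 1e-6) -> List[Component]:
    """M-step: re-estimate weights, means and variances from the merged totals."""
    total = sum(occ for occ, _, _ in stats)
    model = []
    for occ, sx, sxx in stats:
        mean = sx / occ
        var = max(sxx / occ - mean * mean, var_floor)  # floor avoids collapse
        model.append((occ / total, mean, var))
    return model


def em_iteration(data: Sequence[float], model: List[Component], n_jobs: int) -> List[Component]:
    size = math.ceil(len(data) / n_jobs)
    chunks = [data[i:i + size] for i in range(0, len(data), size)]
    # Each accumulate() call is independent; on a cluster each would be one job.
    partials = [accumulate(chunk, model) for chunk in chunks]
    return maximize(merge(partials))
```

Because the merged statistics are the same however the data is split, `em_iteration(data, model, 1)` and `em_iteration(data, model, 4)` give the same model up to floating-point rounding. Forward-backward training of HMMs follows the same pattern, with state occupancies and occupancy-weighted observation sums as the accumulators.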

Diagnosis of ASR and TTS technologies is the process of locating the sources of the errors the systems make. It is often computationally intractable for huge test sets, because very detailed information (such as fine-grained word lattices and the time evolution of internal system parameters) has to be dumped during the recognition or synthesis process. Including diagnosis in the training process, and then automatically optimizing the training based on partial diagnosis results, has the potential to improve both the effectiveness and the quality of ASR and TTS systems. For example, diagnosing an outlier speaker (a speaker with atypical or badly transcribed speech) automatically and early, and then excluding that speaker from training, would improve the final system output.
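One simple way to flag an outlier speaker automatically is to score each training speaker with the current models and look for atypically poor fits. The sketch below assumes that the per-speaker score is an average per-frame log-likelihood; computing that score is not shown. It then applies a modified z-score based on the median and the median absolute deviation (MAD). The function name, the 3.5 threshold, and the choice of criterion are all assumptions made for illustration, not the project's method.

```python
import statistics
from typing import Dict, List


def find_outlier_speakers(scores: Dict[str, float], threshold: float = 3.5) -> List[str]:
    """Return speakers whose score lies far below the rest (hypothetical criterion).

    scores: assumed average per-frame log-likelihood per speaker (higher = better fit).
    """
    values = list(scores.values())
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0.0:
        return []  # scores too uniform to single anyone out
    # Modified z-score; only unusually *poor* fits (large negative z) are flagged.
    return sorted(
        spk for spk, s in scores.items()
        if 0.6745 * (s - med) / mad < -threshold
    )
```

For hypothetical scores such as `{"spk01": -62.1, "spk02": -61.8, "spk03": -63.0, "spk04": -62.5, "spk05": -80.4}`, the function returns `["spk05"]`. That speaker could then be dropped from the next training pass. Median-based statistics are used so that the outlier itself does not inflate the spread it is measured against.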

The aim of the Virtual Team Project is to optimize the training and testing processes of speech technologies. It does this by finding regions (clusters) of the input-parameter vector space that increase system performance on the different training and testing tasks. A multi-language virtual team could test the impact of language on the optimization processes. A computer cluster can help identify the impact of run-time diagnosis on the optimization process, and can also facilitate the search for subsets of input parameters that improve diagnosis performance.
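Every point of the input-parameter space can be trained and tested independently, so the search parallelizes naturally across cluster nodes. The sketch below enumerates a small grid of training configurations and ranks them by a supplied evaluation function. The parameter names loosely follow the training parameters listed earlier. The values, the `evaluate` callback, and the function names are hypothetical. In practice, `evaluate` would stand in for a full train-and-test run that returns an error rate.

```python
import itertools
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical search space; the values are illustrative only.
SEARCH_SPACE: Dict[str, List[Any]] = {
    "features": ["MFCC", "PLP"],
    "fb_iterations": [2, 4, 8],
    "realign": [False, True],
    "transform": ["MLLR", "CMLLR"],
}


def expand_grid(space: Dict[str, List[Any]]) -> List[Dict[str, Any]]:
    """Cartesian product of all parameter values, one dict per configuration."""
    names = list(space)
    return [dict(zip(names, values))
            for values in itertools.product(*(space[name] for name in names))]


def rank_configs(
    configs: List[Dict[str, Any]],
    evaluate: Callable[[Dict[str, Any]], float],
    top_k: int = 3,
) -> List[Tuple[float, Dict[str, Any]]]:
    """Score each configuration (lower is better) and keep the best top_k."""
    # Every evaluate() call is independent, so each could be a separate cluster job.
    scored = [(evaluate(cfg), cfg) for cfg in configs]
    scored.sort(key=lambda pair: pair[0])
    return scored[:top_k]
```

This hypothetical grid yields 2 × 3 × 2 × 2 = 24 configurations, each of which could be one cluster job. The top-ranked entries indicate the region of the parameter space worth refining with a finer search.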

The holistic optimization

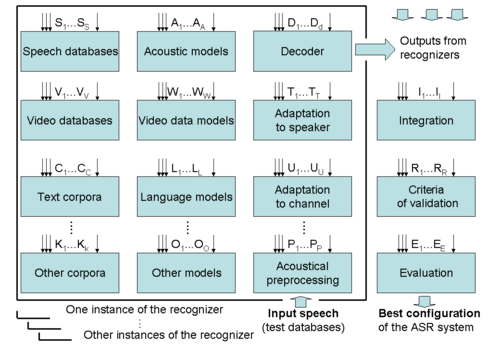

An ASR system consists of many modules. The architectural features of each module, together with the values of its many control settings, can be treated as components of a “settings-vector”, i.e. the input vector of that module. Ideally, the GRID should enable joint optimization of all the input-vector values of the whole system, yielding a globally optimized setting of the ASR system for a given purpose.
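The settings-vector of the whole system can be pictured as the concatenation of every module's settings. The sketch below uses hypothetical module names and option lists. It flattens per-module settings into one namespaced vector layout. It then compares the size of the joint search space with the number of runs needed to tune each module separately while the others stay fixed.

```python
import math
from typing import Dict, List

# Hypothetical modules and option lists; a real ASR system has many more.
Modules = Dict[str, Dict[str, List[object]]]
MODULES: Modules = {
    "frontend": {"features": ["MFCC", "PLP"], "n_ceps": [12, 13]},
    "acoustic_model": {"mixtures": [8, 16, 32], "fb_iterations": [2, 4]},
    "adaptation": {"transform": ["none", "MLLR", "CMLLR"]},
}


def settings_vector_names(modules: Modules) -> List[str]:
    """Flatten all module settings into one namespaced settings-vector layout."""
    return [f"{module}.{param}" for module, params in modules.items() for param in params]


def joint_space_size(modules: Modules) -> int:
    """Runs needed to try every combination of every module's settings."""
    return math.prod(len(opts) for params in modules.values() for opts in params.values())


def per_module_size(modules: Modules) -> int:
    """Runs needed when each module is tuned alone with the others held fixed."""
    return sum(math.prod(len(opts) for opts in params.values()) for params in modules.values())
```

For these hypothetical options, joint search needs 2 × 2 × 3 × 2 × 3 = 72 runs, against 4 + 6 + 3 = 13 for module-by-module tuning. The gap grows multiplicatively with every added setting, which is why a globally optimized setting calls for GRID-scale computing. Module-by-module tuning is cheaper, but it can miss interactions between modules.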