MAN10

| Title | Cloud Resource Provider Installation Manual |

| Document link | https://wiki.egi.eu/wiki/MAN10 |

| Last modified | 11 March 2016 |

| Policy Group Acronym | OMB |

| Policy Group Name | Operations Management Board |

| Contact Group | operations-support@mailman.egi.eu |

| Document Status | DRAFT |

| Approved Date | |

| Procedure Statement | This manual provides information on how to set up a Cloud Resource Provider in the EGI infrastructure. |

| Owner | Owner of procedure |

Common prerequisites

General minimal requirements are:

- Very little hardware is required to join. Hardware requirements depend on:

  - the cloud stack you use

  - the amount of resources you want to make available

  - the number of users/use cases you want to support

- Servers need to authenticate each other in the EGI Federated Cloud context. This is done with X.509 certificates, so a Resource Provider should be able to provide server certificates for some services.

- User and research communities are called Virtual Organisations (VOs). Support for at least 3 VOs is needed to join as a Resource Provider:

  - ops and dteam, used for operational purposes as per the RP OLA

  - fedcloud.egi.eu, which provides resources for application prototyping and validation

- The operating systems supported by the EGI Federated Cloud Management Framework are:

  - Scientific Linux 6, CentOS 7 (and RHEL-compatible distributions in general)

  - Ubuntu (and Debian-based distributions in general)

Integrating OpenStack

Integration with FedCloud requires a working OpenStack installation as a prerequisite (see http://docs.openstack.org/ for details). Ready-to-use packages exist for most distributions (for example, RDO for Red Hat based distributions).

OpenStack integration with FedCloud is known to work with the following versions of OpenStack:

- Havana (EOL by OpenStack, should not be used in production)

- Icehouse (EOL by OpenStack)

- Juno (EOL by OpenStack)

- Kilo (Security-supported, EOL: 2016-05-02)

See http://releases.openstack.org/ for more details on the OpenStack releases.

Which components must be installed and configured depends on the services the RP wants to provide.

- Keystone must always be available

- If providing VM Management features (OCCI access or OpenStack access), then Nova, Cinder and Glance must be available. Neutron is also needed, although legacy installations can use nova-network instead (see the documentation on how to configure per-tenant routers with private networks).

- If providing CDMI access (Object storage), then Swift is needed

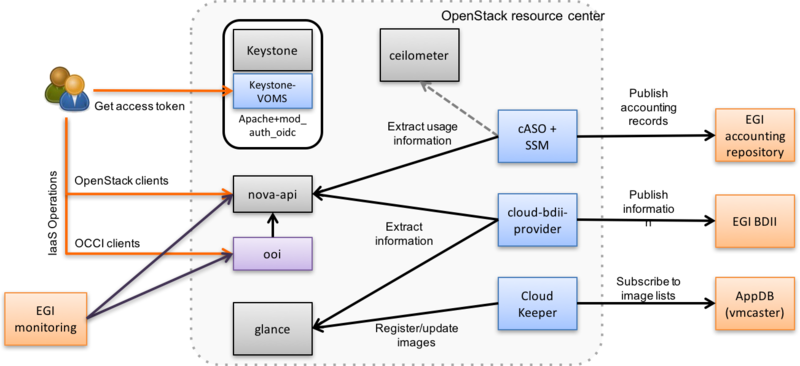

As the schema above shows, the integration is performed by installing some EGI extensions on top of the OpenStack components.

- The Keystone-VOMS Authorization plugin allows users with a valid VOMS proxy to access the OpenStack deployment

- OpenStack OCCI Interface (ooi) translates between OpenStack API and OCCI

- cASO collects accounting data from OpenStack

- SSM sends the records extracted by cASO to the central accounting database on the EGI Accounting service (APEL)

- BDII cloud provider registers the RP configuration and description through the EGI Information System to facilitate service discovery

- vmcatcher checks the EGI App DB for new or updated images that can be provided by the RP to the user communities (VO) supported

- glancepush pushes updated subscribed images from vmcatcher to Glance, using the OpenStack Python API

- The OpenStack handler for vmcatcher, used in conjunction with vmcatcher, creates all the files needed for the correct execution of glancepush. glancepush then uses these files to register the downloaded images in OpenStack.

EGI User Management/AAI (Keystone-VOMS)

Every FedCloud site must support authentication of users with X.509 certificates with VOMS extensions. The Keystone-VOMS extension enables this kind of authentication on Keystone.

Documentation on the installation is available on https://keystone-voms.readthedocs.org/

Notes:

- Using the keystone-voms plugin enforces the use of https for your Keystone service, so you will need to update your URLs in the Keystone catalog and in the configuration of your services:

  - You will probably need to add your CA to your system's CA bundle to avoid certificate validation issues: `/etc/ssl/certs/ca-certificates.crt` from the `ca-certificates` package on Debian/Ubuntu systems or `/etc/pki/tls/certs/ca-bundle.crt` from the `ca-certificates` package on RH and derived systems. Check the package documentation to add a new CA to those bundles.

  - Replace http with https in `auth_[protocol|uri|url]` and `auth_[host|uri|url]` in the nova, cinder, glance and neutron config files (`/etc/nova/nova.conf`, `/etc/nova/api-paste.ini`, `/etc/neutron/neutron.conf`, `/etc/neutron/api-paste.ini`, `/etc/neutron/metadata_agent.ini`, `/etc/cinder/cinder.conf`, `/etc/cinder/api-paste.ini`, `/etc/glance/glance-api.conf`, `/etc/glance/glance-registry.conf`, `/etc/glance/glance-cache.conf`) and in any other service that needs to check Keystone tokens.

  - You can update the URLs of the services directly in the database:

mysql> use keystone;

mysql> update endpoint set url="https://<keystone-host>:5000/v2.0" where url="http://<keystone-host>:5000/v2.0";

mysql> update endpoint set url="https://<keystone-host>:35357/v2.0" where url="http://<keystone-host>:35357/v2.0";

- Support for EGI VOs: in the VOMS configuration, you should configure the fedcloud.egi.eu, dteam and ops VOs.

- VOMS-Keystone configuration: most sites should enable the `autocreate_users` option in the `[voms]` section of the Keystone-VOMS configuration. With this option, new users are created automatically in your local Keystone the first time they log in to your site.
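The http-to-https replacement described in the notes above can be scripted. The following is a minimal sketch under stated assumptions, not part of the Keystone-VOMS documentation: the function name `keystone_urls_to_https` is hypothetical, and it only rewrites URLs of the form `http://<keystone-host>:...` or `http://<keystone-host>/...`. Options that hold only a protocol name (such as `auth_protocol`) still need to be changed by hand.

```shell
# Sketch only: rewrite http:// URLs that point at the Keystone host to https://.
# Usage: keystone_urls_to_https <config-file> <keystone-host>
# The untouched original is kept next to the file as <config-file>.bak.
keystone_urls_to_https() {
    local file="$1" host="$2" host_re
    [ -f "$file" ] || { echo "no such file: $file" >&2; return 1; }
    # Escape dots so that sed matches the host name literally.
    host_re=$(printf '%s' "$host" | sed 's/\./\\./g')
    cp -p "$file" "$file.bak" || return 1
    # Read from the backup and write the rewritten content to the original path.
    sed "s|http://${host_re}\([:/]\)|https://${host_re}\1|g" "$file.bak" > "$file"
}
```

It could be called once per file, for example `keystone_urls_to_https /etc/nova/nova.conf <keystone-host>`. Review the result against the `.bak` copy before restarting any service.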

EGI Virtual Machine Management Interface (ooi/OCCI)

OCCI is the EGI-approved access method for computing resources that VM management cloud services must expose. ooi is the recommended software to provide this capability.

Installation and configuration of ooi is available at https://github.com/openstack/ooi

EGI Accounting (cASO/SSM)

Every cloud RP should publish utilization data to the EGI accounting database. You will need to install cASO, a pluggable extractor of Cloud Accounting Usage Records from OpenStack.

Documentation on how to install and configure cASO is available at https://caso.readthedocs.org/en/latest/

In order to send the records to the accounting database, you will also need to configure SSM, whose documentation can be found at https://github.com/apel/ssm

EGI Information System (BDII)

Sites must publish information to the EGI Information System, which is based on BDII. There is a common BDII information provider for all cloud management frameworks.

Information on installation and configuration is available at https://github.com/EGI-FCTF/cloud-bdii-provider and in the Fedclouds BDII instructions.

EGI Image Management (vmcatcher, glancepush)

Sites in FedCloud offering VM management capability must give access to VO-endorsed VM images. This functionality is provided by vmcatcher (which can subscribe to the image lists available in AppDB) and a set of tools that push the subscribed images into the Glance catalog. To subscribe to VO-wide image lists, you need a valid access token for the AppDB. See the documentation on how to access VO-wide image lists and how to subscribe to a private image list for more information.

Please refer to the vmcatcher documentation for installation.

vmcatcher can be connected to the OpenStack Glance catalog using the python-glancepush tool and the OpenStack Handler for Vmcatcher event handler. To install and configure glancepush and the handler, follow these instructions:

- Install the latest release of glancepush from https://appdb.egi.eu/store/software/python.glancepush

- For Debian-based systems, download the tarball, extract it, and execute python setup.py install from the extracted directory:

[stack@ubuntu]$ wget http://repository.egi.eu/community/software/python.glancepush/0.0.X/releases/generic/0.0.6/python-glancepush-0.0.6.tar.gz

[stack@ubuntu]$ tar -zxvf python-glancepush-0.0.6.tar.gz

[stack@ubuntu]$ python setup.py install

- for RHEL6 you can run:

[stack@rhel]$ yum localinstall http://repository.egi.eu/community/software/python.glancepush/0.0.X/releases/sl/6/x86_64/RPMS/python-glancepush-0.0.6-1.noarch.rpm

- Then, configure the glancepush directories (the log directory /var/log/glancepush must exist before its ownership is changed):

[stack@ubuntu]$ sudo mkdir -p /var/spool/glancepush /etc/glancepush/log /etc/glancepush/transform/ /etc/glancepush/clouds /var/log/glancepush

[stack@ubuntu]$ sudo chown stack:stack -R /var/spool/glancepush /etc/glancepush /var/log/glancepush/

- Copy the file /etc/keystone/voms.json to /etc/glancepush/voms.json. Then create a file in the /etc/glancepush/clouds directory for every VO to which you are subscribed. For example, if you are subscribed to fedcloud, atlas and lhcb, you will need 3 files in the /etc/glancepush/clouds directory, each with the credentials for that VO/tenant, for example:

[general]

# Tenant for this VO. Must match the tenant defined in voms.json file

testing_tenant=egi

# Identity service endpoint (Keystone)

endpoint_url=https://server4-eupt.unizar.es:5000/v2.0

# User Password

password=123456

# User

username=John

# Set this to true if you're NOT using self-signed certificates

is_secure=True

# SSH private key that will be used to perform policy checks (to be done)

ssh_key=Carlos_lxbifi81

# WARNING: Only define the next variable if you're going to need it. Otherwise you may encounter problems

cacert=path_to_your_cert
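Writing one such file per VO by hand is error-prone, so the step can be sketched as a small helper. This is an illustration only: the function name `write_glancepush_cloud` is hypothetical, naming the file after the VO is an assumption, and only the keys shown in the example above are written (`ssh_key` and `cacert` are left out on purpose).

```shell
# Sketch only: write one glancepush cloud definition file for a VO.
# Usage: write_glancepush_cloud <clouds-dir> <vo> <tenant> <endpoint-url> <username> <password>
write_glancepush_cloud() {
    local dir="$1" vo="$2" tenant="$3" endpoint="$4" user="$5" pass="$6" target
    [ -d "$dir" ] || { echo "no such directory: $dir" >&2; return 1; }
    # Assumption: the file is named after the VO.
    target="$dir/$vo"
    # Create the file with owner-only permissions before the password is written.
    ( umask 077 && : > "$target" ) || return 1
    cat > "$target" <<EOF
[general]
# Tenant for this VO. Must match the tenant defined in voms.json
testing_tenant=$tenant
# Identity service endpoint (Keystone)
endpoint_url=$endpoint
# User
username=$user
# User password
password=$pass
# Set this to true if you're NOT using self-signed certificates
is_secure=True
EOF
}
```

For example, `write_glancepush_cloud /etc/glancepush/clouds fedcloud.egi.eu egi https://<keystone-host>:5000/v2.0 <user> <password>` would produce a file equivalent to the one above, minus the optional keys.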

- Install the OpenStack handler for vmcatcher. For Debian-based systems, download the tarball, extract it and execute python setup.py install from the extracted directory:

[stack@ubuntu]$ wget http://repository.egi.eu/community/software/openstack.handler.for.vmcatcher/0.0.X/releases/generic/0.0.7/gpvcmupdate-0.0.7.tar.gz

[stack@ubuntu]$ tar -zxvf gpvcmupdate-0.0.7.tar.gz

[stack@ubuntu]$ python setup.py install

For RHEL6 you can run:

[stack@rhel]$ yum localinstall http://repository.egi.eu/community/software/openstack.handler.for.vmcatcher/0.0.X/releases/sl/6/x86_64/RPMS/gpvcmupdate-0.0.7-1.noarch.rpm

- Create the vmcatcher folders for OpenStack

[stack@ubuntu]$ mkdir -p /opt/stack/vmcatcher/cache /opt/stack/vmcatcher/cache/partial /opt/stack/vmcatcher/cache/expired

- Check that vmcatcher is running properly by listing and subscribing to an image list

[stack@ubuntu]$ export VMCATCHER_RDBMS="sqlite:////opt/stack/vmcatcher/vmcatcher.db"

[stack@ubuntu]$ vmcatcher_subscribe -l

[stack@ubuntu]$ vmcatcher_subscribe -e -s https://vmcaster.appdb.egi.eu/store/vappliance/tinycorelinux/image.list

[stack@ubuntu]$ vmcatcher_subscribe -l

8ddbd4f6-fb95-4917-b105-c89b5df99dda True None https://vmcaster.appdb.egi.eu/store/vappliance/tinycorelinux/image.list
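The subscription check above can also be done non-interactively. The sketch below assumes that `vmcatcher_subscribe -l` prints one subscription per line with the image list URL as the last column, as in the sample output shown in this step. The function name `is_subscribed` is hypothetical.

```shell
# Sketch only: read `vmcatcher_subscribe -l` output on standard input and
# succeed (exit status 0) if the given image list URL is in the last column.
# Usage: vmcatcher_subscribe -l | is_subscribed <image-list-url>
is_subscribed() {
    awk -v url="$1" '$NF == url { found = 1 } END { exit !found }'
}
```

A deployment script could then subscribe only when `is_subscribed` fails for the URL in question.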

- Create a cron wrapper for vmcatcher, named `$HOME/gpvcmupdate/vmcatcher_eventHndl_OS_cron.sh`, using the following code:

#!/bin/bash

#Cron handler for VMCatcher image synchronization script for OpenStack

#Vmcatcher configuration variables

export VMCATCHER_RDBMS="sqlite:////opt/stack/vmcatcher/vmcatcher.db"

export VMCATCHER_CACHE_DIR_CACHE="/opt/stack/vmcatcher/cache"

export VMCATCHER_CACHE_DIR_DOWNLOAD="/opt/stack/vmcatcher/cache/partial"

export VMCATCHER_CACHE_DIR_EXPIRE="/opt/stack/vmcatcher/cache/expired"

export VMCATCHER_CACHE_EVENT="python $HOME/gpvcmupdate/gpvcmupdate.py -D"

#Update vmcatcher image lists

vmcatcher_subscribe -U

#Add all the new images to the cache

for a in `vmcatcher_image -l | awk '{if ($2==2) print $1}'`; do

vmcatcher_image -a -u $a

done

#Update the cache

vmcatcher_cache -v -v

#Run glancepush

/usr/bin/glancepush.py

- Set the newly created file as executable

[stack@ubuntu]$ chmod +x $HOME/gpvcmupdate/vmcatcher_eventHndl_OS_cron.sh

- Test that the vmcatcher handler is working correctly by running

[stack@ubuntu]$ $HOME/gpvcmupdate/vmcatcher_eventHndl_OS_cron.sh

INFO:main:Defaulting actions as 'expire', and 'download'.

DEBUG:Events:event 'ProcessPrefix' executed 'python /opt/stack/gpvcmupdate/gpvcmupdate.py'

DEBUG:Events:stdout=

DEBUG:Events:stderr=Ignoring ProcessPrefix event.

INFO:DownloadDir:Downloading '541b01a8-94bd-4545-83a8-6ea07209b440'.

DEBUG:Events:event 'AvailablePrefix' executed 'python /opt/stack/gpvcmupdate/gpvcmupdate.py'

DEBUG:Events:stdout=AvailablePrefix

DEBUG:Events:stderr=

INFO:CacheMan:moved file 541b01a8-94bd-4545-83a8-6ea07209b440

DEBUG:Events:event 'AvailablePostfix' executed 'python /opt/stack/gpvcmupdate/gpvcmupdate.py'

DEBUG:Events:stdout=AvailablePostfix

Creating Metadata Files

DEBUG:Events:stderr=

DEBUG:Events:event 'ProcessPostfix' executed 'python /opt/stack/gpvcmupdate/gpvcmupdate.py'

DEBUG:Events:stdout=

DEBUG:Events:stderr=Ignoring ProcessPostfix event.

- Add the following line to the stack user crontab:

50 */6 * * * $HOME/gpvcmupdate/vmcatcher_eventHndl_OS_cron.sh >> /var/log/glancepush/vmcatcher.log 2>&1

NOTES:

- It is recommended to execute glancepush and vmcatcher_cache as the stack user or another non-root user.

- Images that expire in vmcatcher are removed from OpenStack.
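The crontab entry above starts the wrapper every six hours. If one synchronization run were to take longer than that, two runs could overlap. One common way to guard against this is a lock directory, sketched below. The function name `run_locked`, the lock path and the exit status 75 are illustrative choices, not part of vmcatcher or glancepush.

```shell
# Sketch only: run a command unless another run still holds the lock directory.
# Usage: run_locked <lock-dir> <command> [args...]
run_locked() {
    local lock="$1" status=0
    shift
    # mkdir is atomic: it fails if the directory already exists.
    if ! mkdir "$lock" 2>/dev/null; then
        echo "another run is still active (lock: $lock), skipping" >&2
        return 75
    fi
    "$@" || status=$?
    # Release the lock and report the exit status of the wrapped command.
    rmdir "$lock"
    return "$status"
}
```

Inside the cron wrapper, the synchronization commands could be grouped into a function and started with `run_locked /var/spool/glancepush/sync.lock <function>`. A run that is killed leaves the lock directory behind, which then has to be removed by hand.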

Post-installation

After the installation of all the needed components, it is recommended to set the following policies on Nova to prevent users from accessing other users' resources:

[root@egi-cloud]# sed -i 's|"admin_or_owner": "is_admin:True or project_id:%(project_id)s",|"admin_or_owner": "is_admin:True or project_id:%(project_id)s",\n "admin_or_user": "is_admin:True or user_id:%(user_id)s",|g' /etc/nova/policy.json

[root@egi-cloud]# sed -i 's|"default": "rule:admin_or_owner",|"default": "rule:admin_or_user",|g' /etc/nova/policy.json

[root@egi-cloud]# sed -i 's|"compute:get_all": "",|"compute:get": "rule:admin_or_owner",\n "compute:get_all": "",|g' /etc/nova/policy.json
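Because the sed expressions above only match when the original lines are formatted exactly as expected, it is worth confirming that the three rules really ended up in the file. The following check is a sketch: the function name `check_nova_policy` is hypothetical, and it looks for the rules as fixed strings with the same spacing the sed commands produce.

```shell
# Sketch only: verify that the three policy rules set above are present.
# Usage: check_nova_policy <policy.json>   (returns 0 when all rules are found)
check_nova_policy() {
    local file="$1" missing=0 rule
    for rule in \
        '"admin_or_user": "is_admin:True or user_id:%(user_id)s"' \
        '"default": "rule:admin_or_user"' \
        '"compute:get": "rule:admin_or_owner"'
    do
        # -F: match the rule as a fixed string, not as a regular expression.
        if ! grep -q -F -- "$rule" "$file"; then
            echo "missing: $rule" >&2
            missing=1
        fi
    done
    return "$missing"
}
```

Running `check_nova_policy /etc/nova/policy.json` prints any rule that is still missing to standard error.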

Registration of services in GOCDB

As mentioned in the main page, RP services must be registered in the EGI Configuration Management Database (GOCDB). If you are creating a new site for your cloud services, please follow the Resource Centre Registration and Certification procedure with the help of EGI Operations and your reference Resource Infrastructure.

If offering OCCI interface, sites should register the following services:

- eu.egi.cloud.vm-management.occi for the OCCI endpoint offered by the site. Please note the special endpoint URL syntax described at GOCDB usage in FedCloud

- eu.egi.cloud.accounting (host should be your OCCI machine)

- eu.egi.cloud.vm-metadata.vmcatcher (also host is your OCCI machine)

- Sites should also declare the following properties using the Site Extension Properties feature:

  - Max number of virtual cores for a VM, with parameter name `cloud_max_cores4VM`

  - Max amount of RAM for a VM, with parameter name `cloud_max_RAM4VM`, using the format value+unit, e.g. "16GB"

  - Max amount of storage that could be mounted in a VM, with parameter name `cloud_max_storage4VM`, using the format value+unit, e.g. "16GB"
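The value+unit format used by `cloud_max_RAM4VM` and `cloud_max_storage4VM` can be checked before the properties are entered in GOCDB. This is a sketch only: the function name `valid_size_property` is hypothetical, and the accepted units (MB, GB, TB) are an assumption, since the text above only gives "16GB" as an example.

```shell
# Sketch only: succeed if the value looks like <positive integer><unit>, e.g. "16GB".
# Assumption: MB, GB and TB are the accepted units.
valid_size_property() {
    printf '%s\n' "$1" | grep -Eq '^[1-9][0-9]*(MB|GB|TB)$'
}
```

For example, `valid_size_property "16GB"` succeeds, while `valid_size_property "16 GB"` fails because of the space.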

If offering a CDMI interface, sites should register:

- eu.egi.cloud.storage-management.cdmi. Note also the endpoint URL syntax described at GOCDB usage in FedCloud

Once the site services are registered in GOCDB and set as monitored they will appear in the EGI service monitoring tools. EGI will take care of the status of the services as reported in the Infrastructure Status page.

Installation Validation

You can check your installation following these steps:

- Check in Cloudmon that your services are listed and are passing the tests. If all the tests are OK, your installation is already in good shape.

- Check that you are publishing cloud information in your site BDII:

ldapsearch -x -h <site bdii host> -p 2170 -b Glue2GroupID=cloud,Glue2DomainID=<your site name>,o=glue

- Check that all the images listed in the AppDB page for the fedcloud.egi.eu VO are listed in your BDII. This sample query will return all the VM IDs registered in your BDII:

ldapsearch -x -h <site bdii host> -p 2170 -b Glue2GroupID=cloud,Glue2DomainID=<your site name>,o=glue objectClass=GLUE2ApplicationEnvironment GLUE2ApplicationEnvironmentRepository

- Try to start one of those images in your cloud. You can do it with nova or OCCI commands; the result should be the same.

- Execute the site certification manual tests against your endpoints.

- Check in the accounting portal that your site is listed and the values reported look consistent with the usage of your site.
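To compare the BDII content with the AppDB page, the output of the second ldapsearch query above can be reduced to a plain list of repository values. The filter below is a sketch: the function name `list_bdii_repositories` is hypothetical, and it assumes plain, unwrapped LDIF lines of the form `GLUE2ApplicationEnvironmentRepository: <value>` (base64-encoded values, written with `::`, are not handled).

```shell
# Sketch only: read LDIF on standard input and print each distinct
# GLUE2ApplicationEnvironmentRepository value on its own line.
list_bdii_repositories() {
    sed -n 's/^GLUE2ApplicationEnvironmentRepository: //p' | sort -u
}
```

The ldapsearch output is piped into the function. Since LDIF output wraps long lines by default, adding `-LLL -o ldif-wrap=no` to the ldapsearch call (options of the OpenLDAP client, if your version provides them) keeps each value on a single line.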

OpenNebula

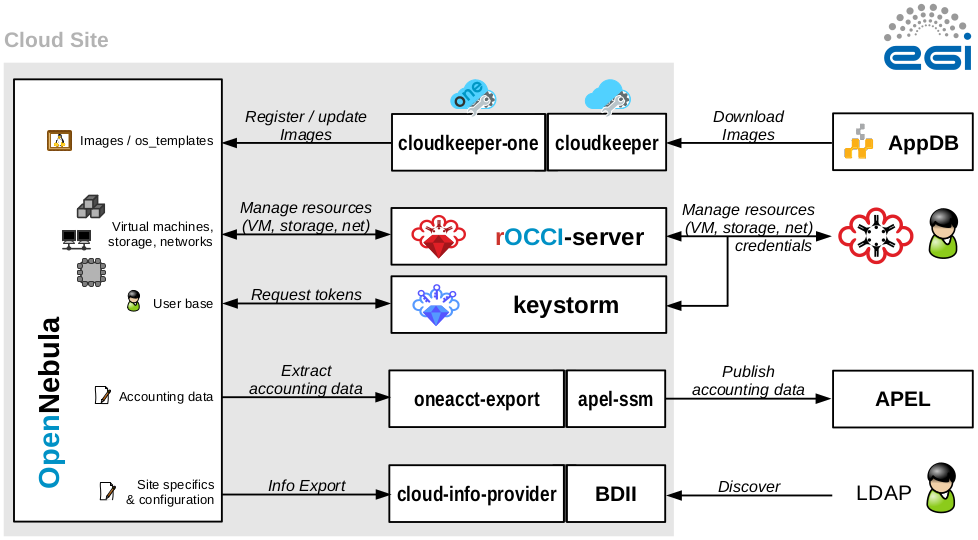

OpenNebula FedCloud Site Architecture

Components

An EGI Cloud Site based on OpenNebula is an ordinary OpenNebula installation with some EGI-specific integration components. There are no additional requirements placed on the internal site architecture.

The following components must be installed alongside OpenNebula:

Note: CDMI storage endpoints are currently not supported for OpenNebula-based sites.

Note 2: OpenNebula Sunstone is not required!

Open Ports

The following ports must be open to allow access to an OpenNebula-based FedCloud site:

| Port | Application | Host | Note |
|---|---|---|---|
| 22/TCP | SSH | OpenNebula Server Node | onetools, Perun scripts |
| 2170/TCP | BDII/LDAP | BDII Node (typically the OpenNebula Server Node) | EGI Service Discovery |
| 11443/TCP | OCCI/HTTPS | rOCCI-server node (typically the OpenNebula Server Node but can be located elsewhere) | OCCI cloud resource management |

Open ports cannot be specified in advance for the OpenNebula hosts that run virtual machines, because the port requirements of those virtual machines cannot be known beforehand.

Service Accounts

This is an overview of service accounts used in an OpenNebula-based FedCloud site. The names are default and can be changed if required.

| Type | Account name | Host | Use |
|---|---|---|---|
| System accounts | oneadmin | OpenNebula server | Default management account in OpenNebula. Also used by the Perun scripts, which access the account with SSH. |
| System accounts | rocci | rOCCI-server host (typically OpenNebula server) | Apache application processes for the rOCCI-server. It is only a service account, no access required. |
| System accounts | apel | OpenNebula server | Service account used to run APEL export scripts. Just a service account, no access required. |
| System accounts | openldap | OpenNebula server | Service account used to run LDAP for BDII. Just a service account, no access required. |
| OpenNebula accounts | rocci | OpenNebula server | Used by the rOCCI-server to perform tasks through the OpenNebula API. |

OpenNebula Installation

Follow the OpenNebula documentation and install OpenNebula with X.509 authentication support enabled.

The following OpenNebula versions are supported:

- OpenNebula v4.4.x (legacy)

- OpenNebula v4.6.x

- OpenNebula v4.8.x

- OpenNebula v4.10.x

- OpenNebula v4.12.x

- OpenNebula v4.14.x
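The list above can be turned into a simple guard for installation scripts. This is only a sketch: the function name `one_version_supported` is hypothetical, and it expects a full three-part version string such as 4.12.1.

```shell
# Sketch only: succeed if the given OpenNebula version belongs to one of
# the release series listed above (4.4.x through 4.14.x).
# Usage: one_version_supported <major.minor.patch>
one_version_supported() {
    case "$1" in
        4.4.*|4.6.*|4.8.*|4.10.*|4.12.*|4.14.*) return 0 ;;
        *) return 1 ;;
    esac
}
```

For example, `one_version_supported 4.12.1` succeeds, while `one_version_supported 4.2.0` fails.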

OpenNebula Integration

Integration Prerequisites

- Working OpenNebula installation with X.509 support enabled. Resource Providers are encouraged to follow the step-by-step configuration guide provided by OpenNebula developers. There is no need to change authentication driver for the oneadmin user or create any user accounts manually at this time.

- Valid IGTF-trusted host certificates for selected hosts.

EGI Virtual Machine Management Interface -- OCCI

See rOCCI-server Installation Guide.

EGI User Management/AAI

rOCCI-server + VOMS

- Configure OpenNebula's x509 auth by modifying the /etc/one/auth/x509_auth.conf file:

# Path to the trusted CA directory. It should contain the trusted CA's for

# the server, each CA certificate should be named CA_hash.0

:ca_dir: "/etc/grid-security/certificates"

For more information, have a look at the official OpenNebula documentation.
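The `CA_hash.0` naming required by the configuration above can be spot-checked with a few lines of shell. The sketch below counts files whose name is eight hexadecimal digits followed by `.0`, which is the form OpenSSL subject-hash file names take. The function name `count_hashed_cas` is hypothetical.

```shell
# Sketch only: print the number of files named <8 hex digits>.0 in a CA directory.
# Usage: count_hashed_cas <ca-dir>
count_hashed_cas() {
    local dir="$1" count=0 f
    for f in "$dir"/*.0; do
        # Skip the unexpanded pattern when nothing matches.
        [ -e "$f" ] || continue
        case "$(basename "$f")" in
            [0-9a-f][0-9a-f][0-9a-f][0-9a-f][0-9a-f][0-9a-f][0-9a-f][0-9a-f].0)
                count=$((count + 1)) ;;
        esac
    done
    echo "$count"
}
```

A result of 0 for `count_hashed_cas /etc/grid-security/certificates` would suggest that the trusted CA certificates are not installed in the expected form.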

- rOCCI-server

Example VHOST configuration file for Apache2 with only VOMS authentication enabled:

<VirtualHost *:11443>

# if you wish to change the default Ruby used to run this app

PassengerRuby /opt/occi-server/embedded/bin/ruby

# enable SSL

SSLEngine on

# for security reasons you may restrict the SSL protocol, but some clients may fail if SSLv2 is not supported

SSLProtocol All -SSLv2 -SSLv3

# this should point to your server host certificate

SSLCertificateFile /etc/grid-security/hostcert.pem

# this should point to your server host key

SSLCertificateKeyFile /etc/grid-security/hostkey.pem

# directory containing the Root CA certificates and their hashes

SSLCACertificatePath /etc/grid-security/certificates

# directory containing CRLs

SSLCARevocationPath /etc/grid-security/certificates

# set to optional, this tells Apache to attempt to verify SSL certificates if provided

# for X.509 access with GridSite/VOMS, however, set to 'require'

#SSLVerifyClient optional

SSLVerifyClient require

# if you have multiple CAs in the file above, you may need to increase the verify depth

SSLVerifyDepth 10

# enable passing of SSL variables to passenger. For GridSite/VOMS, enable also exporting certificate data

SSLOptions +StdEnvVars +ExportCertData

# configure OpenSSL inside rOCCI-server to validate peer certificates (for CMFs)

#SetEnv SSL_CERT_FILE /path/to/ca_bundle.crt

SetEnv SSL_CERT_DIR /etc/grid-security/certificates

# set RackEnv

RackEnv production

LogLevel info

ServerName occi.host.example.org

# important, this needs to point to the public folder of your rOCCI-server

DocumentRoot /opt/occi-server/embedded/app/rOCCI-server/public

<Directory /opt/occi-server/embedded/app/rOCCI-server/public>

## variables (and is needed for gridsite-admin.cgi to work.)

GridSiteEnvs on

## Nice GridSite directory listings (without truncating file names!)

GridSiteIndexes off

## If this is greater than zero, we will accept GSI Proxies for clients

## (full client certificates - eg inside web browsers - are always ok)

GridSiteGSIProxyLimit 4

## This directive allows authorized people to write/delete files

## from non-browser clients - eg with htcp(1)

GridSiteMethods ""

Allow from all

Options -MultiViews

</Directory>

# configuration for Passenger

PassengerUser rocci

PassengerGroup rocci

PassengerMinInstances 3

PassengerFriendlyErrorPages off

# configuration for rOCCI-server

## common

SetEnv ROCCI_SERVER_LOG_DIR /var/log/occi-server

SetEnv ROCCI_SERVER_ETC_DIR /etc/occi-server

SetEnv ROCCI_SERVER_PROTOCOL https

SetEnv ROCCI_SERVER_HOSTNAME occi.host.example.org

SetEnv ROCCI_SERVER_PORT 11443

SetEnv ROCCI_SERVER_AUTHN_STRATEGIES "voms"

SetEnv ROCCI_SERVER_HOOKS oneuser_autocreate

SetEnv ROCCI_SERVER_BACKEND opennebula

SetEnv ROCCI_SERVER_LOG_LEVEL info

SetEnv ROCCI_SERVER_LOG_REQUESTS_IN_DEBUG no

SetEnv ROCCI_SERVER_TMP /tmp/occi_server

SetEnv ROCCI_SERVER_MEMCACHES localhost:11211

## experimental

SetEnv ROCCI_SERVER_ALLOW_EXPERIMENTAL_MIMES no

## authN configuration

SetEnv ROCCI_SERVER_AUTHN_VOMS_ROBOT_SUBPROXY_IDENTITY_ENABLE no

## hooks

#SetEnv ROCCI_SERVER_USER_BLACKLIST_HOOK_USER_BLACKLIST "/path/to/yml/file.yml"

#SetEnv ROCCI_SERVER_USER_BLACKLIST_HOOK_FILTERED_STRATEGIES "voms x509 basic"

SetEnv ROCCI_SERVER_ONEUSER_AUTOCREATE_HOOK_VO_NAMES "dteam ops"

## ONE backend

SetEnv ROCCI_SERVER_ONE_XMLRPC http://localhost:2633/RPC2

SetEnv ROCCI_SERVER_ONE_USER rocci

SetEnv ROCCI_SERVER_ONE_PASSWD yourincrediblylonganddifficulttoguesspassword

</VirtualHost>

It is strongly recommended to set SSLVerifyClient require and SetEnv ROCCI_SERVER_AUTHN_STRATEGIES "voms"!

- Support for EGI VOs: VOMS configuration

- Create empty groups fedcloud.egi.eu, ops and dteam in OpenNebula.

Perun integration

The current rOCCI-server implementation doesn’t handle user management and identity propagation, hence integration with a third-party service is necessary. The Perun VO management server developed and maintained by CESNET is used to provide user management capabilities for OpenNebula Resource Providers. It uses locally installed scripts (fully under the control of the Resource Provider in question) to propagate changes in the user pool to all registered Resource Providers. Resource Providers are required to install and configure (if need be) these scripts and report back to the EGI Cloud Federation for registration in Perun. Installation and configuration details are available online in the EGI-FCTF/fctf-perun GitHub repository.

Remember that Perun requires SSH access to your machine, so that it can invoke the scripts and push user account changes to your site!

Manual account management

If you want to use X.509/VOMS authentication for your users, you need to create users in OpenNebula with the X.509 driver. For a user named 'johnsmith' from the fedcloud.egi.eu VO the command may look like this

$ oneuser create johnsmith "/DC=es/DC=irisgrid/O=cesga/CN=johnsmith/VO=fedcloud.egi.eu/Role=NULL/Capability=NULL" --driver x509

Then set the properties of the new user:

$ oneuser update <id_x509_user> X509_DN="/DC=es/DC=irisgrid/O=cesga/CN=johnsmith"
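In the example above, the identity passed to `oneuser create` is the certificate DN followed by `/VO=<vo>/Role=NULL/Capability=NULL`. A small helper can build that string consistently for several users. This is a sketch derived only from the example, and the function name `voms_dn` is hypothetical.

```shell
# Sketch only: build the VOMS-qualified identity string used with --driver x509.
# Usage: voms_dn <certificate-dn> <vo-name>
voms_dn() {
    local dn="$1" vo="$2"
    printf '%s/VO=%s/Role=NULL/Capability=NULL\n' "$dn" "$vo"
}
```

It could be used as `oneuser create johnsmith "$(voms_dn "/DC=es/DC=irisgrid/O=cesga/CN=johnsmith" fedcloud.egi.eu)" --driver x509`.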

EGI Accounting

See OpenNebula Accounting Scripts.

EGI Information System

Sites must publish information to the EGI Information System, which is based on BDII. There is a common BDII provider for all cloud management frameworks. Information on installation and configuration is available in the cloud-bdii-provider README.md and in the Fedclouds BDII instructions, which include a specific section with OpenNebula details.

EGI Image Management

The current version of this integration component requires manual intervention from the site administrator when a new appliance/image is registered (NOT on subsequent updates). The site administrator must manually create a Virtual Machine Template and, in this template, reference the image in question by IMAGE and IMAGE_UNAME. This is a temporary workaround and will be removed in the next release of the vmcatcher integration component.

Sites in FedCloud offering VM management capability must give access to VO-endorsed VM images. This functionality is provided by vmcatcher (which can subscribe to the image lists available in AppDB) and a set of tools that push the subscribed images into the OpenNebula image datastore. To subscribe to VO-wide image lists, you need a valid access token for the AppDB. See the documentation on how to access VO-wide image lists and how to subscribe to a private image list for more information.

Please refer to the vmcatcher documentation for installation.

vmcatcher_eventHndlExpl_ON is a vmcatcher event handler for OpenNebula that stores or disables images based on the vmcatcher response. The following guide shows how to install and configure the vmcatcher handler as the oneadmin user, directly from GitHub. The configuration automatically synchronizes the OpenNebula image datastore with the registered vmcatcher images.

- Install pre-requisites for VMCatcher handler

[oneadmin@one-sandbox]$ sudo yum install -y qemu-img

- Install VMcatcher handler from github

[oneadmin@one-sandbox]$ mkdir $HOME/vmcatcher_eventHndlExpl_ON

[oneadmin@one-sandbox]$ cd $HOME/vmcatcher_eventHndlExpl_ON

[oneadmin@one-sandbox]$ wget http://github.com/grid-admin/vmcatcher_eventHndlExpl_ON/archive/v0.0.8.zip -O vmcatcher_eventHndlExpl_ON.zip

[oneadmin@one-sandbox]$ unzip vmcatcher_eventHndlExpl_ON.zip

[oneadmin@one-sandbox]$ mv vmcatcher_eventHndlExpl_ON*/* ./

[oneadmin@one-sandbox]$ rmdir vmcatcher_eventHndlExpl_ON-*

- Create the vmcatcher folders for ON (do not use /var/lib/one/ or other OpenNebula default directories for the vmcatcher cache, since you cannot import images into OpenNebula from these directories. Also, since this directory will host a copy of all the images downloaded via vmcatcher, it is suggested to place the directory into a separate disk)

[oneadmin@one-sandbox]$ sudo mkdir -p /opt/vmcatcher-ON/cache /opt/vmcatcher-ON/cache/partial /opt/vmcatcher-ON/cache/expired /opt/vmcatcher-ON/cache/templates

[oneadmin@one-sandbox]$ sudo chown oneadmin:oneadmin -R /opt/vmcatcher-ON

- Check that vmcatcher is running properly by listing and subscribing to an image list

[oneadmin@one-sandbox]$ export VMCATCHER_RDBMS="sqlite:////opt/vmcatcher-ON/vmcatcher.db"

[oneadmin@one-sandbox]$ vmcatcher_subscribe -l

[oneadmin@one-sandbox]$ vmcatcher_subscribe -e -s https://vmcaster.appdb.egi.eu/store/vappliance/tinycorelinux/image.list

[oneadmin@one-sandbox]$ vmcatcher_subscribe -l

8ddbd4f6-fb95-4917-b105-c89b5df99dda True None https://vmcaster.appdb.egi.eu/store/vappliance/tinycorelinux/image.list

- Create a cron wrapper for vmcatcher, named `/var/lib/one/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON_cron.sh`, using the following code:

#!/bin/bash

#Cron handler for VMCatcher image synchronization script for OpenNebula

#Vmcatcher configuration variables

export VMCATCHER_RDBMS="sqlite:////opt/vmcatcher-ON/vmcatcher.db"

export VMCATCHER_CACHE_DIR_CACHE="/opt/vmcatcher-ON/cache"

export VMCATCHER_CACHE_DIR_DOWNLOAD="/opt/vmcatcher-ON/cache/partial"

export VMCATCHER_CACHE_DIR_EXPIRE="/opt/vmcatcher-ON/cache/expired"

export VMCATCHER_CACHE_EVENT="python $HOME/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON"

#Update vmcatcher image lists

vmcatcher_subscribe -U

#Add all the new images to the cache

for a in `vmcatcher_image -l | awk '{if ($2==2) print $1}'`; do

vmcatcher_image -a -u $a

done

#Update the cache

vmcatcher_cache -v -v

- Set the newly created file as executable and test that the vmcatcher handler is working correctly by running:

[oneadmin@one-sandbox]$ chmod +x $HOME/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON_cron.sh

[oneadmin@one-sandbox]$ $HOME/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON_cron.sh

INFO:main:Defaulting actions as 'expire', and 'download'.

DEBUG:Events:event 'ProcessPrefix' executed 'python /var/lib/one/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON'

DEBUG:Events:stdout=

DEBUG:Events:stderr=2014-07-16 12:25:49,586; DEBUG; vmcatcher_eventHndl_ON; main -- Processing event 'ProcessPrefix'

2014-07-16 12:25:49,586; WARNING; vmcatcher_eventHndl_ON; main -- Ignoring event 'ProcessPrefix'

INFO:DownloadDir:Downloading '541b01a8-94bd-4545-83a8-6ea07209b440'.

DEBUG:Events:event 'AvailablePrefix' executed 'python /var/lib/one/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON'

DEBUG:Events:stdout=

DEBUG:Events:stderr=2014-07-16 12:26:00,522; DEBUG; vmcatcher_eventHndl_ON; main -- Processing event 'AvailablePrefix'

2014-07-16 12:26:00,522; WARNING; vmcatcher_eventHndl_ON; main -- Ignoring event 'AvailablePrefix'

INFO:CacheMan:moved file 541b01a8-94bd-4545-83a8-6ea07209b440

DEBUG:Events:event 'AvailablePostfix' executed 'python /var/lib/one/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON'

DEBUG:Events:stdout=

DEBUG:Events:stderr=2014-07-16 12:26:00,567; DEBUG; vmcatcher_eventHndl_ON; main -- Processing event 'AvailablePostfix'

2014-07-16 12:26:00,567; DEBUG; vmcatcher_eventHndl_ON; HandleAvailablePostfix -- Starting HandleAvailablePostfix for '541b01a8-94bd-4545-83a8-6ea07209b440'

2014-07-16 12:26:00,571; INFO; vmcatcher_eventHndl_ON; UntarFile -- /opt/vmcatcher-ON/cache/541b01a8-94bd-4545-83a8-6ea07209b440 is an OVA file. Extracting files...

2014-07-16 12:26:00,599; INFO; vmcatcher_eventHndl_ON; UntarFile -- Converting /opt/vmcatcher-ON/cache/templates/541b01a8-94bd-4545-83a8-6ea07209b440/CoreLinux-disk1.vmdk to raw format.

2014-07-16 12:26:00,641; INFO; vmcatcher_eventHndl_ON; UntarFile -- New RAW image created: /opt/vmcatcher-ON/cache/templates/541b01a8-94bd-4545-83a8-6ea07209b440/CoreLinux-disk1.vmdk.raw

2014-07-16 12:26:00,642; INFO; vmcatcher_eventHndl_ON; HandleAvailablePostfix -- Creating template file /opt/vmcatcher-ON/cache/templates/541b01a8-94bd-4545-83a8-6ea07209b440.one

2014-07-16 12:26:00,780; INFO; vmcatcher_eventHndl_ON; getImageListXML -- Getting image list: oneimage list --xml

2014-07-16 12:26:00,784; INFO; vmcatcher_eventHndl_ON; HandleAvailablePostfix -- There is not a previous image with the same UUID in the OpenNebula infrastructure

2014-07-16 12:26:00,785; INFO; vmcatcher_eventHndl_ON; HandleAvailablePostfix -- Instantiating template: oneimage create -d default /opt/vmcatcher-ON/cache/templates/541b01a8-94bd-4545-83a8-6ea07209b440.one | cut -d ':' -f 2

DEBUG:Events:event 'ProcessPostfix' executed 'python /var/lib/one/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON'

DEBUG:Events:stdout=

DEBUG:Events:stderr=2014-07-16 12:26:01,077; DEBUG; vmcatcher_eventHndl_ON; main -- Processing event 'ProcessPostfix'

2014-07-16 12:26:01,077; WARNING; vmcatcher_eventHndl_ON; main -- Ignoring event 'ProcessPostfix'

- Add the following line to the oneadmin user crontab:

50 */6 * * * $HOME/vmcatcher_eventHndlExpl_ON/vmcatcher_eventHndl_ON_cron.sh >> /var/log/vmcatcher.log 2>&1

NOTES:

- vmcatcher_cache must be executed as oneadmin user.

- Environment variables can be used to set default values but the command line options will override any set environment options. Set these env variables for oneadmin user: VMCATCHER_RDBMS, VMCATCHER_CACHE_DIR_CACHE, VMCATCHER_CACHE_DIR_DOWNLOAD, VMCATCHER_CACHE_DIR_EXPIRE and VMCATCHER_CACHE_EVENT.

- vmcatcher_eventHndlExpl_ON generates ON image templates. These templates are available from $VMCATCHER_CACHE_DIR_CACHE/templates, and each template file is named $VMCATCHER_EVENT_DC_IDENTIFIER.one.

- The new ON images include VMCATCHER_EVENT_DC_IDENTIFIER = <VMCATCHER_UUID> tag. This tag is used to identify Fedcloud VM images.

- VMcatcher expired images are set as disabled by ON. It is up to the RP to remove disabled images or assign the new ones to a specific ON group or user.
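Since the notes above ask for five environment variables to be set for the oneadmin user, a quick check can catch a missing one before vmcatcher is run interactively. The sketch below uses a hypothetical function name, `missing_vmcatcher_vars`, and treats an empty value as missing.

```shell
# Sketch only: print the name of every vmcatcher variable that is unset or empty.
# Returns 0 when all five variables have a value, 1 otherwise.
missing_vmcatcher_vars() {
    local name value missing=0
    for name in VMCATCHER_RDBMS VMCATCHER_CACHE_DIR_CACHE \
                VMCATCHER_CACHE_DIR_DOWNLOAD VMCATCHER_CACHE_DIR_EXPIRE \
                VMCATCHER_CACHE_EVENT
    do
        # Indirect lookup: read the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "$name"
            missing=1
        fi
    done
    return "$missing"
}
```

For example, `missing_vmcatcher_vars || echo "set the variables listed above first"` could be placed at the top of an interactive helper script.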

Registration of services in GOCDB

Site cloud services must be registered in the EGI Configuration Management Database (GOCDB). If you are creating a new site for your cloud services, check the PROC09 Resource Centre Registration and Certification procedure. Services can also coexist within an existing (grid) site.

If offering OCCI interface, sites should register the following services:

- eu.egi.cloud.vm-management.occi for the OCCI endpoint offered by the site. Please note the special endpoint URL syntax described at GOCDB usage in FedCloud

- eu.egi.cloud.accounting (host should be your OCCI machine)

- eu.egi.cloud.vm-metadata.vmcatcher (also host is your OCCI machine)

- Sites should also declare the following properties using the Site Extension Properties feature:

  - Max number of virtual cores for a VM, with parameter name `cloud_max_cores4VM`

  - Max amount of RAM for a VM, with parameter name `cloud_max_RAM4VM`, using the format value+unit, e.g. "16GB"

  - Max amount of storage that could be mounted in a VM, with parameter name `cloud_max_storage4VM`, using the format value+unit, e.g. "16GB"

Once the site services are registered in GOCDB and set as monitored they will be checked by the Cloud SAM instance.

Installation Validation

You can check your installation following these steps:

- Check in Cloudmon that your services are listed and are passing the tests. If all the tests are OK, your installation is already in good shape.

- Check that you are publishing cloud information in your site BDII:

ldapsearch -x -h <site bdii host> -p 2170 -b Glue2GroupID=cloud,Glue2DomainID=<your site name>,o=glue

- Check that all the images listed in the AppDB page for the fedcloud.egi.eu VO are listed in your BDII. This sample query will return all the template IDs registered in your BDII:

ldapsearch -x -h <site bdii host> -p 2170 -b Glue2GroupID=cloud,Glue2DomainID=<your site name>,o=glue objectClass=GLUE2ApplicationEnvironment GLUE2ApplicationEnvironmentRepository

- Try to start one of those images in your cloud. You can do it with `onetemplate instantiate` or OCCI commands; the result should be the same.

- Execute the site certification manual tests against your endpoints.

- Check in the accounting portal that your site is listed and the values reported look consistent with the usage of your site.