EGI Roadmap and Technology

The following describes the EGI Capabilities and the expected implementations from the available EGI Technology Providers.

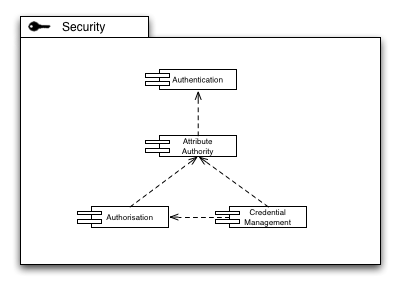

Security

Security capabilities form an important foundation of a distributed production infrastructure, for obvious reasons. The challenge is, however, to carefully model the Security Capabilities so that no unintentional dependencies creep into the architecture, and that a clear boundary definition allows for scalable distribution of Security Capabilities within the production infrastructure.

The establishment of a secure technical identity of an individual across the production infrastructure is a key capability. The acceptance of a set of identity providers (whether federated or centralised as in the current model of X.509 based authentication) is required to lay the basis for an identity/attribute approach to the identification of a user within the infrastructure.

Accepting that a technical identity is not enough to accommodate all use cases for the Grid, an Attribute Authority establishes the concept of roles, or context-based identity of a Grid user: the same user may be an administrator of a site in one context, and a scientist conducting research using the Grid (as a scientific user) in another. Although the technical identity is the same, the roles in both contexts are clearly separated yet securely affixed to the pertinent user identity. In many cases, the Authentication Authority and the Attribute Authority may be identical. However, in federated authentication scenarios the Attribute Authority is in most cases located within the perimeter of one or more VOs the user may be affiliated with.

Based on the attributes securely affixed to an identity, resources and services in the Production Infrastructure must make decisions to allow or deny access, based on the attribute-decorated identity information and any rules stored at that resource or service.

Many use cases of the Grid require the concept of delegation of trust, mostly for procedural purposes, or to comply with policy on a certain site. For those use cases, issuing credentials on demand based on a long-term established identity is a key feature of Credential Management. It is important though that access to on-demand issuing of credentials is guarded through authorisation mechanisms. Interestingly enough, Credential Management itself thus provides at the same time Authentication and Authorisation functionality from a service point of view.

Authentication

An authentication token that is strongly bound to an individual must be applied consistently across the software used within the production infrastructure. The authentication system must be capable of supporting a delegation model.

Irrespective of the actual format and infrastructure employed, authenticating an individual is a two-step process: first, the token is created and issued; second, trust in the presented token is established by cryptographically verifying its integrity against a set of well-defined trust anchors.

Simple authentication infrastructures, such as classic username and password systems, often co-locate token issuance and trust establishment in a single conceptual location, for example a username and password file (e.g. “/etc/passwd” in Linux systems) or a distributed replication (e.g. the EGI.eu SSO system).

More complex authentication infrastructures separate the token issuance from establishing the trust in a presented authentication token. A PKI as it is employed in the EGI production infrastructure takes the token issuance service completely offline, reducing the actual establishment of trust to a configuration detail: the architecture of the PKI allows a generic implementation of a cryptographically secure verification of an X.509v3-based certificate chain, resulting in a fundamental trust decision (i.e. to trust or not to trust the presented authentication token) based on configuration, as available in the EGI Software Repository.

Supported interfaces

The primary authentication token within the infrastructure is the X.509v3 certificate and its proxy derivatives. Accessing resources through protocols that are secured using SSL or TLS (e.g. plain socket, or https connections) must employ X.509v3 certificates. An alternative, standards-based authentication language is SAML 2.0, allowing for more flexible federated authentication solutions geared towards the end-user of the Grid.

Shibboleth is a community-driven solution based on SAML that provides federated authentication mechanisms; OpenID is a separate community-driven protocol with the same goal. PKI-based Authentication (and eventually, also Authorisation) is sustainable in slow-changing environments; otherwise the management effort becomes too high.

In an environment where individual user affiliation with a project, a VO, or an experiment may range from months to years, a reliable prediction of domain-specific AAI requirements is difficult across all current and future user communities.

Attribute Authority

Resources within the production infrastructure are made available to controlled collaborations of users represented in the infrastructure through Virtual Organisations (VOs). Access to a VO is governed by a VO manager who is responsible for managing the addition and removal of users and the assignment of users to groups and roles within the VO.

The main service that any Attribute Authority provides is the issuance of signed tokens that express a subject’s extended information such as VO membership, special roles within an organisational context etc. Typically, an implementation comes with a proprietary administration interface or functionality.

Supported interfaces

Two types of interfaces are required for an interoperable Attribute Authority implementation:

- A language and format containing the actual attribute statement

- An access interface for clients to contact the Attribute Authority service. Administration interfaces are considered an implementation detail.

SAML 2.0 provides for a standardised language to express authoritative statements about a subject’s attributes, particularly in scenarios that employ mechanisms of federated identity. The prevalent means of expressing subject attributes reuse PKI X.509 certificates, standardised in RFC 3281. Currently, there is no standardised access interface known for Attribute Authority services to implement.

Authorisation

The implementation of access control policy – authorisation – needs to take place on many levels. Sites will wish to restrict access to particular VOs and individuals. Sites or VOs may wish to stop certain users accessing particular services. The infrastructure as a whole may need to ban particular users. Policy Enforcement Points (PEPs) will be embedded into many components throughout the infrastructure and will use Policy Decision Points (PDPs) to drive access control decisions.

In a service oriented Grid infrastructure the Policy Decision Point provides the fundamental service to other services, including any number of Policy Enforcement Points. Often a Policy Information Point (PIP) is co-located with a Policy Decision Point, providing human-readable renderings of the technical access policies stored in the PDP.

A number of use cases mandate that implementations must support distributed deployment of the PDP (and perhaps the PIP), for example for performance reasons, or policy management reasons. In such scenarios all PDP services must expose an identical set of access interfaces and information description languages.
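
The split between enforcement and decision described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class names, the `(vo, role, service)` rule shape and the `"Permit"`/`"Deny"` strings are hypothetical and do not correspond to any specific EGI component or to the XACML wire format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    subject: str  # technical identity, e.g. a certificate subject
    vo: str       # VO membership asserted by an Attribute Authority
    role: str     # role within that VO
    service: str  # service the subject wants to use


class PolicyDecisionPoint:
    """Holds the policy and answers permit/deny questions (hypothetical sketch)."""

    def __init__(self, banned_subjects, allowed):
        self.banned_subjects = set(banned_subjects)  # infrastructure-wide bans
        self.allowed = set(allowed)                  # (vo, role, service) triples

    def decide(self, request):
        # A ban on the identity overrides any attribute-based permission.
        if request.subject in self.banned_subjects:
            return "Deny"
        if (request.vo, request.role, request.service) in self.allowed:
            return "Permit"
        return "Deny"  # default deny


class PolicyEnforcementPoint:
    """Embedded in a service; delegates every decision to a PDP."""

    def __init__(self, pdp):
        self.pdp = pdp

    def access(self, request):
        if self.pdp.decide(request) != "Permit":
            raise PermissionError(f"{request.subject} denied on {request.service}")
        return f"{request.subject} may use {request.service}"
```

Because the enforcement point only calls `decide`, several PDP instances exposing the same method could be deployed side by side, which mirrors the requirement that distributed PDP services expose identical interfaces.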

Supported interfaces

SAML offers basic Authorisation mechanisms. However, the combination of SAML and XACML, both from OASIS, covers a much wider range of authorisation needs.

XACML provides clear definitions and scope for PEPs and PDPs, allowing different implementations along those interface definitions deployed in the infrastructure.

Another industry quasi-standard is OAuth, which provides for authentication and authorisation for delegated access to specific data. Facebook, amongst others, is a main driver of OAuth.

Credential Management

The Credential Management capability provides an interface for obtaining, delegating and renewing authentication credentials by a client using a remote service.

Supported interfaces

One of the key functionalities in this area is the linking of institutional authentication systems to the transparent issuing of certificates for use in the infrastructure through identity federations. This should be provided for community deployment through the use of web portals and web service interfaces.

Although fundamentally a community-driven service, Credential Management nonetheless sits on the seam towards the use of the generic production infrastructure and thus depends on the AAI currently deployed EGI-wide.

With the drive towards a clearer and stricter separation of Core and Community Capabilities, the technical dependencies of Credential Management implementations will transition to depend on AAI solutions chosen for the respective community domain.

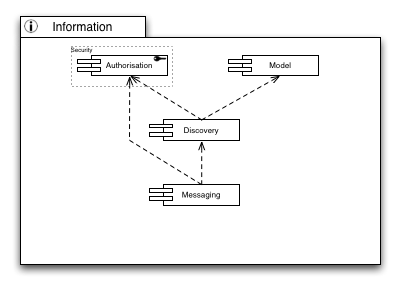

Information

Information is key in distributed infrastructure. Both users and administrators need to know which services are deployed in the infrastructure, which resources are available for consumption or are saturated with compute or storage requests by users, etc.

It is quite obvious that a common language must be available. The Model Capability provides such a language for modelling resources present in the infrastructure, their connections and dependencies, together with a common understanding of how to interpret its constructs when modelling a given resource.

With a common language at hand, presence and availability discovery of services and resources is possible without ambiguity. Through a well-known “lighthouse” approach in discovery services, users may learn of, or discover, services that may be helpful in solving the user’s needs by querying those information services with search expressions.

Connecting services with each other is the primary use case for a messaging infrastructure in order to serve a set of given inter-service communication patterns. However, to programmatically (or automatically) connect those services with each other, the messaging facilities and channels must be well known – hence they must be discoverable and searchable through the Discovery Capability.

However, not all services or messaging endpoints may be accessible to every user who is generally allowed to access the Grid. Hence proper distributed Authorisation services are required to allow granular access to services deployed in the infrastructure.

Information Model

When exchanging information about services, resources, and data a common understanding of the metadata describing such entities is necessary. A common definition of the terms, the syntax, and semantics of basic and complex metadata structures ensures interoperability and integration of systems from different Technology Providers, when exchanging metadata in requests and responses.

Supported interfaces

The information about the resources is described using the GLUE schema from the Open Grid Forum. Currently this is GLUE 1.3 with migration underway to GLUE 2.0.

Information Discovery

Information discovery is a capability that helps find the required resources that have been registered with it within the production infrastructure. The information collected about such resources is made available through well-known instances that provide the data to some logical collection, infrastructure wide, regional, site, domain, etc.

Clients of such a service must be able to search, filter, and order the available information until their initial request is satisfied. To enable search and discovery on various levels of the infrastructure it is important to reiterate that any implementation of the Information Discovery Capability must at the same time make use of the Information Model capability defined earlier in this document.

Supported interfaces

The LDAPv3 (RFC 4510) protocol and search syntax are used to query information from the information discovery services and to encapsulate the information payload, relating to the services offered within the production infrastructure, that is exchanged between instances.
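
As an illustration of the search syntax, the following Python sketch assembles an LDAP search filter string in the form defined by RFC 4515. The helper names are hypothetical, and the attribute names in the example (`GlueService`, `GlueServiceType`) are assumed here to follow the GLUE 1.3 LDAP rendering; they should be checked against the schema actually deployed. No LDAP server is contacted.

```python
def escape_filter_value(value: str) -> str:
    """Escape the characters that RFC 4515 reserves inside filter values."""
    replacements = {
        "\\": r"\5c",
        "*": r"\2a",
        "(": r"\28",
        ")": r"\29",
        "\x00": r"\00",
    }
    return "".join(replacements.get(ch, ch) for ch in value)


def and_filter(**conditions: str) -> str:
    """Combine attribute=value equality tests into a single AND filter."""
    parts = "".join(
        f"({attribute}={escape_filter_value(value)})"
        for attribute, value in conditions.items()
    )
    return f"(&{parts})"


# Hypothetical query: all published services of one (made-up) service type.
query = and_filter(objectClass="GlueService", GlueServiceType="example-type")
print(query)  # (&(objectClass=GlueService)(GlueServiceType=example-type))
```

Escaping the value before it is embedded keeps user-supplied search terms from changing the structure of the filter.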

There are currently no interfaces defined for an interoperable management of the data that is published through this Capability, except for implementation specific interfaces.

However, a desirable integration might re-use implementations of the Messaging Capability to enable scalable dissemination and management of the published information.

Messaging

Any kind of distributed computing system faces the challenge that participating services have to communicate with each other. Though many such challenges concern system design and architecture, a common messaging language, protocols and patterns connect the services to each other. The most commonly used messaging patterns in distributed systems are store-and-forward (JMS calls this point-to-point) and publish-subscribe.

Establishing a messaging infrastructure solves many scalability issues commonly found in distributed systems. Within distributed systems, a message ‘bus’ provides a reliable mechanism for data items to be sent between producers and (multiple) consumers. Such a capability, once established, can be reused by many different software services.
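
The difference between the two patterns can be shown with a minimal in-memory sketch. It is not a real broker and does not use JMS or AMQP; the `Broker` class and its method names are hypothetical and exist only to contrast the delivery semantics.

```python
from collections import deque


class Broker:
    """Toy in-memory broker contrasting the two delivery patterns."""

    def __init__(self):
        self.queues = {}       # queue name -> deque of pending messages
        self.subscribers = {}  # topic name -> list of callbacks

    # Point-to-point (store-and-forward): each message is stored until
    # exactly one consumer fetches it.
    def send(self, queue, message):
        self.queues.setdefault(queue, deque()).append(message)

    def receive(self, queue):
        pending = self.queues.get(queue)
        return pending.popleft() if pending else None

    # Publish-subscribe: every current subscriber gets its own copy.
    def subscribe(self, topic, callback):
        self.subscribers.setdefault(topic, []).append(callback)

    def publish(self, topic, message):
        for callback in self.subscribers.get(topic, []):
            callback(message)


broker = Broker()

broker.send("accounting", "usage-record-1")
first = broker.receive("accounting")   # the stored message
second = broker.receive("accounting")  # None, it was consumed once

inbox_a, inbox_b = [], []
broker.subscribe("site-status", inbox_a.append)
broker.subscribe("site-status", inbox_b.append)
broker.publish("site-status", "site entering maintenance")
```

After this runs, the queued message has been delivered once, while both subscriber inboxes hold the published status message.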

Supported interfaces

The Java Message Service (JMS) is the de-facto standard for Java-based messaging systems. In its current version 1.1, JMS is widely used in the commercial world, including as a means for Enterprise Application Integration. Supporting publish-subscribe and point-to-point modes, JMS covers the messaging patterns most commonly needed in distributed computing. Although JMS is not language agnostic, adapters for programming languages other than Java are readily available through Apache ActiveMQ.

Emerging from the financial industry, AMQP provides a standard interface for messaging on the lowest integration layer, the wire layer. The AMQP Working Group aims to develop a messaging protocol that is eventually standardised and stewarded by a recognised SDO, such as the IETF. AMQP is fully language agnostic, and defines a message format at the byte level, intentionally leaving the payload structure unspecified. Those two features allow implementation in any given programming language, and offer integration and interoperability for any kind of applications, architectures and networks.

Messaging is clearly a Core Capability required for efficient and scalable management of the EGI production infrastructure. Messaging gains popularity in custom software solutions as a means to establish asynchronous communication to gain scalability, or for software components that are otherwise difficult to integrate on the access layer. Therefore Messaging is an emerging capability to be incorporated into User community-sourced solutions.

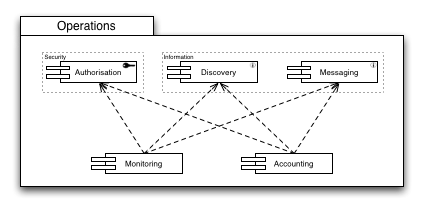

Operations

Maintaining and administering a production infrastructure poses a number of requirements on the deployed components. Monitoring the production infrastructure is a key capability that is necessary to determine in real time the current state of the infrastructure. Resources that are made available for usage are either known or must be discovered, and flexible access control protects resources that, for example, are not available for users that are part of a different infrastructure federation. As the state of the production infrastructure resources is inherently dynamic, changes in a resource’s state (e.g. availability, or load) are propagated through messaging facilities and endpoints.

Similarly, to account for how much of the provided resources are used, the Accounting Capability relies on the same supporting capabilities to provide the operations community with vital information for budget calculations and, eventually, billing facilities.

Monitoring

A production infrastructure is primarily defined by its availability, reliability and security – if its user community cannot rely on it then it is not an infrastructure. All of the resources within the infrastructure need to be monitored for the community to be assured of the quality. Such a monitoring capability is essential for the operational staff attempting to deliver the production infrastructure and the end-users seeking out reliable resources to support their research.

It is important in a service-oriented environment to distinguish between the services that are monitored, and the service that provides monitoring. In EGI all production infrastructure related services (e.g. a compute job submission service) must be monitored for a defined set of parameters (see below).

The service that provides the monitoring capability itself is delivered by EGI through the Service Availability Monitor framework (SAM).

Supported interfaces

The EGI SAM framework provides a monitoring infrastructure that is based on three cornerstones relevant for this Capability: Nagios and related service probes, which supply the functional monitoring data; the MyEGI portal, which visualises and reports the monitoring results; and a messaging infrastructure (shared with the EGI Accounting infrastructure) based on Apache ActiveMQ that ties the monitoring data extraction tool to the MyEGI data presentation layer.

This architecture defines two interfaces, which reside on the monitoring data extraction layer and the monitoring data presentation/querying layer, respectively. The interface on the extraction layer is rather an integration point in that service-specific probes are provided that plug into the Nagios service-monitoring tool. On the monitoring data presentation/query layer, both a programmatic (API) and a web-based access interface are necessary, but are currently not available.

Accounting

The use of resources within the e-Infrastructure must be recorded for a number of reasons. Statistical analysis of usage patterns, prediction of resource shortages, and billing for the actual use of resources are just some common use cases for accounting data.

Structurally, the Accounting Capability is very similar to the Monitoring Capability in its deployment and ownership of the various components: The Accounting Capability is realised by EGI through the APEL accounting repository and accounting portal. The data that is stored in the repository and visualised through the portal is supplied by the functional services directly, or through functionally orthogonal modules that parse service log files and translate the found information into accounting information.

The accounting information extraction and the central accounting data repository are linked together by an EGI-wide network of messaging brokers.
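
The log-parsing route can be sketched as follows. The log line layout, the field names and the per-VO aggregation are assumptions made for this illustration; they are not the APEL record format or the OGF Usage Record.

```python
import re
from collections import defaultdict

# Hypothetical log layout: "... user=<name> vo=<vo> cpu_seconds=<int>"
LOG_LINE = re.compile(
    r"user=(?P<user>\S+) vo=(?P<vo>\S+) cpu_seconds=(?P<cpu>\d+)"
)


def parse_usage(lines):
    """Translate matching log lines into simple usage records."""
    records = []
    for line in lines:
        match = LOG_LINE.search(line)
        if match:  # lines that do not describe a finished job are skipped
            records.append(
                {
                    "user": match["user"],
                    "vo": match["vo"],
                    "cpu_seconds": int(match["cpu"]),
                }
            )
    return records


def cpu_seconds_per_vo(records):
    """Aggregate the records the way a portal summary might."""
    totals = defaultdict(int)
    for record in records:
        totals[record["vo"]] += record["cpu_seconds"]
    return dict(totals)


sample_log = [
    "2011-05-01T10:00:00Z job=1 user=alice vo=biomed cpu_seconds=120",
    "2011-05-01T10:05:00Z scheduler heartbeat",
    "2011-05-01T10:07:00Z job=2 user=bob vo=atlas cpu_seconds=600",
    "2011-05-01T10:09:00Z job=3 user=alice vo=biomed cpu_seconds=30",
]
records = parse_usage(sample_log)
totals = cpu_seconds_per_vo(records)
```

In a deployment along the lines described above, the records would be handed to the messaging brokers rather than aggregated locally; the aggregation is included only to show what the repository side could do with them.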

Supported interfaces

OGF defines a record format for accounting data, the Usage Record (UR). It also suggests a draft of an access interface and a format for aggregated accounting records through the Resource Usage Service. Convergence to the UR standard exists only insofar as it is used together with various incompatible extensions for VO support. Based on EMI’s submission of their StAR extension to UR, the OGF UR WG is now standardising accounting records for storage services.

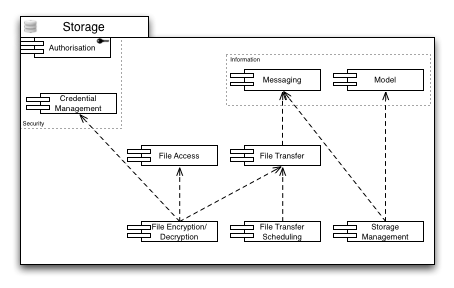

Storage

Storage capabilities are necessary for any kind of long(er) term availability of data, whether raw (e.g. taken directly from an instrument), or digested in any kind of form or shape.

For any Storage Capabilities, Authorisation is necessary to allow access control to the provided resources and services. Hence instead of populating the diagram with a plethora of arrows that disguise the important dependencies in this area, this general prerequisite is symbolised by attaching the Authorisation Capability to the outer boundary of the Storage Capabilities.

Managing Storage resources is an important task in day-to-day Grid business. Storage resources may have to be taken offline, into maintenance, or newly created resources must be set up, and integrated into the infrastructure. Once the management tasks are completed, the changes must be propagated through the infrastructure using the deployed messaging capabilities. Indirectly, this requires access to the Discovery services available in the infrastructure to choose the correct messaging pipelines.

Files on the storage infrastructure are accessed as part of day-to-day business. Some of them may be encrypted, and need to be decrypted in a seamless way. Credential Management is necessary to delegate the decryption (or encryption) process from the user to the File Access, or the File Transfer service, respectively. At the same time, file transfers consume resources that need to be properly accounted for. Hence the resource consumption is forwarded to the accounting service through the messaging infrastructure.

File Encryption/Decryption

Sensitive data needs to be stored securely. Before being stored in a remote file store the file may need to be encrypted, and then decrypted on retrieval before use. The capability should also provide solutions relating to the storage of the keys needed to perform these tasks.

Supported interfaces

There are no standardised interfaces for File Encryption/Decryption, whether seamless, transparent or integrated. The encryption and decryption steps are distinct tasks in a small workflow for a compute job. However, the key-handling interface will be described in future versions of the roadmap following input from the EGI Community.

File Access

File Access provides an abstraction of a file resource that may be located remotely on a storage element anywhere in the Grid. The physical nature of the storage element or the storage means is unknown to the client, whether disk array, tape, distributed file system, or something else. Access to the file resource includes bulk read and bulk write, block read and write and perhaps striped block read and write.

Supported interfaces

There exist many different standards that are appropriate for File Access implementations. Available standards fall into two categories that differ in their mode of access for the client:

- Interfaces that expose the remote nature of File Access

- Interfaces that integrate with local file system access (and hide the remote nature of the file resource).

The single most widespread interface for local file system based file access is POSIX (Portable Operating System Interface for Unix), defined by IEEE. Many different implementations map POSIX to specific, and sometimes proprietary, file systems, such as FAT, VFAT, ext2/3/4, ReiserFS, XFS, btrfs, or DFS, to name but a few. Popular protocols that map remote file resources into the local file system of any given system are CIFS (formerly SMB) and NFS. While those protocols are mainly suited for permanent or semi-permanent access to remote files, FUSE allows elastic, transient and temporary access to remote files without root/Administrator involvement, bridging any suitable remote file access protocol into any user’s home directory (or writable file system areas) for on-demand access using common POSIX-compatible local file access methods.

Non-integrated, proprietary File Access interfaces are DCAP, RFIO, and XROOTD, which are all deployed to various degrees in the EGI infrastructure. Protocols that were not originally designed for File Access but are nonetheless suitable are HTTP(S) (even in parallel) and WebDAV.

File Transfer

Files are stored at different physical locations within the production infrastructure and are frequently used at other locations. It is necessary for the files to be efficiently transferred over the international wide area networks linking the different resource centres. Typically, a dedicated service provides the File Transfer capability, offered through potentially many instances of the same service software for scalability reasons.

Supported interfaces

The GridFTP protocol is used extensively in production infrastructures around the world alongside protocols such as HTTP/HTTPS that have been developed outside of this community. GridFTP provides the functionality to read, write and list data files stored at remote locations.

File Transfer Scheduling

The bandwidth linking resource sites is a resource that needs to be managed in the same way compute resources at a site are accessed through a job scheduler. By being able to schedule wide area data transfers, requests can be prioritised and managed. This would include the capability to monitor and restart transfers as required.
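
A minimal sketch of such a scheduler is shown below, assuming a numeric priority (lower runs first) and a fixed retry limit. The class, its parameters and the `transfer` callback are hypothetical; a real service would hand the transfer to a protocol such as GridFTP instead of calling a Python function.

```python
import heapq
import itertools


class TransferScheduler:
    """Toy scheduler: prioritised queue of transfers with bounded restarts."""

    def __init__(self, max_attempts=3):
        self.max_attempts = max_attempts
        self._heap = []
        # The counter breaks ties so equal priorities run in submission order.
        self._counter = itertools.count()

    def submit(self, source, destination, priority=10, attempts=0):
        entry = (priority, next(self._counter), source, destination, attempts)
        heapq.heappush(self._heap, entry)

    def run(self, transfer):
        """Run all queued transfers; transfer(source, destination) returns bool."""
        completed, failed = [], []
        while self._heap:
            priority, _, source, destination, attempts = heapq.heappop(self._heap)
            if transfer(source, destination):
                completed.append((source, destination))
            elif attempts + 1 < self.max_attempts:
                # Restart: requeue with the same priority and one more attempt used.
                self.submit(source, destination, priority, attempts + 1)
            else:
                failed.append((source, destination))
        return completed, failed
```

Keeping the attempt count inside the queue entry means a restarted transfer competes again with everything else at its priority level, rather than blocking the queue while it retries.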

Supported interfaces

The only known standardised interface that allows File Transfer Scheduling is the Data Movement Interface (DMI) developed in OGF. At least two implementations are known that fully implement the interfaces defined within DMI, but no production implementation has been reported.

Storage Management

Storage Management refers to the ability of managing a storage resource, from simple hard disk-based systems to complex hierarchical systems. Typical deployments are to bundle a management interface with the respective local storage element into one service, so that it provides both the File Access, and the Storage Management capability. Alternatively, a dedicated service implementing Storage Management is capable of managing many remote storage nodes.

Supported interfaces

The most commonly used specification is SRM (Storage Resource Management) from the OGF. However, ambiguities in the interface definition and description need to be addressed before unified SRM based management of storage resources can be achieved. Moreover, different implementations, for example from IGE and EMI, vary in adoption of the standard, and a common subset or full adoption must be agreed upon, before interoperability between providers can be achieved.

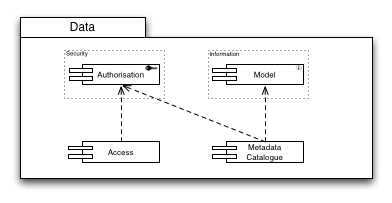

Data

Data Access becomes increasingly important in contemporary distributed computing infrastructures.

Fine-grained access control to distributed data sets is required to protect copyrighted material or data covered by non-open access licenses from unauthorised access.

The same fine-grained access control is necessary to protect searches and indexing activities for data catalogues.

Database Access

Many communities are moving to the use of structured data stored in relational databases. These need to be accessible for controlled use by remote users like any other e-Infrastructure resource.

Supported interfaces

The OGF family of standards developed in the DAIS WG provides standardised access to relational (WS-DAIR) and XML-structured data (WS-DAIX).

Metadata Catalogue

The metadata catalogue is used to store and query information relating to the data (files, databases, etc.) stored within the production infrastructure. An integral part of this functionality is not only to query about the existence of a file that may satisfy the needs of the enquiring user, but also the ability to resolve to a concrete description of the location of the file itself.

Typically, metadata catalogues are provided by dedicated service implementations and deployments for scalability reasons, and to provide metadata catalogues that feature different metadata sets for different user communities.
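
The two halves of this functionality, querying by metadata and resolving to a concrete location, can be sketched as below. Since no standard interface exists, every name here (the class, its methods, the identifiers and the URLs) is hypothetical and chosen only for illustration.

```python
class MetadataCatalogue:
    """Toy catalogue: query by metadata, then resolve to storage locations."""

    def __init__(self):
        self._metadata = {}  # logical identifier -> metadata dictionary
        self._replicas = {}  # logical identifier -> list of storage locations

    def register(self, identifier, metadata, locations):
        self._metadata[identifier] = dict(metadata)
        self._replicas[identifier] = list(locations)

    def query(self, **criteria):
        """Return identifiers whose metadata matches every criterion."""
        return sorted(
            identifier
            for identifier, metadata in self._metadata.items()
            if all(metadata.get(key) == value for key, value in criteria.items())
        )

    def resolve(self, identifier):
        """Map a logical identifier to the locations of the stored data."""
        return list(self._replicas[identifier])


catalogue = MetadataCatalogue()
catalogue.register(
    "lfn:/example/run42.dat",
    {"experiment": "demo", "year": 2011},
    ["gsiftp://se1.example.org/data/run42.dat"],
)
catalogue.register(
    "lfn:/example/run43.dat",
    {"experiment": "demo", "year": 2012},
    ["gsiftp://se2.example.org/data/run43.dat"],
)
matches = catalogue.query(experiment="demo", year=2011)
locations = catalogue.resolve(matches[0])
```

Different user communities could register different metadata keys in separate catalogue instances, which is one way to read the deployment pattern described above.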

Supported interfaces

To be described in detail in future versions of the roadmap following input from the EGI Community. Functionalities include the ability to store and query information relating to a data item, including its location and the mapping of persistent storage identifiers to the locations of the stored data.

At the moment, there are no standard interfaces known.

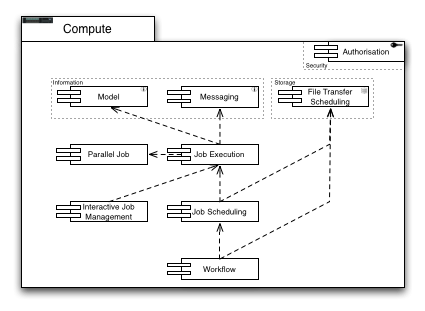

Compute

Capabilities providing access to distributed computing resources are the heart of most distributed computing infrastructures.

Just like the Storage Capabilities, the Compute Capabilities require proper access control mechanisms delivered through generally available implementations of Authorisation.

The Job Execution Capability plays a central role in this group of capabilities. Usage records are provided to the accounting service through messaging, and using a common language for modelling ensures that no ambiguities exist in the execution and accounting of submitted compute jobs. To provide support for parallel job execution, appropriate libraries and job models are necessary. Compute Jobs need to be scheduled to accommodate user constraints such as resource usage and finishing time, and operational constraints such as average resource utilisation and load. Input files and output files need to be transferred from and to a specified storage location, which already forms a simple, basic workflow for components to provide. Higher-level workflows include scheduling compute jobs and file transfers, among other domain-specific tasks that are not covered in the UMD Roadmap.

Job Execution

The Job Execution Capability relates to the ability to describe, submit, manage and monitor a work item on a specific site submitted for either queued batch or interactive execution.

Supported interfaces

There are a number of different proprietary interfaces currently in production use that provide the ability to describe, submit and manage an interactive or batch work item on a specific site. Activity within the Open Grid Forum in recent years has led to specifications in this area: Job Submission Description Language (JSDL), the Basic Execution Service (BES), High Performance Computing Basic Profile (HPC-BP) and HPC File Staging Profile (HPC-FSP) specifications. These specifications and the experiences derived from them are forming the basis of ongoing activity within the OGF Production Grid Infrastructure Working Group. It is expected that the output from this activity will eventually lead to the interfaces that will be supported by EGI.

Parallel Job

The parallel programming paradigm is gaining greater use in the user communities within EGI. The infrastructure does not provide support at the programming level – it is not needed – but provides support for controlling the distribution of processes to physical machines within a cluster. The ability to have fine-grained control over the placement of processes for an MPI or OpenMP application is a key differential between this capability and a conventional batch job capability.

Supported interfaces

To support parallel jobs, three individual issues must be solved: Computing nodes must bear the correct parallel job library (both provider, and specific version), their ability to run parallel jobs using either of the provided libraries must be properly advertised in information systems (hence are discoverable and searchable), and the job submission must actually indicate the use of the parallel job capability.

Existing libraries for parallel job programming are:

- MPI: Message Passing Interface 1.x

- MPI: Message Passing Interface 2.x

- OpenMP

Concerning the Information Model and Discovery, GLUE 2.0 provides the necessary element, i.e. class ApplicationEnvironment, property ParallelSupport.

Submitting parallel jobs is supported in the OGF standard HPC-SPMD, which is an extension to the JSDL 1.0 standard.

Interactive Job Management

For certain use cases of distributed computing interactive access to the running job is necessary. A certain form of communication and control to the running job is required, for example to monitor the job progress or intermediate output in near-real time, or to stop, restart, or even interactively manipulate certain parameters of execution.

Supported interfaces

There are no known standardised interfaces for interactive job control. The most common communication channels used are ssh access to the process environment for normal shell-based access to program parameters and processes, or sockets that offer a limited, usually proprietary, command shell to the process itself.

Job Scheduling

Compute Job Scheduling capability refers to the ‘end-to-end’ service that can be delivered to a user in response to their request for a job to be run. This includes managing the selection of the most appropriate resource that meets the user’s requirements, the transfer of any files required as input or produced as output between their source or destination storage location and the selected computational resource, and the management of any data transfer or execution failures within the infrastructure.
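The end-to-end behaviour described above can be sketched as a small loop: select a resource, stage input, execute, stage output, and try another resource on failure. All names here (`Resource`, `TransientError`, the callbacks and the selection rule of "shortest queue wins") are assumptions made for the example, not part of any EGI scheduler.

```python
from dataclasses import dataclass


@dataclass
class Resource:
    name: str
    free_cores: int
    queued_jobs: int


class TransientError(Exception):
    """Stands in for a failed data transfer or a failed execution."""


def select_resource(resources, cores_needed, excluded=()):
    """Pick the least loaded resource that meets the requirement."""
    candidates = [r for r in resources
                  if r.free_cores >= cores_needed and r.name not in excluded]
    if not candidates:
        return None
    return min(candidates, key=lambda r: (r.queued_jobs, r.name))


def run_job(job, resources, stage_in, execute, stage_out, max_attempts=3):
    """Select, stage in, execute, stage out; on failure retry elsewhere."""
    failed = []
    for _ in range(max_attempts):
        target = select_resource(resources, job["cores"], excluded=failed)
        if target is None:
            break
        try:
            stage_in(job, target)
            execute(job, target)
            stage_out(job, target)
            return target.name
        except TransientError:
            failed.append(target.name)
    raise RuntimeError(f"job {job['id']} could not be completed")


# Hypothetical resources and fake callbacks that record what happened.
resources = [Resource("site-a", 64, 2), Resource("site-b", 32, 5),
             Resource("site-c", 4, 0)]
log = []


def stage_in(job, resource):
    log.append(("in", resource.name))


def execute(job, resource):
    if resource.name == "site-a":
        raise TransientError("simulated execution failure")
    log.append(("run", resource.name))


def stage_out(job, resource):
    log.append(("out", resource.name))


chosen = run_job({"id": "j1", "cores": 16}, resources, stage_in, execute, stage_out)
print(chosen, log)
```

In this run the job is first sent to the least loaded suitable site, fails there, and is then completed on the next candidate, while the site with too few cores is never considered.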

Supported interfaces

No standard interfaces for Compute Job Scheduling are known. The OGF DCIFED Working Group is chartered to address this gap, but neither interfaces nor their expected publication dates are known. Meanwhile some existing implementations of Compute Job Schedulers support simple scheduling capabilities described in OGF standards such as BES and JSDL.

The implementations provided for Compute Job Scheduling should use compatible interfaces for the batch compute capability.

Workflow

The ability to define, initiate, manage and monitor a workflow is a key capability across many user communities. Workflows are by nature highly domain-specific even though a number of properties and features are shared between them. However, the various workflow systems may have requirements that need to be supported within EGI’s core infrastructure.

Supported interfaces

EGI does not have a stance on any workflow system, or on any standardised access interfaces for workflow engines, as long as the core infrastructure defined through the UMD Capabilities provides sufficient support for domain-specific workflow engines. EGI considers workflows, especially those that go beyond simple DAG-type job trees, as a domain-specific feature. While workflows are needed across many different domains, convergence on a common set of workflow features and interfaces is seen as very unlikely in the future.
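For readers unfamiliar with the term, a simple DAG-type job tree is one in which each job only waits for a fixed set of earlier jobs. A minimal sketch using Python's standard `graphlib` module (Python 3.9 or later) shows how such a tree can be ordered into waves of jobs that may run concurrently; the job names are made up for the example.

```python
from graphlib import TopologicalSorter

# Each key is a job; its value is the set of jobs that must finish first.
dependencies = {
    "preprocess": set(),
    "simulate-a": {"preprocess"},
    "simulate-b": {"preprocess"},
    "merge": {"simulate-a", "simulate-b"},
}


def execution_waves(graph):
    """Group jobs into waves; jobs in the same wave do not depend on each other."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not a DAG
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = execution_waves(dependencies)
print(waves)
```

Anything beyond this, such as loops, conditional branches or data-driven fan-out, is where workflow systems diverge and become domain specific.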

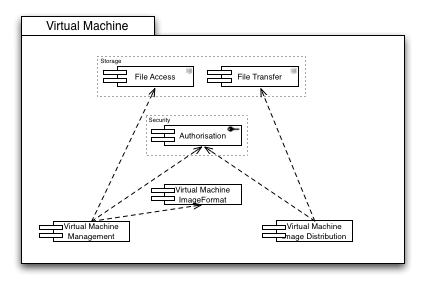

Virtualisation

Virtualisation provides powerful opportunities for alternative approaches to the provisioning of distributed computing resources. Three main capabilities are required to successfully provision virtualised resources.

Central to interoperable Virtualisation is a commonly agreed image format for the virtual machines. The Image Format Capability is therefore as important to Virtualisation as the Information Model Capability is to almost all other aspects of a distributed production infrastructure.

Managing virtual machine images requires proper access to the image repositories, which are in turn protected by access control.

Distributing image files requires proper authorisation (e.g. a distribution agent must be authorised to access an image stored in one location in order to transfer it to a different location and make it available for execution by a different user). It also requires file transfer facilities that allow the virtual machine to be instantiated in close proximity to the input and output data that the contained appliances require.

VM Management

The core functionality is for authorised users to manage the virtual machine life cycle and configuration on a remote site (e.g. start, stop, pause). Machine images would be selected from a trusted repository at the site, configured according to site policy. Together this would allow site managers to determine both who can control the virtual machines running on their sites and who generated the images used on their sites.
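A minimal sketch of this functionality, assuming a simple in-memory model: the site keeps a list of authorised users and a set of trusted images, and only permits defined life cycle transitions. The class name, state names and the `resume` action are assumptions for the example and are not taken from any EGI component.

```python
# Permitted life cycle transitions: (current state, action) -> new state.
TRANSITIONS = {
    ("stopped", "start"): "running",
    ("running", "pause"): "paused",
    ("paused", "resume"): "running",   # 'resume' is an assumed action
    ("running", "stop"): "stopped",
    ("paused", "stop"): "stopped",
}


class SiteVMManager:
    """Hypothetical per-site manager enforcing user and image policy."""

    def __init__(self, authorised_users, trusted_images):
        self.authorised_users = set(authorised_users)
        self.trusted_images = set(trusted_images)
        self.vms = {}

    def _check_user(self, user):
        if user not in self.authorised_users:
            raise PermissionError(f"{user!r} may not manage VMs on this site")

    def create(self, user, image):
        """Register a VM from a trusted image; it starts in state 'stopped'."""
        self._check_user(user)
        if image not in self.trusted_images:
            raise ValueError(f"image {image!r} is not in the trusted repository")
        vm_id = f"vm-{len(self.vms) + 1}"
        self.vms[vm_id] = {"image": image, "owner": user, "state": "stopped"}
        return vm_id

    def apply(self, user, vm_id, action):
        """Apply a life cycle action and return the resulting state."""
        self._check_user(user)
        vm = self.vms[vm_id]
        key = (vm["state"], action)
        if key not in TRANSITIONS:
            raise ValueError(f"cannot {action} a VM that is {vm['state']}")
        vm["state"] = TRANSITIONS[key]
        return vm["state"]


site = SiteVMManager(authorised_users={"alice"}, trusted_images={"base-image"})
vm = site.create("alice", "base-image")
states = [site.apply("alice", vm, a) for a in ("start", "pause", "resume", "stop")]
print(states)
```

Both policy decisions stay with the site: the user list controls who may operate virtual machines, and the trusted image set controls whose images may run.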

Supported interfaces

The OCCI WG provides extensive management capabilities for many different kinds of distributed computing management functionality. The Core specification describes the foundation of all OCCI-related renderings and extensions. The HTTP Rendering specification describes how OCCI-based management is rendered RESTfully in HTTP communication with various OCCI-based resources. Finally, the OCCI Infrastructure specification defines a standardised set of extensions, mix-ins and attributes for infrastructure resources, such as virtual machines (and even storage), that allow for standards-based Virtual Machine management functionality.
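To give a feel for the HTTP rendering, the sketch below assembles (but does not send) two requests in the header-based `text/occi` style. The scheme URIs, header names and attribute names are written as recalled from the OCCI 1.1 specifications and should be checked against them before use; the endpoint URL, resource identifier and helper function names are hypothetical.

```python
# Scheme URIs as recalled from OCCI 1.1 Infrastructure; verify before use.
OCCI_INFRA = "http://schemas.ogf.org/occi/infrastructure#"
OCCI_COMPUTE_ACTION = "http://schemas.ogf.org/occi/infrastructure/compute/action#"


def occi_create_compute(endpoint, cores, memory_gib, hostname):
    """Describe a request that asks an OCCI endpoint to create a compute resource."""
    attributes = [
        f"occi.compute.cores={cores}",
        f"occi.compute.memory={memory_gib}",
        f'occi.compute.hostname="{hostname}"',
    ]
    return {
        "method": "POST",
        "url": endpoint.rstrip("/") + "/compute/",
        "headers": {
            "Content-Type": "text/occi",
            "Category": f'compute; scheme="{OCCI_INFRA}"; class="kind"',
            "X-OCCI-Attribute": ", ".join(attributes),
        },
    }


def occi_compute_action(endpoint, resource_id, action):
    """Describe a request that triggers an action (e.g. 'stop') on a resource."""
    return {
        "method": "POST",
        "url": f"{endpoint.rstrip('/')}/compute/{resource_id}?action={action}",
        "headers": {
            "Content-Type": "text/occi",
            "Category": f'{action}; scheme="{OCCI_COMPUTE_ACTION}"; class="action"',
        },
    }


# Hypothetical endpoint and resource identifier.
create = occi_create_compute("https://occi.example.org", 2, 4.0, "worker-1")
stop = occi_compute_action("https://occi.example.org/", "1234", "stop")
print(create["url"], create["headers"]["Category"])
print(stop["url"], stop["headers"]["Category"])
```

The returned dictionaries can be handed to any HTTP client. The same pattern of kinds, attributes and actions applies to other infrastructure resources defined by the Infrastructure specification.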

VM Image Format

This capability refers to the availability of portable binary formats for Virtual Machine images that may run on the different hypervisors deployed in EGI.

Supported interfaces

The OVF (Open Virtualisation Format) from DMTF provides a standardised format for Virtual Machine images that may be deployed on many of the different virtualisation platforms available on the EGI infrastructure. Since OVF was recently published as an ANSI standard, widespread adoption is expected.

VM Image Distribution

As virtual machine images become the default approach to providing the environment for both jobs and services, increased effort is needed on building the trust model around the distribution of images. Resource providers will need a mechanism for images to be distributed, cached and trusted for execution on their sites.
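One possible building block of such a trust model is sketched below: before a cached image is executed, the site checks that it was endorsed by a party the site trusts and that its checksum still matches the endorsed one. This is only an illustration under stated assumptions; the endorsement structure, names and choice of SHA-512 are invented for the example and do not describe an existing EGI mechanism.

```python
import hashlib


def sha512_hex(data):
    """Return the SHA-512 digest of the image content as a hex string."""
    return hashlib.sha512(data).hexdigest()


def may_instantiate(name, image_bytes, endorsements, trusted_endorsers):
    """Allow execution only for unmodified images from trusted endorsers."""
    entry = endorsements.get(name)
    if entry is None:
        return False
    endorser, expected_digest = entry
    return endorser in trusted_endorsers and sha512_hex(image_bytes) == expected_digest


# Hypothetical image content, endorsement list and site policy.
original = b"pretend this is a virtual machine image"
endorsements = {"analysis-vm": ("vo-image-team", sha512_hex(original))}
trusted_endorsers = {"vo-image-team"}

accepted = may_instantiate("analysis-vm", original, endorsements, trusted_endorsers)
tampered = may_instantiate("analysis-vm", original + b"!", endorsements, trusted_endorsers)
untrusted = may_instantiate("analysis-vm", original, endorsements, {"another-team"})
print(accepted, tampered, untrusted)
```

A real deployment would additionally need the endorsement list itself to be signed and distributed securely, which is exactly the part for which no standard interface exists.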

Supported interfaces

There are no standardised interfaces available for federated distributed VM Image distribution.

Instrumentation

Remote Instrumentation

Instruments are data sources frequently encountered within e-Infrastructures. As part of a distributed computing architecture providing remote access to manage and monitor these instruments is becoming increasingly important within some communities.

Supported interfaces

There are no standardised interfaces known for the Remote Instrumentation Capability.

Client

Client Tools

Client tools aim to provide an access layer that lets end-users use the Grid infrastructure efficiently, integrating either seamlessly or with minimal effort into their daily research work. Client tools range from command line clients to more complex graphical user interfaces geared towards the use of the Grid resources directly or indirectly.

Supported interfaces

No supported interfaces are available or necessary for the Client Tools Capability. However, Client tools are consumers of other interfaces and APIs provided by the exposed Grid resources, and must therefore be carefully evaluated when interfaces change at a lower level.

Client API

Instead of addressing interface heterogeneity on the service level, an alternative approach proposes the abstraction of distributed services on the client side, providing a common interface to client application developers. Adopting a client API may protect domain specific application developers from evolving middleware by maintaining compatibility with a client side API for the most common Grid Use Cases rather than keeping track of and synchronising middleware interfaces.

Supported interfaces

OGF provides the SAGA API specification as an approach to a common, lightweight and simple API for client-side abstraction of distributed computing resources. The SAGA API itself maps semantically very well onto interfaces that are standardised on a lower level of the middleware stack, such as GLUE, BES, DRMAA, GridFTP, and several others. In general, SAGA can be implemented on any DCI that provides (a subset of) SAGA semantic capabilities.
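The idea of client-side abstraction can be shown with a small adaptor sketch. It is deliberately not the SAGA API: the class names, method names and URL schemes below are hypothetical, and the backends are in-memory stand-ins rather than real middleware bindings.

```python
from abc import ABC, abstractmethod


class JobAdaptor(ABC):
    """What every middleware binding must offer to the common client API."""

    @abstractmethod
    def submit(self, executable, arguments):
        """Start a job and return a middleware-specific identifier."""

    @abstractmethod
    def state(self, job_id):
        """Return the state of a previously submitted job."""


class InMemoryAdaptor(JobAdaptor):
    """Stand-in for a real binding; it marks every job as done immediately."""

    def __init__(self, prefix):
        self.prefix = prefix
        self.jobs = {}

    def submit(self, executable, arguments):
        job_id = f"{self.prefix}-{len(self.jobs) + 1}"
        self.jobs[job_id] = "Done"
        return job_id

    def state(self, job_id):
        return self.jobs[job_id]


class JobService:
    """The single interface that application code is written against."""

    def __init__(self, url, adaptors):
        scheme = url.split("://", 1)[0]
        if scheme not in adaptors:
            raise ValueError(f"no adaptor registered for scheme {scheme!r}")
        self._adaptor = adaptors[scheme]

    def run(self, executable, *arguments):
        return self._adaptor.submit(executable, list(arguments))

    def state(self, job_id):
        return self._adaptor.state(job_id)


adaptors = {"bes": InMemoryAdaptor("bes"), "drmaa": InMemoryAdaptor("drmaa")}


def application(url):
    """Application code: identical for every backend, only the URL differs."""
    service = JobService(url, adaptors)
    job_id = service.run("/bin/hostname")
    return job_id, service.state(job_id)


results = [application("bes://site-a.example.org"),
           application("drmaa://cluster.example.org")]
print(results)
```

When a middleware interface changes, only the corresponding adaptor needs to be updated, while application code written against the common interface stays unchanged.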