Federated Cloud Architecture


Cloud Interfaces

To federate a cloud system, a common interface must be defined for several functions. Each is described below; together they define how a user of the service interacts with it.

VM management interface: OCCI

The Open Cloud Computing Interface (OCCI) is a RESTful protocol and API designed to facilitate interoperable access to, and query of, cloud-based resources across multiple resource providers and heterogeneous environments. The formal specification is maintained and actively worked on by OGF’s OCCI-WG; for details see http://occi-wg.org/. The intended deployment is depicted in Figure 1.

OCCI’s specification consists of three basic elements, each covered in a separate specification document:

- OCCI Core describes the formal definition of the OCCI Core Model [1].

- OCCI HTTP Rendering defines how to interact with the OCCI Core Model using the RESTful OCCI API [2]. The document defines how the OCCI Core Model can be communicated and thus serialised using the HTTP protocol.

- OCCI Infrastructure contains the definition of the OCCI Infrastructure extension for the IaaS domain [3]. The document defines additional resource types, their attributes and the actions that can be taken on each resource type.

A detailed description of the abovementioned elements of the specification is outside the scope of this document. A simplified description is as follows.

OCCI Core defines the base types Resource, Link, Action and Mixin. Resource represents all OCCI objects that can be manipulated and used in any conceivable way. In general, it represents a provider’s resources such as images (Storage Resource), networks (Network Resource), virtual machines (Compute Resource) or available services. Link represents a base association between two Resource instances; it indicates a generic connection between a source and a target. The most common real-world examples are Network Interface and Storage Link, which connect Network and Storage Resources to a Compute Resource. Action defines an operation that may be invoked, tied to a specific Resource instance or a collection of Resource instances. In general, Action is designed to perform complex high-level operations that change the state of the chosen Resource, such as virtual machine reboot or migration. The concept of mixins is used to facilitate extensibility and provide a way to define provider-specific features.
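As an illustration of how these base types fit together, the following minimal sketch models a Resource with one Kind and optional Mixins and renders it as HTTP headers in the style of the OCCI HTTP Rendering. The class names, the `example_os` mixin and its scheme URL are hypothetical and chosen only for this example; it is not the rOCCI implementation.

```python
from dataclasses import dataclass, field

# Scheme used by the OCCI Infrastructure extension for compute, network, storage.
OCCI_INFRA = "http://schemas.ogf.org/occi/infrastructure#"


@dataclass
class Category:
    """Identifier of a Kind or Mixin: a term within a scheme."""
    term: str
    scheme: str
    cls: str  # "kind" or "mixin"

    def render(self) -> str:
        return f'{self.term}; scheme="{self.scheme}"; class="{self.cls}"'


@dataclass
class Resource:
    """An OCCI Resource: one Kind, optional Mixins and a set of attributes."""
    kind: Category
    mixins: list = field(default_factory=list)
    attributes: dict = field(default_factory=dict)

    def to_headers(self) -> list:
        """Render the resource as (header, value) pairs."""
        headers = [("Category", c.render()) for c in [self.kind, *self.mixins]]
        for name, value in self.attributes.items():
            # String values are quoted, numeric values are written bare.
            rendered = f'"{value}"' if isinstance(value, str) else str(value)
            headers.append(("X-OCCI-Attribute", f"{name}={rendered}"))
        return headers


compute = Resource(
    kind=Category("compute", OCCI_INFRA, "kind"),
    # Hypothetical provider-specific mixin selecting an OS template.
    mixins=[Category("example_os", "http://example.org/occi/os_tpl#", "mixin")],
    attributes={"occi.compute.cores": 2, "occi.core.title": "test-vm"},
)
for header, value in compute.to_headers():
    print(f"{header}: {value}")
```

The mixin entry shows where a provider-specific feature would be attached without changing the Resource type itself.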

In the Federated Cloud environment, OCCI is deployed as a variety of platform-specific implementations. An ongoing EGI-InSPIRE mini-project[4] aims to provide a common implementation to further improve interoperability.

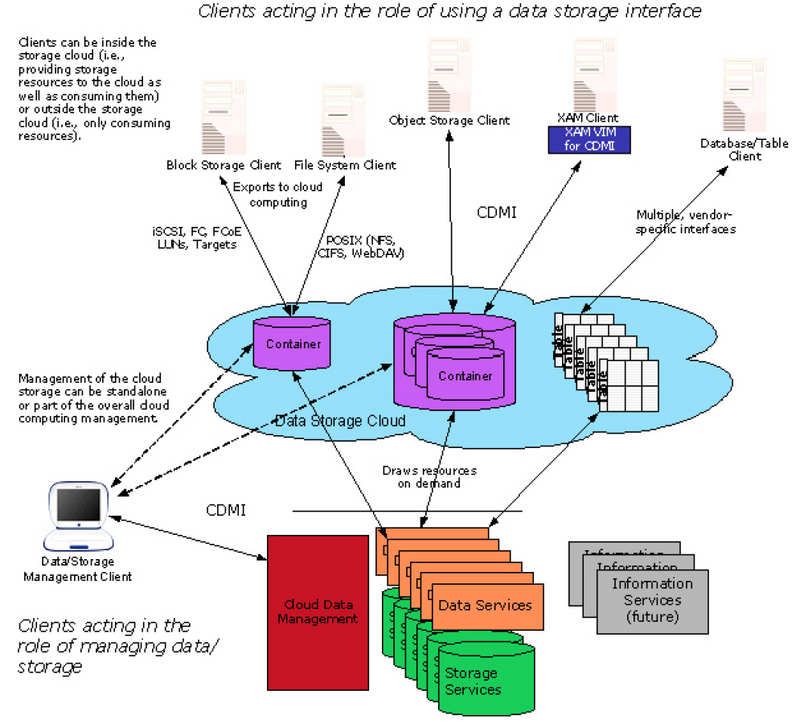

Data management interface: CDMI

The SNIA Cloud Data Management Interface (CDMI) defines a RESTful open standard for operations on storage objects. Semantically the interface is very close to AWS S3 and MS Azure Blob, but is more open and flexible for implementation.

Figure 2 shows the conceptual model of a cloud storage system. CDMI offers clients a way to operate on both a storage management system and single data items. The exact level of support depends on the concrete implementation and is exposed to the client as part of the protocol.

The design of the protocol is aimed at both flexibility and efficiency. Certain heavyweight operations, e.g. blob download, can also be performed with a pure HTTP client to make use of the existing ecosystem of tools. CDMI is built around the concept of Objects, which vary in supported operations and metadata schema. Each Object has an ID, which is unique across all CDMI deployments.

CDMI Objects

Four object types are most relevant in the context of EGI’s Federated Cloud:

- Data object: Abstraction for a file with rich metadata.

- Container: Abstraction for a folder. Export to non-HTTP protocols is performed at the container level. A container may hold other containers.

- Capability: Exposes information about a feature set of a certain object.

- Domain: Deployment specific information.

Detection of capabilities

CDMI supports partial implementation of the standard by defining optional features and parameters. To discover what functionality is supported by a specific implementation, a CDMI client can issue a GET request to a fixed URL: /cdmi_capabilities.
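A minimal sketch of such a capability lookup is shown below. It only builds the request and interprets a response body; nothing is sent over the network. The host name, the specification version value and the sample response are assumptions made for illustration, and a real deployment may require authentication or a different version string.

```python
import json
import urllib.request


def capabilities_request(base_url: str) -> urllib.request.Request:
    """Build (but do not send) a GET request for the capabilities object."""
    return urllib.request.Request(
        base_url.rstrip("/") + "/cdmi_capabilities",
        headers={
            "Accept": "application/cdmi-capability",
            # Assumed version; use the one your server implements.
            "X-CDMI-Specification-Version": "1.0.2",
        },
        method="GET",
    )


def supported(capabilities_json: str) -> set:
    """Return the names of the capabilities reported as "true"."""
    doc = json.loads(capabilities_json)
    return {k for k, v in doc.get("capabilities", {}).items() if v == "true"}


req = capabilities_request("https://cdmi.example.org")
# Hypothetical, shortened response body.
sample = '{"capabilities": {"cdmi_domains": "true", "cdmi_queues": "false"}}'
print(req.get_method(), req.full_url)
print(supported(sample))
```

A client would typically run this check once per endpoint and enable optional features only when the corresponding capability is present.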

Export protocol

Attachment of storage items to a VM can often be performed more efficiently using protocols like NFS or iSCSI. CDMI supports exposing this information via container metadata. A client can make use of this information to attach a storage item to a VM via OCCI.

More information about the CDMI standard can be found at http://cdmi.sniacloud.com/. An ongoing EGI-InSPIRE mini-project aims to provide an implementation of CDMI that integrates with OCCI-based infrastructure and supports the use cases needed in a Federated Cloud.

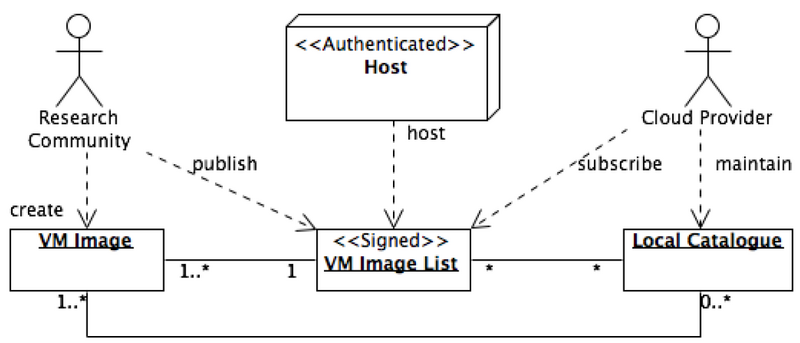

VM Image management

In a distributed, federated Cloud infrastructure, users often need to manage and distribute their VM Images efficiently across multiple Cloud resource providers. The VM Image management subsystem provides the user with an interface into the EGI Cloud Infrastructure Platform to notify supporting resource providers of the existence of a new or updated VM Image. Sites then examine the provided information and, depending on their decision, pool the new or updated VM Image locally for instantiation.

This concept introduces a number of capabilities into the EGI Cloud Infrastructure Platform:

- VM Image lifecycle management – Apply best practices of Software Lifecycle Management at scale across EGI

- Automated VM Image distribution – Publish VM images (or updates to existing images) once, while they are automatically distributed to the Cloud resource providers that support the publishing research community with Cloud resources.

- Asynchronous distribution mechanism – Publishing images and pooling these locally are intrinsically decoupled, allowing federated Resource Providers to apply local, specific processes transparently before VM images are available for local instantiation, for example:

- Provider-specific VM image endorsement policies – Not all federated Cloud resource providers will be able to enforce strict perimeter protection in their Cloud infrastructure as risk management to contain potential security incidents related to VM images and instances. Sites may implement a specific VM Image inspection and assessment policy prior to pooling the image for immediate instantiation.

Two command-line tools provide the principal functionality of this subsystem: “vmcaster” to publish VM image lists and “vmcatcher” to subscribe to changes to these lists.

Research Communities ultimately create and update VM Images (or delegate this functionality). The Images themselves are stored in Appliance repositories that are provided and managed elsewhere, typically by the Research Community itself.

A representative of the Research Community then generates a VM Image list (or updates an existing one) and publishes it on an authenticated host, which is typically using a host certificate signed by a CA included in the EGI Trust Anchor profile.

Federated Cloud Resource Providers then subscribe to changes in VM Image lists by regularly downloading the list from the authenticated host and comparing it against local copies. New and updated VM Images are downloaded from the appliance repository referenced in the VM Image list into a local staging cache and, where required, made available for further examination and assessment.

Ultimately, Cloud resource Providers will make VM Images available for immediate instantiation by the Research Community.
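The comparison step of the subscription workflow can be sketched as follows. This is not how vmcatcher is implemented; it assumes, purely for illustration, that an image list has already been verified and reduced to a mapping from image identifier to version string.

```python
def diff_image_lists(local: dict, published: dict) -> dict:
    """Compare two mappings of image identifier -> version string.

    Returns the identifiers that are new in the published list and those
    whose version differs from the local copy.
    """
    new = sorted(i for i in published if i not in local)
    updated = sorted(
        i for i in published if i in local and published[i] != local[i]
    )
    return {"new": new, "updated": updated}


# Hypothetical identifiers and versions.
local_copy = {"img-a": "1.0", "img-b": "2.1"}
downloaded = {"img-a": "1.1", "img-b": "2.1", "img-c": "0.9"}
print(diff_image_lists(local_copy, downloaded))
```

Images reported as new or updated would then be fetched into the staging cache and passed to the site's endorsement process before being offered for instantiation.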

Core services interfaces

Alongside implementations of cloud specific interfaces it is necessary to enable the connection of these new service types with the core EGI services of Accounting, Monitoring and Service Discovery.

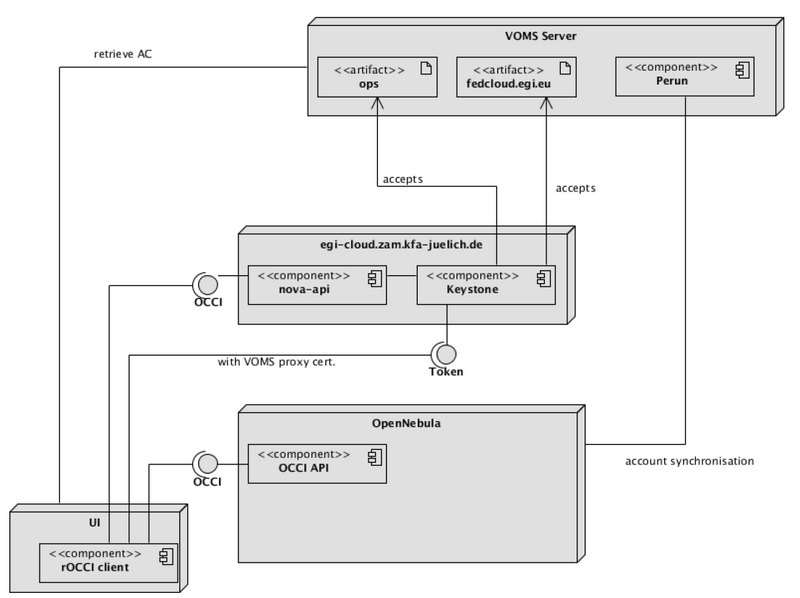

Virtual Organisation Management & AAI: VOMS

Within EGI, research communities are generally identified and, for the purpose of using EGI resources, managed through “Virtual Organisations” (VOs). Naturally, support for VOs is also compulsory for the EGI Cloud Infrastructure Platform. For the purpose of the Federated Clouds task, a single VO “fedcloud.egi.eu” is used to provide access to the task’s testbed. Additionally, for monitoring purposes, Cloud Resource Providers are required to provide access to the “ops” VO to properly integrate with the EGI Core Infrastructure Platform.

Integration modules developed by the task members are available for each Cloud Management Framework. Configuring these modules into a provider’s cloud installation allows members of these VOs to access the cloud. Figure 3 shows the main components involved. The user retrieves a VOMS attribute certificate from the VOMS server of the desired VO (currently, the Perun server for the “fedcloud.egi.eu” VO) and thus creates a local VOMS proxy certificate. The VOMS proxy certificate is used in subsequent calls to the OCCI endpoints of OpenNebula or OpenStack using the rOCCI client tool. The rOCCI client talks directly to OpenNebula endpoints, which map the certificate and VO information to local users. Local users need to have been created in advance, which is triggered by regular synchronizations of the OpenNebula installation with Perun.

To access an OpenStack OCCI endpoint, the rOCCI client first needs to retrieve a Keystone token from OpenStack Keystone. The retrieval is transparent to the user and automated in the workflow of accessing the OpenStack OCCI endpoint. It is triggered by the OCCI endpoint rejecting invalid requests and sending back an HTTP header referencing the Keystone URL for authentication. Users are generated on the fly in Keystone, so it does not need regular synchronization with the VO management server Perun (see below).
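The redirection step can be sketched as a small parser for the header returned by the OCCI endpoint. The header layout used here (`Keystone uri='...'`, as might be carried in a `WWW-Authenticate` header) is an assumption for illustration; the text above only states that a header references the Keystone URL, so check the actual response of your endpoint.

```python
import re


def keystone_url_from_challenge(header_value: str):
    """Extract the Keystone URL from an authentication challenge.

    Assumed format: Keystone uri='https://keystone.example.org:5000/'
    Returns None when the header does not reference Keystone.
    """
    match = re.search(r"Keystone\s+uri=['\"]([^'\"]+)['\"]", header_value)
    return match.group(1) if match else None


# Hypothetical header value from a rejected request.
challenge = "Keystone uri='https://keystone.example.org:5000/'"
print(keystone_url_from_challenge(challenge))
```

A client would request a token from the extracted URL using its VOMS proxy and then repeat the original OCCI call with that token.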

Information about how to configure VOMS support is available for each framework:

- OpenStack Keystone: generic information can be found at http://ifca.github.io/keystone-voms/. Information specific to FCTF is located at https://wiki.egi.eu/wiki/Federated_AAI_Configuration#OpenStack.

- OpenNebula: the information can be found at https://wiki.egi.eu/wiki/Fedcloud-tf:WorkGroups:_Federated_AAI:OpenNebula.

- StratusLab: multiple authentication mechanisms are provided at once. They are documented at http://stratuslab.eu//documentation/2012/10/07/docs-syadmin-auth.html.

Because each of these technology providers has developed its own system, the functionality provided by the different services, and the way in which they use VOMS credentials, differ slightly.

Information on how to add support for a new Virtual Organisation on the EGI Federated Cloud can be found here.

Information discovery: BDII

Users and service managers need tools to retrieve information about the whole infrastructure and filter the returned data to select relevant subsets of the infrastructure that fulfil their requirements. To achieve this target the information about the services in the infrastructure must be structured in a uniform schema and published by a common set of services usable both by automatic tools and human users.

At the time of writing the Cloud federation platform is maintaining its own, separate Information discovery system. Even though it is using the GLUE2 schema, some extensions and tweaks are not compatible with the canonical GLUE 2 specification. Therefore, Cloud Resource providers maintain local LDAP endpoints (usually deployed as a resource BDII) aggregated into a Cloud Platform Information Discovery service, which in turn allows access to the data using LDAP v3.

The current standard deployed in EGI for the implementation of the common information system is the Berkeley Database Information Index (BDII). It is software based on an LDAP server, and it is deployed in a hierarchical structure distributed over the whole infrastructure. The information system is structured in three levels. First, the grid or cloud services publish their information (e.g. specific capabilities, total and available capacity, or the user communities supported by the service) using an OGF-recommended standard format, GLUE2. Second, the information published by the services is collected by a Site-BDII, a service deployed at almost every site in EGI. Third, the Site-BDIIs are queried by the Top-BDIIs, a national or regional level of the hierarchy, which contain the information about all the sites available in the infrastructure and their services. The federated cloud activity publishes dynamic service data using the same configuration of BDII services as is currently deployed in EGI. NGIs usually provide an authoritative instance of a Top-BDII, but every Top-BDII, if properly configured, should contain the same set of information.

Users and tools can use the Top-BDII to look for the services that provide the capabilities and the resources to run their activities. A typical example of Top-BDII query is retrieving the list of services that support a specific user community or VO.
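A query of this kind can be sketched by building an LDAP search filter and the matching `ldapsearch` command line. The object class and attribute names follow the GLUE2 LDAP rendering; the `VO:` prefix in the policy rule, the port 2170, the base `o=glue` and the host name are assumptions based on common BDII conventions and may differ on a given deployment.

```python
def escape_filter_value(value: str) -> str:
    """Escape characters that are special in LDAP search filters (RFC 4515)."""
    replacements = {
        "\\": r"\5c", "*": r"\2a", "(": r"\28", ")": r"\29", "\0": r"\00",
    }
    return "".join(replacements.get(ch, ch) for ch in value)


def vo_policy_filter(vo: str) -> str:
    """Filter matching access policies that admit the given VO."""
    rule = "VO:" + escape_filter_value(vo)
    return f"(&(objectClass=GLUE2AccessPolicy)(GLUE2PolicyRule={rule}))"


def ldapsearch_command(host: str, vo: str, port: int = 2170) -> list:
    """Argument list for an anonymous ldapsearch against a BDII."""
    return [
        "ldapsearch", "-x", "-LLL",
        "-H", f"ldap://{host}:{port}",
        "-b", "o=glue",
        vo_policy_filter(vo),
    ]


# Hypothetical Top-BDII host name.
print(" ".join(ldapsearch_command("top-bdii.example.org", "fedcloud.egi.eu")))
```

The matching policy entries point to the endpoints they apply to, which a tool can then follow to obtain the service URLs.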

Technical implementation of the federated cloud information system

Currently the Federated Cloud information system is built starting from the resource provider level. Every resource provider is required to deploy an LDAP server publishing the information about their services, structured using the GLUE schema. OpenLDAP is the recommended choice because it is available on almost all *nix systems. In addition, OpenLDAP is the server used by the gLite BDIIs, so the configuration files used for the GRIS (Grid Resource Information Service) or the GIIS (Grid Index Information Service) can easily be reused.

Cloud services do not yet implement information providers, so the information is published directly by a site-level information provider, comparable to a Site-BDII in the structure of the information published. This solution is considered acceptable because a single resource provider usually deploys fewer cloud services than a grid site.

The LDIF file to be loaded in the local LDAP server is generated by a prototype custom script, and the information published is the following:

- Cloud computing resources

  - Service endpoint

  - Capabilities provided by the service, such as virtual machine management or snapshot taking. The labels that identify the capabilities are agreed within the taskforce.

  - Interface: the type of interface (e.g. webservice or webportal) and the interface name and version, for example OCCI 1.2.0

  - User authentication and authorization profiles supported by the service, e.g. X.509 certificates

- Virtual machine images made available by the cloud provider

  - Operating system and other environment configuration details

  - Maximum number of cores and amount of physical memory that can be allocated to a single virtual machine

Clearly, multiple virtual machine types can be associated to a single cloud service. There are no limitations in the number of services published by a resource centre either. Currently the information published is modelled using the latest GLUE2.0 schema definition. An extension of the GLUE schema is under development to address the specific requirements of Cloud resources and add information about storage and network services.
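To make the published structure more concrete, the sketch below renders one endpoint entry as LDIF. It is not the prototype script mentioned above. The attribute names come from the GLUE2 LDAP rendering, while the DN layout, identifiers, URL and capability labels are hypothetical, and a real entry needs further mandatory attributes.

```python
def ldif_entry(dn: str, object_class: str, attributes: dict) -> str:
    """Render a single LDIF entry; list values become repeated attributes.

    Values are written verbatim, so this sketch does not handle values that
    LDIF requires to be base64-encoded.
    """
    lines = [f"dn: {dn}", f"objectClass: {object_class}"]
    for name, values in attributes.items():
        if not isinstance(values, (list, tuple)):
            values = [values]
        for value in values:
            lines.append(f"{name}: {value}")
    return "\n".join(lines) + "\n"


# Hypothetical identifiers and capability labels.
endpoint = ldif_entry(
    dn="GLUE2EndpointID=occi.example.org,GLUE2ServiceID=cloud.example.org,o=glue",
    object_class="GLUE2Endpoint",
    attributes={
        "GLUE2EndpointID": "occi.example.org",
        "GLUE2EndpointURL": "https://occi.example.org:11443/",
        "GLUE2EndpointInterfaceName": "OCCI",
        "GLUE2EndpointInterfaceVersion": "1.2.0",
        "GLUE2EndpointCapability": ["example.vm.management", "example.vm.snapshot"],
    },
)
print(endpoint)
```

One such entry would be generated per service endpoint, alongside entries describing the available virtual machine images and sizes.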

The EGI Federated Cloud Task deploys a central Top-BDII that automatically pulls the information from the local LDAP servers of the resource providers. This service can be used as a single entry point to query for all the resource centres supported by the test-bed, by users or other automatic tools.

Central service registry: GOCDB

EGI’s central service catalogue is used to catalogue the static information of the production infrastructure topology. The service is provided using the GOCDB tool that is developed and deployed within EGI. To allow Resource Providers to expose Cloud resources to the production infrastructure, a number of new service types were added to GOCDB:

- eu.egi.cloud.accounting

- eu.egi.cloud.information.bdii

- eu.egi.cloud.storage-management.cdmi

- eu.egi.cloud.vm-management.occi

- eu.egi.cloud.vm-metadata.marketplace

Until EGI integrates federated Cloud resources into production, all registered Cloud resources are maintained in test-bed mode to protect the production infrastructure from side effects originating from the task’s federated Clouds test-bed.

Monitoring: SAM

The SAM (Service Availability Monitoring) system is a framework consisting of:

- Nagios monitoring system (https://www.nagios.org)

- Custom databases for topology, probe descriptions and test results

- Web interface MyWLCG/MyEGI (https://tomtools.cern.ch/confluence/display/SAM/MyWLCG)

Probes to check functionality and availability of services must be provided by service developers. More information on SAM can be found at https://wiki.egi.eu/wiki/SAM. The current set of probes used for monitoring cloud resources consists of:

- OCCI probe: Creates an instance of a given image by using OCCI and checks its status

- BDII probe: Basic LDAP check that tries to connect to the cloud BDII

- Accounting probe: Checks if the cloud resource is publishing data to the Accounting repository

- TCP checks: Basic TCP checks used for CDMI services.
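A basic TCP check of the kind listed above can be sketched as follows. This is not the probe shipped with SAM; it only shows the idea of attempting a connection and mapping the outcome to the exit codes used by Nagios plugins (0 for OK, 2 for CRITICAL). The example runs against a temporary local listener so that it does not depend on any external service.

```python
import socket


def tcp_check(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def nagios_status(reachable: bool) -> tuple:
    """Map the check result to a Nagios plugin exit code and message."""
    return (0, "OK - port reachable") if reachable else (2, "CRITICAL - port unreachable")


# Demonstration against a temporary listener on the loopback interface.
listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
listener.bind(("127.0.0.1", 0))
listener.listen(1)
demo_port = listener.getsockname()[1]
print(nagios_status(tcp_check("127.0.0.1", demo_port, timeout=2.0)))
listener.close()
```

A real probe would exit with the returned code so that Nagios can record the service state.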

A central SAM instance specific to the activities of the EGI Federated Clouds Task has been deployed for monitoring the test bed (https://cloudmon.egi.eu/nagios). The available probes are still in flux; once finalized, they will be included in the official SAM release. Adding probes to the official SAM release will follow the procedure “Adding new probes to SAM” (https://wiki.egi.eu/wiki/PROC07).

Accounting

To account for resource usage across the resource providers the following have been defined:

- The particular elements or values to be accounted for;

- Mechanisms for gathering and publishing accounting data to a central accounting repository;

- How accounting data will be displayed by the EGI Accounting Portal.

The EGI Federated Clouds Task Usage Record is based on the OGF Usage Record format[5], and extends it where necessary. It defines the data elements that resource providers should send to the central Cloud Accounting repository. These elements are as follows:

| Key | Value | Description | Mandatory |
|---|---|---|---|
| VMUUID | String | Virtual Machine's Universally Unique IDentifier | yes |
| Sitename | String | Sitename, e.g. GOCDB Sitename | yes |
| MachineName | String | VM Id | |
| LocalUserId | String | Local username | |
| LocalGroupId | String | Local groupname | |
| GlobalUserName | String | User's X509 DN | |
| FQAN | String | User's VOMS attributes | |
| Status | String | Completion status - started, completed, suspended | |
| StartTime | int | Must be set if Status = started (epoch time) | |
| EndTime | int | Must be set if Status = completed (epoch time) | |
| SuspendDuration | int | Set when Status = suspended (seconds) | |
| WallDuration | int | Wall-clock time used (seconds) | |
| CpuDuration | int | CPU time consumed (seconds) | |
| CpuCount | int | Number of CPUs allocated | |
| NetworkType | String | Description | |
| NetworkInbound | int | GB received | |
| NetworkOutbound | int | GB sent | |
| Memory | int | Memory allocated to the VM (MB) | |
| Disk | int | Disk allocated to the VM (GB) | |
| StorageRecordId | String | Link to associated storage record | |
| ImageId | String | VMCATCHER_EVENT_DC_IDENTIFIER for images registered in AppDB or os_tpl mixin for images not registered in AppDB | |
| CloudType | String | e.g. OpenNebula, OpenStack | |

Scripts have been provided for the OpenNebula and OpenStack implementations to retrieve the required accounting data in this format, ready to be sent to the Cloud Accounting Repository. These scripts are available from:

- OpenNebula – https://github.com/EGI-FCTF/opennebula-cloudacc

- OpenStack – https://github.com/EGI-FCTF/osssm

- StratusLab – identical to OpenNebula

- WNoDeS – internal WNoDeS component

The APEL SSM (Secure STOMP Messenger) package is provided by STFC for resource providers to send their messages to the central accounting repository. It is written in Python and uses the STOMP protocol. The messages contain cloud accounting records as defined above, in the following format (example data added), where %% is the record delimiter:

    APEL-cloud-message: v0.2
    VMUUID: https://cloud.cesga.es:3202/compute/47f74797-e9c9-46d7-b28d-5f87209239eb 2013-02-25 17:37:27+00:00
    SiteName: CESGA
    MachineName: one-2421
    LocalUserId: 19
    LocalGroupId: 101
    GlobalUserName: NULL
    %%
    ...another cloud record...
    %%

The OpenNebula and OpenStack scripts produce the messages in the correct format, ready to be sent using the SSM package.
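The message layout can be illustrated with a minimal serialiser. This is a sketch of the format shown above, not the code used by the OpenNebula or OpenStack scripts; writing missing values as `NULL` is an assumption inferred from the example record, and the function name is hypothetical.

```python
HEADER = "APEL-cloud-message: v0.2"
RECORD_DELIMITER = "%%"


def render_message(records: list) -> str:
    """Serialise accounting records as "Key: value" lines, one block per record."""
    lines = [HEADER]
    for record in records:
        for key, value in record.items():
            # Assumption: unset fields are written as the literal NULL.
            lines.append(f"{key}: {'NULL' if value is None else value}")
        lines.append(RECORD_DELIMITER)
    return "\n".join(lines) + "\n"


# Field values taken from the example message above.
record = {
    "SiteName": "CESGA",
    "MachineName": "one-2421",
    "LocalUserId": 19,
    "LocalGroupId": 101,
    "GlobalUserName": None,
}
print(render_message([record]))
```

The resulting text would be written to the SSM outgoing queue, from where SSM sends it to the message broker.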

The SSM package can be downloaded from https://github.com/apel/ssm/releases

Detail about configuring SSM and publishing records may be found here: https://wiki.egi.eu/wiki/Fedcloud-tf:WorkGroups:Scenario4#Publishing_Records

SSM utilizes the network of EGI message brokers and is run on both the Cloud Accounting server at STFC and on a client at the Resource Provider site. The SSM running on the Cloud Accounting server receives any messages sent from the Resource Provider SSMs and they are stored in an “incoming” file system.

A Record loader package also runs on the Cloud Accounting server and checks the received messages and inserts the records contained in the message into the MySQL database.

A Cloud Accounting Summary Usage Record has also been defined. Summaries are created daily from all the accounting records received from the Resource Providers and are sent to the EGI Accounting Portal. The EGI Accounting Portal also runs SSM to receive these summaries and the Record loader package to load them into a MySQL database storing the cloud accounting summaries.

The EGI Accounting Portal provides a web page displaying different views of the Cloud Accounting data received from the Resource Providers: http://accounting-devel.egi.eu/cloud.php

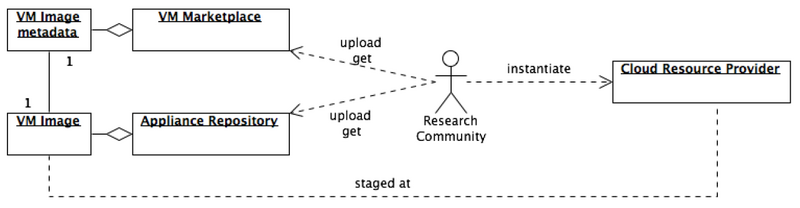

Image metadata publishing & repository

The Task uses the appliance repository and marketplace developed by StratusLab as repositories for images and their metadata. IaaS providers endorse images that are suitable for their infrastructure by signing their metadata and uploading them to the marketplace (https://marketplace.egi.eu), and make the images available in either the EGI appliance repository (https://appliance-repo.egi.eu) or their local appliance repository. The user is then able to browse the metadata for suitable images to instantiate in one of the federated IaaS infrastructures.

References

- R. Nyren, A. Edmonds, A. Papaspyrou, and T. Metsch, “Open Cloud Computing Interface - Core,” GFD-P-R.183, April 2011. [Online]. Available: http://ogf.org/documents/GFD.183.pdf

- T. Metsch and A. Edmonds, “Open Cloud Computing Interface - HTTP Rendering,” GFD-P-R.185, April 2011. [Online]. Available: http://ogf.org/documents/GFD.185.pdf

- “Open Cloud Computing Interface - Infrastructure,” GFD-P-R.184, April 2011. [Online]. Available: http://ogf.org/documents/GFD.184.pdf

- [1]

- https://wiki.egi.eu/wiki/Fedcloud-tf:Technology:Architecture#Accounting