HBP


Community Information

Community Name

Human Brain Project

Community Short Name

HBP

Community Website

http://www.humanbrainproject.eu

Community Description

The aim of the Human Brain Project (HBP) is to accelerate our understanding of the human brain by integrating global neuroscience knowledge and data into supercomputer-based models and simulations. This will be achieved, in part, by engaging the European and global research communities using six collaborative ICT platforms: Neuroinformatics, Brain Simulation, Medical Informatics, High Performance Computing, Neuromorphic Computing and Neurorobotics.

Community Objectives

In the United States, the Brain Research through Advancing Innovative Neurotechnologies (BRAIN) Initiative aims to accelerate the development of new technologies to create large-scale measurements of the structure and function of the brain. The aim is to enable researchers to acquire, analyze and disseminate massive amounts of data about the dynamic nature of the brain, from cells to circuits to the whole brain.

For the HBP Neuroinformatics Platform, a key capability is to deliver multi-level brain atlases that enable the analysis and integration of many different types of data into common semantic and spatial coordinate frameworks. Because the data to be integrated is large and widely distributed, an infrastructure that enables “in place” visualization and analysis, with data services co-located with data storage, is required. Providing a standard set of services for such large data sets will enhance data sharing and collaboration in neuroscience initiatives around the world.

Main Contact Institutions

Center for Brain Simulation, Campus Biotech chemin des Mines, 9, CH-1202 Geneva, Switzerland

Main Contact

- Sean Hill (sean.hill@humanbrainproject.eu, +41 21 693 96 78)

- Jeff Muller (jeffrey.muller@epfl.ch)

- Catherine Zwahlen (catherine.zwahlen@epfl.ch)

- Stiebrina Dace (dace.stiebrina@epfl.ch, Secretary)

Science Viewpoint

Scientific challenges

Open Data Challenges in HBP

- Not black and white, not just OPEN or CLOSED, need granularity to be explicit about what is open, when and for what purpose, then gradually develop the culture of loosening these restrictions.

- Willing to share data but expensive to produce (intellectual capital-experimental design, acquisition cost, time); many possible uses (multiple research questions) for large datasets; currently, reward currencies are intellectual advances, publications and citations; No clear reward or motivation for providing data completely free of any constraint

- Willing to share data, need to provide incentives for contributions; establish common data use agreements; adopt common metadata, vocabularies, data formats/services; streamlining deployment of infrastructure to data sources (heterogeneous data access methods/ authentication/authorization); deploying data-type specific services attached to repositories

Objectives

Use Story I – Remote interactive multiresolution visualization of large volumetric datasets

Large amounts of image stacks or volumetric data are produced daily at brain research sites around the world. This includes human brain imaging data in clinics, connectome data in research studies, whole brain imaging with light-sheet microscopy and tissue clearing methods or micro-optical sectioning techniques, two-photon imaging, array tomography, and electron beam microscopy.

A key challenge in making such data available is to make it accessible without moving large amounts of data. Typical dataset sizes can reach the terabyte range, while a researcher may want to view or access only a small subset of the entire dataset.

The ability to easily deploy an active repository that combines large data storage with a set of computational services for accessing and viewing large volume datasets would address a key challenge in modern neuroscience and in other domains.

Use Story II – Feature extraction and analysis of large volumetric datasets

Neurons are essential building blocks of the brain and key to its information processing ability. The three-dimensional shape of a neuron plays a major role in determining its connectivity, integration of synaptic input and cellular firing properties. Thus, characterization of the 3D morphology of neurons is fundamentally important in neuroscience and related applications. Digitization of the morphology of neurons and other tree-shaped biological structures (e.g. glial cells, brain vasculatures) has been studied for the last 30 years. Recent big neuroscience initiatives worldwide, e.g. the USA’s BRAIN Initiative and Europe’s Human Brain Project, highlight the importance of understanding the types of cells in nervous systems. Current reconstruction techniques (both manual and automated) show tremendous variability in the quality and completeness of the resulting morphology. Yet building a large library of high-quality 3D cell morphologies is essential to comprehensively cataloging the types of cells in a nervous system. Furthermore, enabling comparisons of neuron morphologies across species will provide additional sources of insight into neural function.

Automated reconstruction of neuron morphology has been studied by many research groups. Methods including fitting tubes or other geometrical elements, ray casting, spanning tree, shortest paths, deformable curves, pruning, etc., have been proposed. Commercial software packages such as Neurolucida have also started to include some automated neuron reconstruction methods. The DIADEM Challenge (http://diademchallenge.org/), a worldwide neuron reconstruction contest, was organized in 2010 by several major institutions as a way to stimulate progress and attract new computational researchers to join the technology development community.

A new effort, called BigNeuron (http://www.alleninstitute.org/bigneuron) aims to bring the latest automated neuron morphology reconstruction algorithms to bear on large image stacks from around the world.

The second use story would entail deploying Vaa3D (www.vaa3d.org) as an additional service to the active repository described in Use Story I. Vaa3D is open source and provides a plugin architecture into which any type of neuron reconstruction algorithm can be adapted. The second use story would require additional computational resources (and could benefit from multithreaded and parallel compute resources) for the reconstruction process.

In this use story, a neuroscientist user would provide input parameters via a web service to a Vaa3D instance, which would trace any recognized neuron structures using a selected algorithm. The output file would be returned via the web service.

Expectations

Use Story I would require:

- A multi-terabyte storage capacity. Each image will typically range from 1-10TB.

- A compute node with fast IO bandwidth to storage device (to be specified shortly)

- The ability to deploy a Python-based service (BBIC, see appendix I) and supporting libraries (HDF5, etc).

- High performance internet connectivity for web service

- A standardized authentication/authorization/identity mechanism (first version could provide public access, current version uses HBP AAI).

- Web client code for interactively viewing dataset via BBIC service (provided by HBP)

- Modern web client (Chrome/Safari/Firefox) for interactive 2D/3D viewing using WebGL and/or OpenLayers.

- Sample neuroscience-based volumetric datasets including electron microscopy, light microscopy, two-photon imaging, light sheet microscopy, etc ranging from subcellular to whole brain – provided by HBP, OpenConnectome, Allen Institute and others.

Use Story II would require:

- The active repository developed for Use Story I.

- The additional deployment of Vaa3D adapted for use with BBIC. A beta version of this is currently available. The REST API may need development.

- A multiprocessor compute node with high speed access to the storage device.

- Additional datasets including image stacks/volumes of clearly labeled single or multiple neurons – provided by HBP, Allen Institute and others.

Impacts and Benefits

Use story 1 would enable a neuroscientist user to deploy their data in a specified repository where it would be accessible for web-based viewing and annotation.

Use story 2 would enable building a large library of high-quality 3D cell morphologies, which is essential to comprehensively cataloging the types of cells in a nervous system. Furthermore, enabling comparisons of neuron morphologies across species will provide additional sources of insight into neural function.

Use Cases

UC1: Brain Scan Creation

Actors:

- Brain Researcher working in a group/project performing brain scans. They work for brain research facilities around the world.

- Data Manager working at Active Repository Center, responsible for maintaining data.

Action:

A scientist creates a brain scan, which is stored as a set of files. The files should then be transferred to one of the Active Repository Centers and registered in the central metadata repository. Some metadata are embedded in the files, but most are stored in separate JSON and XML files. The metadata are important for finding the right scan in the global metadata repository. Current scan metadata include: resolution, species, size of the file, number, etc.

Scans are stored as a series of bitmaps, VTK (for 3D rendering), HDF5, or TIFF/JPEG at origin, and are then converted to HDF5. From the data structure point of view, a single scan is either a file or a directory of files. For processing, the scans are converted into HDF5 files, transferred to Active Repository Centers and then registered in the Metadata Brain Center.

Current Solution:

The images are transferred manually to Active Repository Centers, where they are processed later. In many cases FTP is involved: Brain Researchers upload the scans to an FTP server. In other cases, Brain Researchers give the Data Manager access to download the data from their own FTP servers or, in the worst case for the largest data sets, Brain Researchers send hard drives with the data to be uploaded.

Problems to be solved related to UC1:

- Data flow from Brain Research Facilities to Active Repository Centers.

- Selection of the Active Repository Center to which a scan should be delivered. There is a plan to build multiple processing centers around the world, ideally one or more per country.

- Capacity management: how to maintain storage grants for different groups of scientists.

- How to replicate data between Active Repository Centers. Replication is needed when latency is too high, e.g. for China or, in some cases, the west coast of the US. When data is replicated, the location of the replicas will be stored in the metadata.

- Metadata management support

- Authentication scheme and authorization scheme for data submitter
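The center selection, capacity and replication concerns listed above can be illustrated with a minimal sketch. It assumes that each Active Repository Center advertises its free capacity and that a latency from the submitting facility has been measured. The `Center` class, its field names, the 150 ms replication threshold and the example numbers are all hypothetical and are not part of any HBP or EGI interface.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Center:
    """Hypothetical description of one Active Repository Center."""
    name: str
    free_tb: float  # storage still available to the submitting group


def select_center(centers: List[Center], latency_ms: Dict[str, float],
                  scan_tb: float) -> Optional[Center]:
    """Pick the lowest-latency center that can still hold the scan."""
    candidates = [c for c in centers
                  if c.free_tb >= scan_tb and c.name in latency_ms]
    if not candidates:
        return None
    return min(candidates, key=lambda c: latency_ms[c.name])


def needs_replica(latency_ms: float, threshold_ms: float = 150.0) -> bool:
    """Replicate when latency to the primary copy exceeds an assumed threshold."""
    return latency_ms > threshold_ms


# Example values are invented for illustration only.
centers = [Center("center-a", free_tb=50.0), Center("center-b", free_tb=5.0)]
latency = {"center-a": 40.0, "center-b": 12.0}
chosen = select_center(centers, latency, scan_tb=8.0)
print(chosen.name if chosen else "no center has enough capacity")
```

In a real deployment the capacity figures would come from the storage grants mentioned above, and the chosen location (plus any replica locations) would be written to the scan's metadata record.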

Output:

- Sets of directories containing brain scans described by some metadata files in JSON or XML formats

UC2: Remote interactive multiresolution visualization of large volumetric datasets

Actors:

- Brain Researcher: a researcher interested in navigating through existing brain scans.

Actions:

Brain Researchers navigate around the brain image using a web browser. To make that possible, the Brain Researcher must first find the relevant scan using the central metadata server provided by the Metadata Brain Center. Then, with a direct link to the Active Repository Center, the Brain Researcher is able to dive virtually into the brain thanks to the WebGL standard (not a hard requirement; graphics acceleration is not required). For this to work, the Active Repository Center needs to deploy an interface that produces WebGL data from the registered image scans. The interface reads data through the POSIX API and produces a significantly smaller amount of data in the form of HTML+WebGL. Generating the WebGL data is IO intensive. Experiments showed that one physical server can handle at most 10 simultaneous viewers. The expected number of simultaneous users is still unknown; HBP wants to understand how to scale.

Dynamic load balancing of clients will be needed when many users access the same data set of interest, but this should not be a problem in the short term (2 years).
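To show why a multiresolution service only needs to touch a small part of a terabyte-scale scan, the following sketch computes a resolution pyramid for one image plane and the tiles that cover a requested region. This is a generic tiling scheme under stated assumptions (each level halves the previous one, tiles are 256 pixels square); it is not a description of how BBIC actually lays out its data, and the function names are hypothetical.

```python
import math
from typing import List, Tuple


def pyramid_levels(width: int, height: int, tile: int = 256) -> List[Tuple[int, int, int]]:
    """Return (level, width, height), halving until the plane fits in one tile."""
    levels = []
    level, w, h = 0, width, height
    while True:
        levels.append((level, w, h))
        if w <= tile and h <= tile:
            break
        w = max(1, math.ceil(w / 2))
        h = max(1, math.ceil(h / 2))
        level += 1
    return levels


def tiles_for_region(x0: int, y0: int, x1: int, y1: int,
                     level: int, tile: int = 256) -> List[Tuple[int, int]]:
    """Tile (column, row) indices at `level` covering the full-resolution
    region [x0, x1) x [y0, y1)."""
    scale = 2 ** level
    c0, r0 = (x0 // scale) // tile, (y0 // scale) // tile
    c1, r1 = ((x1 - 1) // scale) // tile, ((y1 - 1) // scale) // tile
    return [(c, r) for r in range(r0, r1 + 1) for c in range(c0, c1 + 1)]


# A hypothetical 100000 x 80000 pixel plane: a zoomed-out view needs few tiles.
levels = pyramid_levels(100_000, 80_000)
print(len(levels), "levels; coarsest:", levels[-1])
print(len(tiles_for_region(0, 0, 100_000, 80_000, level=levels[-1][0])), "tile(s) for the overview")
```

The number of tiles fetched depends on the viewport and zoom level rather than on the size of the scan, which is what keeps the data sent to the browser small.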

Current location of the data:

CINECA, Juelich, Oslo, SuperComputer Center in Switzerland, UPM Spain. The designated repository is chosen per geographical location (the closest). Current size of data collections is: xxx

Problems to be solved related to UC2:

- How to store the data and keep them available through a high-throughput POSIX interface while still providing federated management functionality.

- Access control to data integrated with AAI based on OpenID (to start with, AAI can be avoided by using open data, where authentication and authorization are not necessary).

- How to maintain the data space (space allocation, CPU utilization, etc.).

- How to easily deploy Active Repository Centers, so that there can be as many as possible.

- How to distribute software for Active Repository Centers. In other words, releasing new software for image navigation should lead to a graceful update of all the Active Repository Centers (there will be many data producers around the world; the intention is to provide easy-to-use means for them to upload the data).

- If the cloud is a solution, what the cost model would be (depends on which cloud and which cloud model).

- Storage QoS taking UC3 into account, which might degrade storage performance for UC2, keeping in mind that UC2 is more interactive and UC3 is more batch oriented.

UC3: Feature extraction and analysis of large volumetric datasets

Actors:

- Neuroscientist: an actor trying to generate new data based on existing brain scans and register the new data in the central metadata server. (Brain Researcher is the more general term; the two have similar access behaviours and access rights.)

Actions:

From the technical point of view, in this use case neuroscientists process data directly from the repositories and generate new data, which should be registered in the global metadata server.

Detailed Description: This use case would entail deploying Vaa3D (www.vaa3d.org) as an additional service to the active repository described in UC2. Vaa3D is open source and provides a plugin architecture into which any type of neuron reconstruction algorithm can be adapted. This use case would require additional computational resources (and could benefit from multithreaded and parallel compute resources) for the reconstruction process.

In this use case, a neuroscientist user would provide input parameters via a web service to a Vaa3D instance, which would trace any recognized neuron structures using a selected algorithm. The output file would be returned via the web service. The output is small and can be transferred over the REST interface. No specific hardware is needed; 4 cores are sufficient.

Problems:

- How to give neuroscientists access to the actual data and provide them in the same time possibility to generate new data. The generated data needs to be stored somewhere keeping in mind limited access and yet registered in the central metadata.

- How to efficiently process the data on cloud or grid infrastructure

data is small

Use case requirements:

- The active repository developed for UC2.

- The additional deployment of Vaa3D (www.vaa3d.org) adapted for use with BBIC. A beta version of this is currently available. The REST API may need development.

- A multiprocessor compute node with high speed access to the storage device.

- Additional datasets including image stacks/volumes of clearly labeled single or multiple neurons - provided by HBP, Allen Institute and others. (data can be processed at each place, not issue for migration)

- Scalable computing environment.

Output:

Extracted data objects …. keeping full control of the access rights to data owners.

UC4: Publication and citation of data

Actors:

- Data Owner: neuroscientists having administrative access to the data

Actions:

Data Owners should be able to generate persistent citable links to data. This should also work with DOIs. However, by generating citable links, the data owner takes on some responsibility for keeping that data available in the longer term. The data owner can define the access level to the cited data, including an anonymous level that grants access to anyone who has the link. In other cases, access control on the cited data should still be possible. Access to the cited data should be monitored so that data access statistics can be generated.

In this use case any object could be cited, including original data sets, subvolumes, extracted objects, etc.

A challenging issue in this use case might be the ability to cite data that is the output of a processing service. For instance, an actor may want to cite a part of a brain scan that is accessible via image navigation services without necessarily copying that data.

Problems:

- integration of high throughput data repositories with concepts of persistence and citation of data

- limited access to long-term data preservation features

- forking of data between data owners to take over responsibility for long-term data preservation

- citing data that have no physical representation but are the output of other processing services, without storing that output. However, to achieve long-term persistence it might be necessary to copy the cited data, to avoid future problems when the processing service changes and generates different outputs.

- data deletion policy

UC5: Management of Access Control Rights

Actors:

- Data Owner: neuroscientists having administrative access to the data

Actions:

Human Brain Project maintains its own LDAP repository of users and groups. Based on that there is an OpenID provider which supports identification of the users.

There must be an easy way to maintain ACL rights based on group membership. Each scan or data object could have individual ACL rights maintained by the users holding the respective permissions. At the moment data is only accessible by authenticated users, including users processing off-line. Data owners decide the access controls.

Requirements:

- System should be compatible with OpenID

- ACL
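A group-based ACL check of the kind described above could look like the following minimal sketch. It assumes that the user identity and group memberships have already been established (for example from the OpenID login and the LDAP directory); the `DataObject` class and its fields are hypothetical and only illustrate the rule that data owners decide who may read each object.

```python
from dataclasses import dataclass, field
from typing import Iterable, Optional, Set


@dataclass
class DataObject:
    """Hypothetical scan or derived object with its own ACL."""
    guid: str
    owner: str
    public: bool = False                      # open data: no login required
    read_groups: Set[str] = field(default_factory=set)


def can_read(obj: DataObject, user: Optional[str], user_groups: Iterable[str]) -> bool:
    """Allow public objects, the owner, and members of any permitted group."""
    if obj.public:
        return True
    if user is None:                          # unauthenticated request
        return False
    if user == obj.owner:
        return True
    return bool(obj.read_groups & set(user_groups))


scan = DataObject(guid="example-guid", owner="alice", read_groups={"lab-a"})
print(can_read(scan, "bob", ["lab-a"]))
print(can_read(scan, None, []))
```

Keeping the decision in one small function makes it straightforward to start with public data only and add group checks later, as suggested for the first version of the service.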

Information Viewpoint

Data

- Represents in vivo, in vitro and in silico entities

- Represents datasets

- Describes properties using ontologies

- Records where an entity or observation is located

- Tracks how data is produced

- Tracks who performed experiments/manipulations

Data size

Each image will typically range from 1-10TB

Data collection size

O(10 PB) currently; expected to grow to O(1000 PB) within the next 5-10 years
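As a rough planning aid, the growth stated above can be turned into an implied yearly growth factor. The sketch below assumes smooth compound growth and treats the order-of-magnitude figures as exact values, which they are not, so the result is only indicative.

```python
def annual_growth_factor(start_pb: float, end_pb: float, years: float) -> float:
    """Constant yearly multiplier that takes start_pb to end_pb in `years`."""
    return (end_pb / start_pb) ** (1.0 / years)


for years in (5, 10):
    factor = annual_growth_factor(10, 1000, years)
    print(f"{years} years: x{factor:.2f} per year")
```

Under these assumptions, a hundredfold increase corresponds to the collection growing by roughly 2.5 times per year over 5 years, or roughly 1.6 times per year over 10 years.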

Data format

Brain scans are stored as a series of bitmaps, VTK (for 3D rendering), HDF5, or TIFF/JPEG at origin, and are then converted to HDF5. From the data structure point of view, a single scan is either a file or a directory of files.

Data Identifier

Current system has index to data and to metadata, searching facilities are provided allowing data discovery. Each dataset is associated with a global unique identifier and there are references (URIs) for multiple representations. For example, there is an entry for a unique dataset and its replica URIs, they link to a common GUID in the metadata system.
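The relationship between a dataset's global identifier and its replica URIs can be sketched as a small in-memory catalog. This is an illustration only: the `MetadataCatalog` class, its method names and the example URIs are hypothetical, and the real metadata system is not described in enough detail here to reproduce its interface.

```python
import uuid
from typing import Dict, List


class MetadataCatalog:
    """Hypothetical stand-in for the index that links GUIDs to replica URIs."""

    def __init__(self) -> None:
        self._replicas: Dict[str, List[str]] = {}

    def register(self, primary_uri: str) -> str:
        """Create a new globally unique identifier for a dataset."""
        guid = str(uuid.uuid4())
        self._replicas[guid] = [primary_uri]
        return guid

    def add_replica(self, guid: str, uri: str) -> None:
        """Attach another location to an existing dataset (KeyError if unknown)."""
        uris = self._replicas[guid]
        if uri not in uris:
            uris.append(uri)

    def resolve(self, guid: str) -> List[str]:
        """Return every known location of the dataset."""
        return list(self._replicas[guid])


catalog = MetadataCatalog()
guid = catalog.register("https://repo-a.example.org/scans/scan-001.h5")
catalog.add_replica(guid, "https://repo-b.example.org/scans/scan-001.h5")
print(guid, catalog.resolve(guid))
```

A client would resolve the GUID first and then pick the replica closest to it, which matches the per-location repository designation described for the data locations.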

Standards in use

W3C PROV-DM with HBP extensions

Data locations

CINECA, Juelich, Oslo, SuperComputer Center in Switzerland, UPM Spain (contacts TBD).

Data Management Plan

- Tier 0 - Unrestricted

  - All metadata and/or data freely available (includes contributor, specimen details, methods/protocols, data type, access URL)

  - Reward: Potential citation, collaboration

- Tier 1 - Restricted use

  - Data available for restricted use, developing analysis algorithms

  - Reward: Data citation

- Tier 2 - Restricted use

  - Data available for restricted use, nonconflicting research questions

  - Reward: Co-authorship

- Tier 3 - Restricted use

  - Full data available for collaborative investigation, joint research questions

  - Reward: Collaboration, co-authorship

Privacy policy

Data use agreement

- Sets conditions for data use

- Don’t abuse privileges (e.g. deidentify human data)

- Don’t redistribute, go to approved repository for registered access (maintain data integrity, tracking accesses)

- Agree to acceptable use policies (e.g. investigate non-embargoed questions only)

- Embargo duration

- Share and share-alike - if data combined with others that should be shared, result should be shared

- Commercial use?

- Stakeholders

- Points to research registry for dataset

- Owners can reserve (embargo) data access for specific research questions for limited time period

- Others may access for non-embargoed use

Metadata

The metadata are important for finding the right scan in the global metadata repository. Current metadata of scans are: resolution, species, size of the file, number, etc.

Metadata Identifiers

see: HBP-CORE schema

Metadata size

< 100K per image stack

Metadata format

Some metadata are embedded in the data file, but most are stored in separate JSON and XML files.

Standards in use

JSON & XML
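A JSON metadata record for one scan might be assembled as in the sketch below. Only resolution, species and file size are named in this document; the key names, the units and the `data_format` field are assumptions made for illustration and do not reproduce the HBP-CORE schema.

```python
import json
from pathlib import Path
from typing import Dict, Sequence


def scan_size_bytes(scan_path: Path) -> int:
    """Total size of a scan that is either one file or a directory of files."""
    if scan_path.is_file():
        return scan_path.stat().st_size
    return sum(p.stat().st_size for p in scan_path.rglob("*") if p.is_file())


def build_metadata(name: str, species: str, resolution_um: Sequence[float],
                   size_bytes: int, data_format: str = "HDF5") -> Dict:
    """Assemble a metadata record using hypothetical key names."""
    return {
        "name": name,
        "species": species,
        "resolution_um": list(resolution_um),  # voxel size per axis (assumed unit)
        "size_bytes": size_bytes,
        "data_format": data_format,
    }


def to_json(record: Dict) -> str:
    """Serialise the record as it could be stored next to the scan."""
    return json.dumps(record, indent=2, sort_keys=True)


record = build_metadata("scan-001", "mouse", (0.5, 0.5, 2.0), 4 * 10**12)
print(to_json(record))
```

A record of this shape stays far below the metadata size quoted for an image stack, so it can be harvested and indexed centrally while the scan itself stays in its repository.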

Data LifeCycle

- Ingestion:

- Register unique identifiers for each contributor, specimen type, methods/protocols, data types, location, etc

- Mapping metadata for data objects to common HBP data model with provenance info

- Issuing persistent identifiers for data objects in each repository

- Data registration REST-API

- Metadata harvesting

- Defining OAI-PMH with common HBP Core data model

- Add entry to KnowledgeGraph – semantic provenance graph

- Curation

- Registering spatial data to common spatial coordinates

- Data feature extraction/quality checks

- Update KnowledgeSpace Ontologies

- Augmenting ontologies for metadata (methods/ protocols/specimens, etc)

- Review article defining concepts w/data links

- Search

- Indexing to enable discovery of related (integrable) data

- Access

Technology Viewpoint

System Architecture

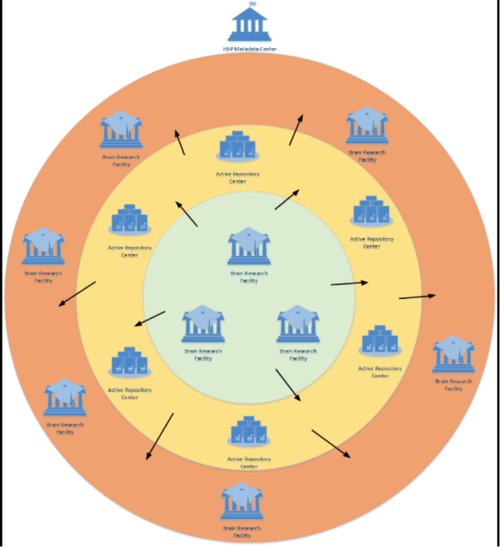

Brain Research Facility. Large amounts of image stacks or volumetric data are produced daily at brain research sites around the world. This includes human brain imaging data in clinics, connectome data in research studies, whole brain imaging with light-sheet microscopy and tissue clearing methods or micro-optical sectioning techniques, two-photon imaging, array tomography, and electron beam microscopy.

Active Repository Center. The actual data from Brain Research Facilities must be stored in data centers that have suitable storage solutions plus capacity for data processing.

Metadata Brain Center. Maintains the central HBP metadata repository. The repository is a kind of directory for all the available data in Active Repositories; it also maintains the LDAP directory of HBP members and the OpenID interface for authentication.

Community data access protocols

The images are transferred manually to Active Repository Centers, where they are processed later. In many cases FTP is involved: Brain Researchers upload the scans to an FTP server.

Data management technology

BBIC (Blue Brain Image Container) (refer to HBP Volume Imaging Service.docx), which manages 2 data types:

- Stacks of images

- 3D volumes

Data access control

The interface reads data through the POSIX API and produces a significantly smaller amount of data in the form of HTML+WebGL.

Public data access protocol

HTTP queries

Public authentication mechanism

Data Owner: neuroscientists having administrative access to the data.

Current status: Human Brain Project maintains its own LDAP repository of users and groups. Based on that there is an OpenID provider which supports identification of the users. There must be an easy way to maintain ACL rights based on group membership. Each scan or data object could have individual ACL rights maintained by the users holding the respective permissions. At the moment data is only accessible by authenticated users, including users processing off-line. Data owners decide the access controls.

Requirements:

- System should be compatible with OpenID

- ACL

Required Computing Capacities

| Resource | Requirement |
|---|---|
| CPU | quad-core minimum |
| GPU | N/A |
| RAM | 8 GB |
| Storage | SSD preferred, HDD if necessary. Network filesystem storage may also be used, subject to the network constraints listed below. |
| Network | Fast IO bandwidth, 1 GB/s per node |
| e-Infrastructure | Cloud, as this is a cloud capability test |
| Client | Workstation, laptop, mobile device |

Non-functional requirements

| Performance Requirements | Requirement levels | Description |
|---|---|---|
| Availability | Normal | Process of migrating data between active repositories if needed; this might be limited (next-step requirement) |
| Accessibility | Normal | Simplicity of the access control (UC5); not that important at the very beginning. |
| Utility | Middle | How difficult distribution/upgrading of the application software onto the environment will be. A Docker approach is currently under investigation by HBP. |
| Reliability | Normal | How difficult distribution/upgrading of the application software onto the environment will be. A Docker approach is currently under investigation by HBP. |
| Scalability | Middle | Scalability of processing load distribution/brokering; instantiation of new processing units based on the traffic to active repositories should be easy (maybe automatic). (UC2) |
| Efficiency | High | Simplicity of the process of transferring data to the active repository site |
| Effectiveness | High | Simplicity of bringing up another active repository |
| Flexibility | High | Flexibility of accessing the same data by multiple scientists, including intra-group access |
| Decentralisation | High | Decentralization of resource management. There are many collaborating groups but they remain independent. The system should be flexible enough to allow those groups to obtain resources independently while still providing some level of integration. |
| Throughput | High | Request for fast IO bandwidth, 1 GB/s per node |
| Response time | High | Request for fast IO bandwidth, 1 GB/s per node |
| Security | Normal | AAI provided by HBP, data is non-critical |
| Disaster recovery | Normal | For the testing phase, the primary location of the data will not be on the EGI infrastructure. During the production phase, data integrity guarantees will need to be defined with the customer community. |

Software and applications in use

Software/ applications/services

- BBIC (Blue Brain Image Container, a collection of REST services for providing imaging and meta-information extracted from BBIC files)

- Vaa3D (www.vaa3d.org), which provides a plugin architecture into which any type of neuron reconstruction algorithm can be adapted.

- Software licensing: open source

- Configuration: will be handled by HBP

- Dependencies needed to run the application, indicating origin and requirements: will be handled by HBP

Operating system

Any modern Linux (RedHat and Ubuntu distributions have been tested)

Typical processing time

1 day for 4TB image stack, extrapolated from current small scale tests
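The quoted processing time implies a sustained processing rate, which can be worked out as follows. The sketch assumes decimal terabytes and linear scaling with image size; since the figure above is itself an extrapolation from small-scale tests, the results are rough estimates only.

```python
TB = 10**12            # decimal terabyte (assumption)
SECONDS_PER_DAY = 86_400


def implied_throughput_mb_s(size_tb: float, days: float) -> float:
    """Average processing rate in MB/s for `size_tb` processed in `days`."""
    return size_tb * TB / (days * SECONDS_PER_DAY) / 10**6


def estimated_days(size_tb: float, ref_size_tb: float = 4.0, ref_days: float = 1.0) -> float:
    """Linear extrapolation from the reference point of 4 TB in 1 day."""
    return size_tb / ref_size_tb * ref_days


print(f"{implied_throughput_mb_s(4, 1):.1f} MB/s")
print(f"{estimated_days(10):.1f} days for a 10 TB image")
```

At roughly 46 MB/s, processing runs far below the 1 GB/s per node requested for storage access, which suggests that under these assumptions the reconstruction is bounded by computation rather than by IO.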

Runtime libraries/APIs

- BBIC is implemented in Python using the Tornado web server; an alternative version uses Python’s minimalist SimpleHTTPServer

- REST API

- HCL (Hyperdimensional Compression Library)

Test Software

Requirements for e-Infrastructure

- PERFORMANCE. Ability to sustain a throughput of 1 GB/s per node to support 10 users (for image service nodes, throughput between the data server and the storage)

- STORAGE CAPABILITIES

- HDF5 files of up to 10 TB in size

- POSIX access to data

- CO-LOCATION. CPU close to data
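The performance and storage figures above translate into a few simple sizing numbers. The sketch below assumes decimal units, an even split of node bandwidth between viewers, and the limit of 10 simultaneous viewers per server reported for the visualization use case; the function names are hypothetical.

```python
import math

GB = 10**9   # decimal units (assumption)
TB = 10**12


def per_user_mb_s(node_gb_s: float = 1.0, users: int = 10) -> float:
    """Bandwidth available to each viewer if the node is shared evenly."""
    return node_gb_s * 1000 / users


def nodes_needed(viewers: int, viewers_per_node: int = 10) -> int:
    """Image service nodes required for a number of simultaneous viewers."""
    return math.ceil(viewers / viewers_per_node)


def full_read_hours(file_tb: float = 10.0, node_gb_s: float = 1.0) -> float:
    """Time to stream one complete file from storage at the node's full rate."""
    return file_tb * TB / (node_gb_s * GB) / 3600


print(per_user_mb_s(), "MB/s per viewer")
print(nodes_needed(25), "nodes for 25 viewers")
print(f"{full_read_hours():.2f} h to read a 10 TB file")
```

Even at 1 GB/s, reading a whole 10 TB file takes close to three hours, which illustrates why compute has to sit next to the data and why interactive viewing must read only small subsets.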

Requirements for EGI Testbed Establishments

| Question | Answer |
|---|---|
| Preferences on specific tools and technologies | A Docker approach is currently under investigation in HBP. iRODS is not preferred. |
| Preferences on specific resource providers | Centres with access to PRACE backbones would be good for testing |
| Approximately how much compute and storage capacity is needed, and for how long? | irrelevant |
| Does the user (or those he/she represents) have access to a Certification Authority? | yes |
| Does the user need access to an existing allocation? | TBD. |
| Does the user (or those he/she represents) have the resources, time and skills to manage an EGI VO? | No |
| Which NGIs are interested in supporting this case? (Question to the NGIs) | Not currently evaluated |

EGI Contacts

- Project Leader: Tiziana Ferrari, EGI.eu, tiziana.ferrari@egi.eu

- Technology Leader: Lukasz Dutka, Cyfronet, lukasz.dutka@cyfronet.pl

- Requirement Collector: Bartosz Kryza, Cyfronet, bkryza@agh.edu.pl

- Requirement Collector: Yin Chen, EGI.eu, yin.chen@egi.eu

HBP Testbed

The HBP Testbed is composed of resources from a number of Research Institutes:

- Cyfronet (contact: Lukasz Dutka)

HBP:Cyfronet-Site-Setup HBP:Cyfronet-Notes

- DESY (contact: Christian Bernardt)

HBP:DESY-Site-Setup HBP:DESY-Notes

- FZ Juelich (contact: Björn Hagemeier)

HBP:FZJ-Site-Setup HBP:FZJ-Notes

- Bari (contact: Giacinto Donvito)

HBP:Bari-Site-Setup HBP:Bari-Notes

Meeting and minutes

- Second technical discussion meeting for supporting the HBP use cases: VT Federated Data Meeting 16th Jun 2015 meeting minutes

- HBP discussion in EGI CF 2015 Lisbon, Towards an Open Data Cloud session: [1]

- Second Requirement collection meeting with HBP VT Federated Data Meeting 13th May 2015 meeting minutes

- First technical discussion meeting for supporting the HBP use cases VT Federated Data Meeting 7th May 2015 meeting minutes

- First Requirement collection meeting with HBP 21 Apr 2015 meeting page

- EGI/HBP Collaboration Meeting Minutes EGI-Neuroinformatics Indico Pages

Reference

- EGI Document Database Entry for HBP: https://documents.egi.eu/secure/ShowDocument?docid=2468

- Active Repositories - HBP use cases .doc

- Lukasz's presentation on EGI 2015 HBP session .pptx

- Recording of EGI-HBP interaction meeting on 21 April 2015 .arf

- Requirement Extraction for Open Data Platform Project, information is filled in template [2]

- Requirements extraction from the meeting recording .doc

- Sean's keynotes talk on EGI 2015 .pdf

- Sean's presentation on EGI 2015 EGI-HBP discussion session .pdf

- Sean's presentation on EGI 2015 Open Data Cloud session .pdf

- Technique Analysis of the HBP requirements (Lukasz) google doc

- RT tickets for HBP https://rt.egi.eu/rt/Ticket/Display.html?id=8572

- HBP workshop in the 6th RDA plenary, slides and presentations: https://rd-alliance.org/plenary-meetings/sixth-plenary/programme/e-infrastructures-rda-data-intensive-science/infrastructure

- VT Scalable Access to Federated Data: https://wiki.egi.eu/wiki/VT_Federated_Data

- Matthew Viljoen's HBP Testing Status Update (Presented to NILS on 1 Feb 2016) 20160201_hbp_status_update.pptx

- EGI/HBP Pilot Report (by Matthew Viljoen et al. Presented to Sean Hill on 24 Mar 2016) HBP_pilot_report-1.0.pdf

- HBP Testbed Status (by Matthew Viljoen, Presented to EGI CF, Apr 2016) 20160408_egi_testbed_for_hbp.pptx