Competence centre LifeWatch/Citizen Science/Task 4.2

The objective of this task is to develop software able to identify plants from images, in order to allow citizens to contribute to flora conservation. Image recognition will be implemented through machine learning with deep neural networks (aka deep learning). The framework used is Caffe, a deep learning framework created at UC Berkeley.

Caffe installation

Caffe installation in Altamira

To install Caffe on the Altamira supercomputer at IFCA (Spain) we followed both the official installation guide and a specific guide for installing Caffe on a supercomputer cluster.

Not having root access to Altamira, Caffe has been installed locally at /gpfs/res_scratch/lifewatch/iheredia/.usr/local/src/caffe. For more information on the local installation of software in a supercomputer please check [1]. The software and libraries already available at Altamira are:

- Python 2.7.10

- CUDA 7.0.28

- OpenMPI 1.8.3

- Boost 1.54.0

- protobuf 2.5.0

- gcc 4.6.3

- HDF5 1.8.10

The remaining libraries have been installed at /gpfs/res_scratch/lifewatch/iheredia/.usr/local/lib. Those libraries are:

- gflags

- glog

- leveldb

- OpenCV

- snappy

- LMDB

- ATLAS

Modules can be loaded all at once by loading /gpfs/res_scratch/lifewatch/iheredia/.usr/local/share/modulefiles/common.

- Comments

At the moment Altamira runs on Tesla M2090 GPUs with CUDA capability 2.0. Therefore Caffe has been compiled without cuDNN (the GPU-accelerated library of primitives for deep neural networks), which requires GPUs with CUDA capability 3.0 or higher.

Caffe installation in Yong

With root access, installing Caffe on Ubuntu is straightforward. Yong runs on Nvidia's Quadro 4000 GPU, which does not support cuDNN either. This GPU has very limited memory: it allows training small networks on small, simple datasets (e.g. MNIST), but it cannot store (and therefore cannot train) the more complex networks needed to learn more involved datasets (e.g. ImageNet).

Caffe architecture

Neural networks learn their layers' parameters using backpropagation, where the gradients of one layer are used to compute the gradients of the previous one (the communication between layers in Caffe is implemented through blobs, which store the values and gradients of each layer of the network). Deep networks are therefore very modular, and Caffe reflects this modularity by giving you (almost) complete freedom to compose your network's architecture.
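To make the chain of gradients concrete, here is a minimal sketch in plain Python (it is not Caffe code) of a tiny two-layer network with scalar weights. The function name and the choice of a squared-error loss are assumptions made only for this illustration.

```python
def forward_backward(x, target, w1, w2):
    """Forward and backward pass for loss = 0.5 * (w2 * relu(w1 * x) - target)**2."""
    # Forward pass: every layer keeps its output (the role blobs play in Caffe)
    z = w1 * x                   # layer 1: inner product
    h = max(z, 0.0)              # layer 1: ReLU activation
    y = w2 * h                   # layer 2: inner product
    loss = 0.5 * (y - target) ** 2

    # Backward pass: each gradient is computed from the gradient of the next layer
    dy = y - target              # dloss/dy
    dw2 = dy * h                 # dloss/dw2
    dh = dy * w2                 # gradient handed down to layer 1
    dz = dh if z > 0 else 0.0    # ReLU passes the gradient only where z > 0
    dw1 = dz * x                 # dloss/dw1
    return loss, dw1, dw2
```

Note how `dw1` can only be computed once `dh`, the gradient coming from the second layer, is known.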

There are several types of intermediate layers which perform the computation:

Common layers:

- Inner product

- Splitting

- Flattening

- Reshape

- Concatenation

- Slicing

- Element-wise operations

- Argmax

- Softmax

- Mean Variance Normalization

Vision layers:

- Convolution

- Pooling

- Local Response Normalization

Activation/Neuron layers:

- ReLU

- Leaky ReLU

- Sigmoid

- Tanh

- Absolute Value

- Power

- Binomial Normal LogLikelihood

Activation functions like sigmoid and tanh are not as popular as they used to be. It is usually safe to choose ReLUs to ensure faster convergence.
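A commonly given explanation, added here as background and not taken from our own experiments, is that the sigmoid saturates: its derivative is at most 0.25 and becomes tiny for large inputs, whereas the ReLU derivative stays at 1 for any positive input. A minimal sketch (the function names are illustrative):

```python
import math

def sigmoid_grad(z):
    """Derivative of the sigmoid 1 / (1 + exp(-z))."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

def relu_grad(z):
    """Derivative of max(z, 0): 1 for positive inputs, 0 otherwise."""
    return 1.0 if z > 0 else 0.0

if __name__ == "__main__":
    for z in (0.5, 5.0, 10.0):
        print(z, sigmoid_grad(z), relu_grad(z))
```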

Although constructing a network is simple, designing it correctly often takes a considerable amount of expertise. Beginners are therefore usually advised to train their datasets on the existing networks (e.g. AlexNet) kindly provided with Caffe.

Training with Caffe

Training can be divided into several steps. The MNIST tutorial and the ImageNet tutorial are good references to follow.

Preparation of the data

First it is necessary to do some image preprocessing: resizing the images to a square (e.g. 256x256 for AlexNet) and mean centering (subtracting the mean image of the dataset from each example), which has been seen to lead to faster convergence. For image resizing in Ubuntu try the command:

    # Resize every validation image in place to exactly 256x256
    for name in /path/to/imagenet/val/*.JPEG; do
        convert -resize 256x256\! "$name" "$name"
    done

Mean centering will be done directly during training. There are other kinds of preprocessing operations (like normalization, decorrelation with PCA and whitening) that are common in machine learning but that have not proven useful in image recognition with deep learning. You should also create a train.txt and a val.txt file. Each file is a list with the following structure:

    path_to_image1.jpg 0 #label
    path_to_image2.jpg 0
    ...
    path_to_image100.jpg 56
    ...

where the paths should be relative to your image folder. If you intend to build a hierarchical classification like in ImageNet (e.g. the labels cobra and boa can be grouped under snakes) you might need some additional files (like a .bet.pickle, which defines a graph for your dataset). This will prove useful in the classification of flora, as species group into genera, which in turn group into families and so on.
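As a minimal sketch of how such a list could be generated, the function below builds the lines from a dictionary of file names. It assumes, hypothetically, that the images are stored in one subfolder per class inside the image folder; `make_list_lines` and `genus_of` are illustrative names, not part of Caffe.

```python
def make_list_lines(files_by_class):
    """Build 'relative/path label' lines from {class_name: [file names]}."""
    class_names = sorted(files_by_class)
    # Integer labels are assigned in alphabetical order of the class names
    label_ids = {name: i for i, name in enumerate(class_names)}
    lines = []
    for name in class_names:
        for fname in sorted(files_by_class[name]):
            # Path relative to the image folder, followed by the integer label
            lines.append("%s/%s %d" % (name, fname, label_ids[name]))
    return lines, label_ids

def genus_of(species_name):
    """The genus is the first word of a binomial species name."""
    return species_name.split()[0]
```

For example, `genus_of("Quercus suber")` gives `"Quercus"`, which is one simple way of moving one level up the hierarchy when the species labels are binomial names.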

The second step is to create the lmdb files (for the TRAIN and TEST sets) that will be fed to Caffe. When efficiency is not critical, images can also be fed to Caffe directly from disk, from HDF5 files or from common image formats. The lmdb files can be created by modifying create_imagenet.sh and updating the different paths. Then modify make_imagenet_mean.sh with your lmdbfile_path. This will generate a mean .binaryproto file that will be used later for centering the images.

Caffe doesn't need a validation set because it only optimizes the weights, not the hyperparameters (the val.txt acts as a test set). We could in principle optimize the hyperparameters with a Python script that loops over them. Caffe would train each time with a specific combination of hyperparameters and test its accuracy on a validation set (which Caffe considers to be a TEST set); we would then select the combination of hyperparameters that gives the highest accuracy. However, the final real accuracy must be measured on a completely independent set of images, the real test set (refer to the Test section).
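Such a loop could be sketched as follows. This is only an outline under stated assumptions: the solver template is deliberately truncated to three fields (a real solver file needs the other fields too), the helper names are hypothetical, and the step of launching Caffe and reading the accuracy back from its output is left as a comment.

```python
import itertools

# Truncated solver template: only the fields varied in this sketch
SOLVER_TEMPLATE = (
    'net: "{net}"\n'
    "base_lr: {base_lr}\n"
    "weight_decay: {weight_decay}\n"
)

def solver_grid(net, base_lrs, weight_decays):
    """Yield (params, solver_text) for every hyperparameter combination."""
    for base_lr, weight_decay in itertools.product(base_lrs, weight_decays):
        params = {"net": net, "base_lr": base_lr, "weight_decay": weight_decay}
        yield params, SOLVER_TEMPLATE.format(**params)

def best_combination(results):
    """results: list of (params, validation_accuracy); return the best params."""
    return max(results, key=lambda item: item[1])[0]

# For each (params, text): write the text to a solver file, train with
# ./build/tools/caffe train --solver=<that file>, record the validation
# accuracy, and finally call best_combination on the collected results.
```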

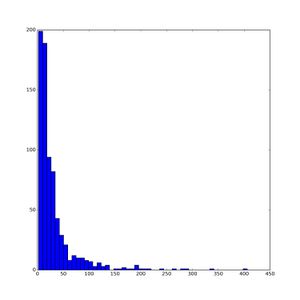

Deep networks are usually trained with very large datasets (e.g. the ImageNet dataset provides around 1000 images per label). In our case the datasets are not that large. Take for example the Portuguese flora dataset. It includes around 2073 species, but most of them have very few images. Therefore the strategy should be to identify the genus instead of the species. As we can see in Figure 1, most genera still have very few images. Of the 747 different genera only 292 have at least 25 images. Selecting 5 images for the test set and another 5 for the validation set, we have only 15 images left (in the worst case) for the training set.
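The selection of genera and the worst-case count can be written down in a few lines. This is a sketch with illustrative names; it assumes we already have one genus name per image.

```python
from collections import Counter

def genera_with_enough_images(image_genera, min_images=25):
    """Return the genera that appear at least min_images times."""
    counts = Counter(image_genera)
    return sorted(g for g, n in counts.items() if n >= min_images)

def worst_case_training_images(min_images=25, n_test=5, n_val=5):
    """Images left for training in a class that has exactly min_images images."""
    return min_images - n_test - n_val
```

With the defaults, `worst_case_training_images()` is 25 - 5 - 5 = 15, the figure quoted above.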

A possible way of increasing the number of training images is to use cross-validation. Cross-validation consists in splitting the training set into separate folds and cyclically rotating which fold is used for validation, so that every image is eventually used for training (which proves useful in the case of small datasets). As mentioned before, Caffe has no validation process and therefore no built-in function for cross-validation. Once again it can be implemented manually with a Python script that cyclically exchanges the train and validation sets, each time finetuning the weights starting from the previous iteration's weights (in the first iteration the weights can be finetuned from the trained weights of the Oxford 102 flower dataset or any other dataset trained with AlexNet).
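The fold rotation itself is easy to sketch. The generator below is a minimal illustration (the name `k_fold_splits` is an assumption); in practice each training/validation pair would be written to its own list files and lmdb before launching Caffe.

```python
def k_fold_splits(items, k):
    """Yield (training, validation) lists, using each of the k folds once for validation."""
    # Deal the items into k folds, like dealing cards
    folds = [items[i::k] for i in range(k)]
    for i in range(k):
        validation = folds[i]
        training = [x for j, fold in enumerate(folds) if j != i for x in fold]
        yield training, validation
```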

Defining the network's architecture

The network's architecture is defined in the train_test.prototxt file. All networks start with a data layer and end with an output layer. Each layer can optionally be labelled with TRAIN or TEST if we want to include it only in the training or the testing phase. For example we will create two data layers labelled TRAIN and TEST, pointing respectively to the train and test lmdb files. Those data layers also contain the batch size (i.e. the number of images processed at each iteration), which should be adapted to the GPU memory. Updating the parameters with batches instead of with the full dataset is a much more efficient way to ensure fast convergence. We will also create two output layers labelled TRAIN and TEST. The former outputs the computed loss in order to start the backpropagation, while the latter outputs the accuracy of the prediction on the batch of test images.

The hyperparameters of the network will be defined in the solver.prototxt file. In the case of the ImageNet model provided by Caffe for the AlexNet we have that:

    net: "models/bvlc_reference_caffenet/train_val.prototxt"
    test_iter: 1000        # number of test iterations = number_of_test_images / test_batch_size
    test_interval: 1000    # we carry out testing after 1000 training iterations
    base_lr: 0.01          # base learning rate (lr)
    lr_policy: "step"      # learning rate policy
    gamma: 0.1             # new_lr = gamma * old_lr
    stepsize: 100000       # steps after which we update the lr
    display: 20            # display every 20 iterations
    max_iter: 450000       # maximum number of iterations
    momentum: 0.9          # momentum
    weight_decay: 0.0005   # regularization parameter
    snapshot: 10000        # snapshot intermediate results
    snapshot_prefix: "models/bvlc_reference_caffenet/caffenet_train"
    solver_mode: GPU       # solver mode: CPU or GPU
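The "step" policy and the test_iter formula in the comments above can be checked with a short sketch. The function names are illustrative, and the batch size of 50 used in the example is an assumed value, not one read from this solver file.

```python
import math

def step_learning_rate(base_lr, gamma, stepsize, iteration):
    """Learning rate under the "step" policy: multiplied by gamma every stepsize iterations."""
    return base_lr * gamma ** (iteration // stepsize)

def num_test_iterations(n_test_images, test_batch_size):
    """Iterations needed to see every test image once (rounded up)."""
    return int(math.ceil(n_test_images / float(test_batch_size)))

if __name__ == "__main__":
    # Learning rate at the start of each stage of the 450000 iterations
    for it in range(0, 450000, 100000):
        print(it, step_learning_rate(0.01, 0.1, 100000, it))
    # 50000 test images with an assumed batch size of 50
    print(num_test_iterations(50000, 50))
```

With the values of this solver, the learning rate starts at 0.01 and is divided by 10 every 100000 iterations.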

As mentioned before, beginners might want to train their data with a predefined network like this one. In that case, in the train_test.prototxt file we change the data layer to adapt it to our image size (or leave it as is if the images are also 256x256). The deploy.prototxt file must be copied but remains unchanged. In the solver.prototxt file we just have to change the net path; optionally the hyperparameters can be changed if we want to do hyperparameter optimization with a validation set as suggested before.

One problem of using AlexNet to train on our Portuguese flora dataset (which is much smaller than ImageNet) is that, due to the high capacity of this net, we might overfit our data. There are two ways of avoiding overfitting: either reducing the network size or increasing the regularization (the weight_decay parameter). The latter is usually the best choice because large networks, in spite of having more local minima in their loss landscape, usually reach a smaller loss. In addition, when training larger networks the variance of the final loss is much smaller than when training small networks, so we rely less on the luck of the random initialization. The intuition behind it is that it is harder to get stuck in a high-dimensional local minimum than in a low-dimensional one.

Training

Once we have all the files, training is done by executing the following command:

./build/tools/caffe train --solver=models/bvlc_reference_caffenet/solver.prototxt

Some options can be added, for example to take snapshots of the results after a given number of iterations. Training outputs a .caffemodel file where the learned weights are stored.

Testing with PyCaffe

Caffe has a Python module named pycaffe specifically designed for the testing phase. Several ipython notebooks are available as an introduction to this module [2][3].

For the results to be reliable, testing should be done with images that have been used neither for training nor for validation. Given a test set with the same number of images per label, the standard accuracy measures are top-1 accuracy (i.e. the predicted label is the right one) and top-5 accuracy (i.e. the right label is among the first five predictions of the network).
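Both measures are instances of top-k accuracy, which can be sketched in plain Python as follows. The function name and the input layout (one list of class scores per image) are assumptions made for the illustration; with pycaffe the scores would come from the output of the network.

```python
def top_k_accuracy(scores, labels, k):
    """Fraction of images whose true label is among the k highest-scoring classes."""
    hits = 0
    for class_scores, label in zip(scores, labels):
        # Class indices sorted from the highest to the lowest score
        ranked = sorted(range(len(class_scores)),
                        key=lambda c: class_scores[c], reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / float(len(labels))
```

Calling it with `k=1` and `k=5` gives the top-1 and top-5 accuracy respectively.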

- Comments

Once the new GTX 980 Ti GPU is installed and I am able to train the Portuguese flora dataset with the AlexNet architecture, I will update the different sections with the results, especially concerning the effectiveness of hyperparameter optimization and cross-validation. In addition I will upload the Python code for preparing the dataset and measuring the accuracy of the model.

- Update

The new GPU arrived today. Good news: it is 20x faster on the MNIST dataset! Stay tuned for upcoming updates.

An example: The Portuguese Flora dataset

In this section I will present the results obtained for the training of the Portuguese flora dataset. We will train the data with a slightly modified version of the ILSVRC2012 AlexNet, called CaffeNet.

Data preparation

The dataset consists of around 24K images of flora. Each image has a specific structure: flower (close-up), leaf (close-up), fruit (close-up) or habit (wide shot). Around 3K images don't belong to any of these structures and we group them in a category called others. This category contains mostly wider shots (the plant among other plants) and some mislabeled flowers. As mentioned before there are 2078 different species (grouped in 747 genera) with images. As most species have few images we will try to identify the genus instead. Selecting the genera with at least 25 images leaves us with 288 different classes (genera).

The images are 640x480 and have to be reshaped to a square to be fed to Caffe, but what is the optimal size of the square for training? Let's perform a quick check. Leaving 5 images per label for testing, we train from scratch (i.e. with no pretrained weights for initialization) with the remaining ones. If we train with the images resized to 256x256 (the image size of ImageNet and therefore the default image size of our net) we obtain a 24% top-1 accuracy and a 44% top-5 accuracy. Training with the full-sized 460x460 images we obtain a 51% top-1 accuracy and a 70% top-5 accuracy.

To understand this difference we have to recall how AlexNet works. Instead of using the whole image as input, our net crops a 227x227 subimage (as a form of data augmentation). Therefore the bigger the image, the larger the data augmentation. The limitation of this reasoning is that, if the object to be recognized takes up a substantial part of the image (as is the case in ImageNet), a 227x227 crop of a very large image will often capture a fragment with little meaning on its own (for example the wheel of a car), which will not help to recognize the full object. Our case is different, as the images often contain several flowers, or flowers and leaves, and therefore most of the time the crops of a large image carry valuable information for classification. Therefore, from now on, we will use the full-sized 460x460 images.
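To get a feeling for how much the augmentation grows with the image size, we can count the distinct positions a 227x227 crop can take. This is a rough sketch that assumes square images and a crop that can start at any pixel offset; the function name is illustrative.

```python
def crop_positions(image_size, crop_size=227):
    """Number of distinct crop_size x crop_size crops inside a square image."""
    per_axis = image_size - crop_size + 1
    return per_axis * per_axis

if __name__ == "__main__":
    print(crop_positions(256))  # 30 * 30 = 900
    print(crop_positions(460))  # 234 * 234 = 54756
```

Under these assumptions a 460x460 image offers about 60 times more crop positions than a 256x256 one, although neighbouring crops are of course highly correlated.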

Remark: In the test phase Caffe no longer uses crops of the test images but resizes those images to 227x227 instead.

The last thing before proceeding to train is to decide how to split the dataset into training, validation and test sets. For beginners: the difference between validation and test sets is that we use the validation set for tuning the hyperparameters (like the regularization strength) to obtain better accuracy, whereas the test set must only be used at the very end to report the final accuracy. To decide, we will again perform a quick check. Leaving 5 images per label for testing, we train with the remaining ones. Then we measure the accuracy of the network as a function of the number of images per label used in the test set. The results are presented in Table 1.

| Test images per label | Top-1 Accuracy | Top-5 Accuracy |
|---|---|---|
| 5 | 0.51 | 0.70 |
| 4 | 0.51 | 0.71 |
| 3 | 0.51 | 0.70 |
| 2 | 0.51 | 0.68 |
| 1 | 0.51 | 0.70 |

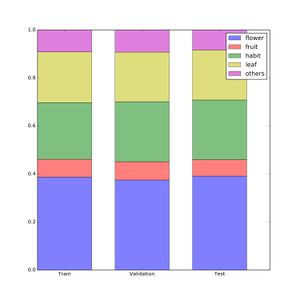

We can observe that the accuracy results do not vary substantially even with few images per label. The reason is that we have a large enough number of classes so that, even with few images per label (where we cannot cover all the structures inside each individual class), we still have a proportional representation of each structure in the overall dataset. In other words, the accuracy of each label will vary a lot depending on the number of images per label (because, as we will see, classification accuracy depends a lot on the image's structure) but the average across classes will remain almost unchanged. Therefore we (arbitrarily) decide to use 2 images/label for the validation set (576 images) and 3 images/label for the test set (864 images). The remaining 16397 images form the training set. Since the validation set is substantially smaller than the training set, we no longer need cross-validation (to reuse the validation set for training), so the training step will be simpler (and will focus on hyperparameter optimization). As a check, Figure 2 shows that the different structures are proportionally distributed inside the three datasets.
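A per-label split of this kind can be sketched as follows. The function name, the fixed random seed and the dictionary layout are assumptions for the illustration; this is not the script actually used for the figures above.

```python
import random

def split_per_label(images_by_label, n_val=2, n_test=3, seed=0):
    """Split {label: [images]} into train/val/test lists of (image, label) pairs."""
    rng = random.Random(seed)  # fixed seed so that the split is reproducible
    train, val, test = [], [], []
    for label in sorted(images_by_label):
        images = list(images_by_label[label])
        rng.shuffle(images)
        val += [(img, label) for img in images[:n_val]]
        test += [(img, label) for img in images[n_val:n_val + n_test]]
        train += [(img, label) for img in images[n_val + n_test:]]
    return train, val, test
```

With 288 classes this gives 288 x 2 = 576 validation images and 288 x 3 = 864 test images, as stated above.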

Training

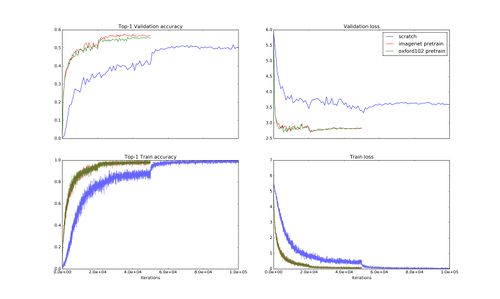

The first thing to know is that by using a well-known net we can benefit from the training other people have done previously. The CaffeNet architecture mostly consists of 5 convolutional layers with ReLU and pooling, followed by three fully connected layers. The convolutional layers are responsible for the 'vision' part whereas the fully connected layers 'translate' the convolutional activations into output probabilities. In particular the last fully connected layer is the one that gathers all the information into an N-vector of probabilities (where N is the number of labels). So if we wish to finetune the pretrained weights of CaffeNet, we can follow the Caffe recommendations and decrease the learning rate for all layers except the last fully connected layer (since it is the only one that starts training from scratch). This is achieved by decreasing the general learning rate of the net from 0.01 to 0.001 and increasing the learning rate multiplier of the last layer by a factor of 10 (both for the weights and the biases). In addition we have to change the name of that layer, for example from "fc8" to "fc8_ptflora".

In Figure 3 we show the results of training in 3 different cases: training the net from scratch (i.e. the weights start at random), finetuning from ImageNet and finally finetuning from the Oxford102 dataset. The Oxford dataset contains around 8K images belonging to 102 different flower classes. The pretrained weights oxford102.caffemodel have in turn been finetuned from imagenet.caffemodel. We can observe that when finetuning the pretrained models training time is drastically reduced. Not only that, but we also reach a higher validation accuracy at the end (50% for training from scratch and around 56% for training with pretrained models). This is intuitive because the ImageNet weights were trained on a much larger dataset (around 1.2M training images in ImageNet) than our scratch net (which has just 15K training images). So the ImageNet convolutional layers can reach a much finer state (i.e. a lower optimum of the loss) because their exploration space has been bigger.

We might notice something disturbing in the accuracy results: how come the accuracy of oxford102 is slightly lower than that of ImageNet if oxford102 has been finetuned from ImageNet? No worries! This is just an effect of the random initialization of the last fully connected layer and of the optimization method, Stochastic Gradient Descent (SGD). Optimizing with SGD implies that each pass is performed with a random batch of images, so we do not follow the exact direction of the loss gradient but just an approximation of it. This means that two optimizations started from the same initial parameters do not always give the same result. That is why, once we have tuned all the hyperparameters and before submitting our net to the competition, we train it several times with the same hyperparameters to smooth out the effect of randomness. The final prediction is the prediction averaged over this ensemble. This ensemble-averaging method usually lets us gain a few percent in accuracy.
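The ensemble averaging described here can be sketched for a single image as follows. The function names are illustrative, and the probability vectors are assumed to come from several nets trained with the same hyperparameters.

```python
def average_predictions(model_probabilities):
    """Average the class probabilities predicted by several models for one image."""
    n_models = len(model_probabilities)
    n_classes = len(model_probabilities[0])
    return [sum(p[c] for p in model_probabilities) / float(n_models)
            for c in range(n_classes)]

def predicted_label(probabilities):
    """Index of the class with the highest (averaged) probability."""
    return max(range(len(probabilities)), key=lambda c: probabilities[c])
```

For instance, if one net predicts `[0.6, 0.4]` and another `[0.2, 0.8]`, the averaged prediction favours the second class even though the first net alone would have chosen the first one.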

Remark: Note that oxford102 and ImageNet give very similar results here because we have enough images to retrain the ImageNet weights (which are better adapted to detecting dogs than flowers). In flower datasets with fewer training images (for example the orchid/tulip dataset that we shall mention in the future) we expect oxford102 to give noticeably better results, as its layers are already adapted to flower detection. So from now on, all our models will be finetuned from oxford102.

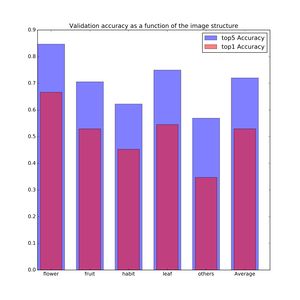

Figure 4 shows a more detailed description of the results of the oxford102 pretrained model. As expected, our net distinguishes flowers very well, followed by fruits and leaves (ex aequo), then habits, with others in last position. This makes sense, as flowers are the most recognizable part of a plant whereas habits make classification much more difficult. Others have even worse results because, in spite of the mislabeled flowers that push accuracy up, the very wide shots substantially worsen the results. To explain these results we should also take into account the information provided by Figure 2. As there are many more training images of flowers, it makes sense that the net classifies flowers better at validation time. However this effect might be small compared to the first one (i.e. flowers being more recognizable than habits) because, as we can observe, although there are 3x more leaf images than fruit images, the accuracies of these two categories are very close. To quantify precisely the importance of the number of training images, we could compose a training dataset with the same number of images per structure and look at the results (this won't be done for the moment as it is not urgent).
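A breakdown like the one in Figure 4 can be computed with a few lines of Python. This is a sketch with illustrative names; it assumes that for every validation image we know its structure, its true label and the label predicted by the net.

```python
from collections import defaultdict

def accuracy_by_structure(records):
    """records: iterable of (structure, true_label, predicted_label) tuples."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for structure, true_label, predicted in records:
        totals[structure] += 1
        hits[structure] += int(true_label == predicted)
    # Top-1 accuracy computed separately for each structure
    return {s: hits[s] / float(totals[s]) for s in totals}
```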

Useful links

Computer Vision with Deep Learning

- Stanford Course - Convolutional Neural Networks for Visual Recognition: This is by far the most useful link for an introduction to image recognition with neural networks. It progressively goes from the simplest concepts (SVM, Softmax, 2-layer network, backpropagation) to the key concepts of image recognition with deep learning (convolutional neural networks, ...). It has course notes and recorded lectures on YouTube.

Deep Learning

- Michael Nielsen's webpage: Introductory notes on deep learning.

- Deep Learning Book: Still in preprint but with open access course notes. It is written by one of the leading figures in the field.

Machine Learning

- Pattern Recognition and Machine Learning - C. M. Bishop: Classic reference in machine learning, useful to have the broad view.