Long-tail of science

Coordinators: Peter Solagna, Gergely Sipos/EGI.eu

Meetings page: http://indico.egi.eu/indico/categoryDisplay.py?categId=36

Mailing list: long-tail-pilot@mailman.egi.eu

Motivation

EGI has had well-established processes for allocating resources to user communities for several years. However, individual researchers and small research teams sometimes struggle to access grid and cloud compute and storage resources from the network of NGIs to implement ‘big data applications’. Recognising the need for simpler and more harmonised access for individual researchers and small research groups, also known as members of the ‘long-tail of science’, the EGI community started to design and prototype a new platform in October 2014.

This project designs and prototypes a new e-infrastructure platform in EGI to simplify access to grid and cloud computing services for the long-tail of science, i.e. those researchers and small research teams who work with large data but have limited or no expertise in distributed systems. The project will establish a set of integrated services suited to the most frequent grid and cloud computing use cases of individual researchers and small research teams.

Members of the project evaluate technologies that can lower the barrier of access to grid and cloud resources, select the best candidates, and assemble these into a coherent platform. The platform will serve users via a centrally operated 'user registration portal' and a set of science gateways that will be connected to resources in a new Virtual Organisation. Through the registration portal users will be able to request resources from the platform, while through the science gateways they consume the resources by implementing big data and compute-intensive use cases. The platform will be relevant for any scientist who works with large data. It will also include communication channels and mechanisms to connect these people to their respective National Grid Initiatives, where they can receive more customised services. The platform will be open to grid and cloud resource providers, science gateway providers, and user support teams. The project welcomes such contributors.

The expected benefits of the project:

- A single grid and cloud computing resource allocation at the European scale for individual researchers and small research teams.

- Harmonised and simplified access to grid and cloud resources of EGI.

- Custom Virtual Research Environments available for individual researchers and small research teams at the European scale.

- New and improved connection within the NGIs to individual researchers and small research teams.

- An innovative new security subsystem (per-user sub-proxies) that may, in the future, be used in other EGI community platforms.

Mandate

The project started in the 5th year of the EGI-InSPIRE FP7 project. The aim of this activity is to design and prototype the services of the EGI long-tail platform. The services will be deployed in a new Virtual Organisation of EGI. Based on the experience gained with this pilot deployment, a production version will be prepared by the EGI-Engage project.

Objectives

The capabilities that the long tail of science platform has to support are:

- Zero-barrier access: any user who carries out relevant research can get a start-up resource allocation

- 100% coverage: anyone with internet access can become a user

- User-centric: User support for platform users is available through the NGIs

- Realistic: Reuse existing technology building blocks as much as possible, require minimal new development

- Secure: Provide an acceptable level of tracking of users and user activities (not necessarily face-to-face vetting)

- Scalable: Can scale up to support a large number of resource providers, technology providers, use cases and users

- Valuable: Deliver tangible outcomes

Overview

Slideset about the concept of the EGI long-tail of science platform: [1]

Slideset about the authentication & authorization model adopted: [2]

Members

How to Join

If interested, please contact Peter Solagna (peter.solagna@egi.eu).

Technical Information

The following table lists the services and technologies currently available in the NGIs participating in the working group that can be used in the long tail of science platform. The possible types of contribution are:

- User management

- Credential management

- Resources provisioning for the pilot

- ... (please, feel free to add)

| Institution | Type of contribution | Description |
|---|---|---|
| INFN | eTokenServer | The eTokenServer is a standards-based solution developed by INFN for central management of robot certificates and provisioning of proxies to get seamless and secure access to computing e-Infrastructures, based on local, Grid and Cloud middleware supporting the X.509 standard for authorization. The business logic of the servlet has been conceived to provide "resources" (e.g., full-legacy and RFC-3820 proxies) in a "web-manner" which can be consumed by simple users, client applications and by portals and Science Gateways. Starting from release v2.0.1, the eTokenServer can account for users of robot certificates (RFC proxies only). This is key for accounting and auditing usage of e-Infrastructures. |
| INFN | The Catania Grid & Cloud Engine | The Catania Grid & Cloud Engine offers seamless access to local HPC, Grid and Cloud infrastructures, exploiting a common set of APIs based on JSAGA. JSAGA implements the OGF SAGA standard for the Java language and addresses many different distributed infrastructures through a set of adaptors. Currently, adaptors are available for several middleware stacks (gLite/EMI (Grid), rOCCI (Cloud), ssh (HPC), etc.), while new adaptors can easily be written to target new infrastructures. The Catania Grid & Cloud Engine also provides a set of RESTful APIs allowing software developers to access distributed infrastructures from many different web portal engines or even mobile devices, such as smartphones and tablets. It is worth noting that the Catania Grid & Cloud Engine interacts with another module of the CSGF called the User Tracking Database, to ensure compliance with both the EGI VO Portal Policy (https://documents.egi.eu/public/ShowDocument?docid=80) and the EGI Grid Security Traceability and Logging Policy (https://documents.egi.eu/public/ShowDocument?docid=81). |
| INFN | Support to both eduGAIN-compliant and STORK Identity Federations | Liferay plugins have been developed to allow SAML 2.0 based federated authentication. Science Gateways developed with the CSGF can be configured as Service Providers of both eduGAIN-compliant Identity Federations and, recently, of the STORK Federation (https://www.eid-stork.eu/) promoted by the European Commission as a platform for e-ID cross-border trust. The integration of Science Gateways in the STORK federation is the result of joint work carried out by INFN and the Politecnico di Torino and is demonstrated in a short video that can be watched at http://youtu.be/GmYOMn8Lsw4. As of today, no other Science Gateway framework in the world is known to be compatible with STORK-based authentication. Concerning eduGAIN-compliant Identity Federations, INFN operates the GridP “catch-all” federation (http://gridp.garr.it) that includes both “catch-all” Identity Providers (e.g., http://idpopen.garr.it and http://idpsocial.garr.it) for “homeless” users and Identity Providers that do not belong to any official federation (e.g., the EGI SSO). |
| INFN | General purpose Web Portal | INFN has developed a general purpose Grid Portal (IGP), based on Liferay, which provides a web graphical user interface for Grid job submission, workflow definition and data management. It is also interfaced with external Infrastructure as a Service (IaaS) frameworks for the dynamic provisioning of computing resources. |
| INFN | Authentication service | The CASShib service provides a SAML-based authentication mechanism for the Liferay portal based on the eduGAIN Federation. This service is deployed in a Tomcat container and can optionally run on a separate server machine. It translates SAML Assertions (retrieved from a Shibboleth service provider that is part of the eduGAIN federation) into CAS Assertions. The CAS Assertions can then be used in the Liferay portal for user authentication and session handling. |
| INFN | IGP Registration portlet | Based on the Liferay framework, this portlet provides a mechanism to register an authenticated user by retrieving the required information from an IdP (eduGAIN Federation), an X.509 certificate and VOMS. If a valid certificate is not available, the portlet requests a new certificate from an on-line CA (see "On-line CA related services" below) on behalf of the user. To improve security and preserve the necessary information, the X.509 certificate is only used to create a proxy and is then destroyed; the proxy certificate is stored in a dedicated MyProxy server. |
| INFN | On-line CA related services | The on-line CA is a service based on EJBCA (http://www.ejbca.org). This service, for security reasons, runs on a dedicated server in a JBoss container. To access the on-line CA, the registration portlet uses the on-line CA Bridge service deployed in a Tomcat container running on a separate machine. The on-line CA Bridge communicates with the on-line CA in a secure way through the EJBCA APIs. When the portal requests a certificate on behalf of the user, the on-line CA Bridge retrieves the user information from the eduGAIN federation using the SSO mechanism through the Shibboleth service running on the server. The on-line CA Bridge also provides a user interface that can be accessed directly by the users via a web browser; in this case the certificate will be installed directly in the browser. |
| INFN | IGP Authorization portlet | Based on the Liferay framework, this portlet allows managing proxy and VOMS extensions for each user. These credentials are then used by the portal for job submission and data management. |
| INFN | IGP Job submission portlets | 1. Workflow submission performed by a subset of WS-PGRADE portlets (workflow creation, submission and management), wrapping them through the gUSE component that can execute acyclic workflows. 2. Simple submission through a portlet that allows users to: build their own JDL; save JDLs as templates for sharing and reuse; show the list of submitted jobs; monitor the job state during its execution; retrieve the output; resubmit an ended job; view log files. 3. The Cloud portlet, allowing access to different Cloud resources (WNoDeS, OpenStack and OpenNebula) by instantiating new virtual machines on the available resources. The portlet can manage the users’ existing SSH keys, or can create a new pair if needed. The portlet interacts with the federated resources through a Python command-line interface that simplifies the communication by wrapping the OCCI protocol. |
| INFN | IGP Data Management service | A service to transfer files between Grid and local resources has been designed and integrated in the IGP. This service allows users to easily upload files to the Grid in two ways: via a web browser for local files, or via an external server using different protocols (http, webdav, ftp, sftp). The data management interface is based on a specific plugin for the Pydio tool (http://pyd.io) that interacts with the user, the local storage element and the Grid storage element, and moves files between them. |
| CYFRONET | User Portal: Registration | User registration is initiated by the user, who provides his/her data through an on-line form. The e-mail address is confirmed by a validation link. The account application is manually approved by a Vetting Person. Vetting in PL-Grid is done by confirming the person's affiliation with a scientific institute. After this step the user can apply for access to the services offered by the infrastructure. |
| CYFRONET | User Portal: Getting certificates (integration with on-line CA) | Once registered, the user can apply for an X.509 certificate from an on-line CA. There is an on-line CA server based on EJBCA which issues personal certificates. The user can obtain and revoke a certificate. |
| CYFRONET | User Portal: Application for access to a service | In order to get access to a service the user must apply for it. A Service Administrator role approves the requests. Once a request is approved, some backend actions are executed. The portal is able to communicate with VOMS, UVOS (UNICORE) and LDAP services. |
| CYFRONET | User Portal: Initial allocation | Once registered, the user gets an initial allocation. The initial allocation allows users to utilize the service, but only in a limited scope: 1000 compute hours and 40 GB of storage for 6 months. The initial allocation is automatically renewed every six months. |
| CYFRONET | OpenID service provider | The user database is interfaced with an OpenID provider. Other services which need to authenticate/authorize a user can do this through OpenID. A user can be authenticated by OpenID by providing an X.509 certificate. Optionally, the OpenID service can pass an X.509 proxy to the requesting service if the user provides the password for the X.509 key. |
| GRNET | | |
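The "Initial allocation" entry in the table above lends itself to a small illustration. The following is a minimal sketch, not the PL-Grid implementation: the class name, method names and the 182-day approximation of "6 months" are assumptions made for the example, and only the figures (1000 compute hours, 40 GB) come from the table.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Figures taken from the "Initial allocation" table entry.
INITIAL_CPU_HOURS = 1000
INITIAL_STORAGE_GB = 40
# Assumption: "6 months" is approximated as 182 days for this sketch.
ALLOCATION_DAYS = 182


@dataclass
class Allocation:
    """Hypothetical record of the start-up allocation of one user."""
    start: date
    cpu_hours_used: float = 0.0

    @property
    def end(self) -> date:
        return self.start + timedelta(days=ALLOCATION_DAYS)

    def renew_if_expired(self, today: date) -> None:
        """Open a fresh period once the current one is over (automatic renewal)."""
        while today >= self.end:
            self.start = self.end
            self.cpu_hours_used = 0.0

    def can_run(self, cpu_hours: float, today: date) -> bool:
        """True if the request fits in what is left of the current period."""
        self.renew_if_expired(today)
        return self.cpu_hours_used + cpu_hours <= INITIAL_CPU_HOURS

    def charge(self, cpu_hours: float, today: date) -> None:
        """Record consumption, refusing requests that exceed the allocation."""
        if not self.can_run(cpu_hours, today):
            raise ValueError("initial allocation exhausted")
        self.cpu_hours_used += cpu_hours
```

A user who has consumed the full 1000 hours is refused further work until the next period starts, at which point the counter is reset. Storage is treated here as a fixed capacity and is not modelled further.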

Technical discussion and architecture details

User Management Portal (UMP)

Analysis of the functionalities and architecture

- Registration of the user, including a form for providing information about the user's institution, field of research and the purpose of his/her activity on EGI resources.

- The request must be approved by authorized users.

- User registry. The UMP will be a registry of the users who are accessing, or have accessed, EGI through the long tail of science platform.

- User authentication

- The UMP must support a catch-all IdP for homeless users (use of EGI SSO?)

- The UMP should allow for the integration of external IdPs.

- The other services of the long tail of science platform should get the user information from the UMP. This ensures that users are associated with uniform identifiers assigned only by the UMP, which facilitates accounting and authorization.

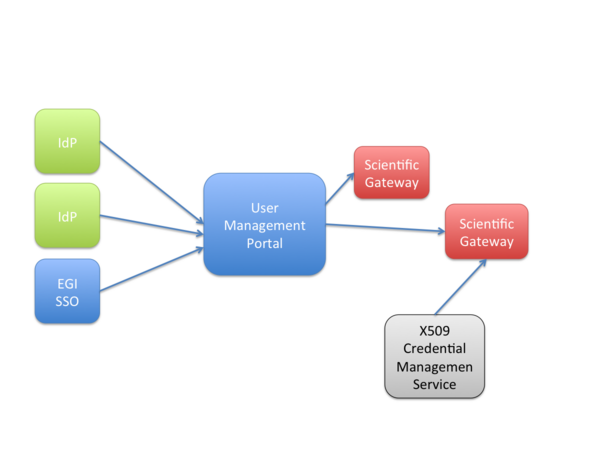

As shown in the following figure, the UMP must act as a service proxy between the science gateways and the identity providers, whether EGI SSO or another IdP (e.g. eduGAIN federations). In this way the UMP can control access to the infrastructure for the long tail of science users. The UMP acts as the sole IdP for the science gateways.

This architecture also allows the UMP to be the service provider that needs to be authorized by the IdPs.

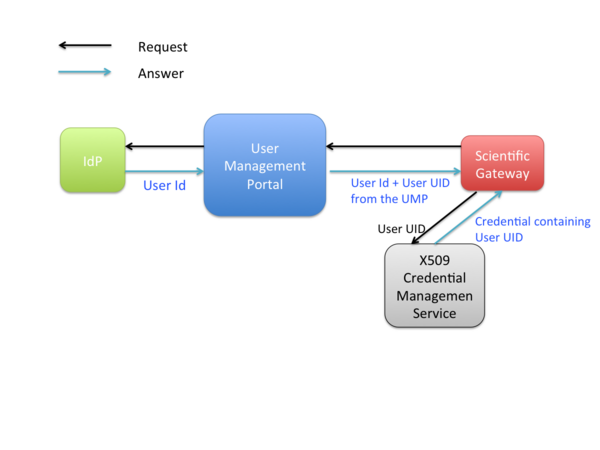

Once the users' request is authorized on the User Management Portal, they are redirected to one or several science gateways where they can run their computational tasks or manage their data on the grid. A possible workflow to access resources could be the following:

- The user accesses the Science Gateway (SG).

- The SG redirects the request to the UMP.

- The UMP redirects the request to the IdP that holds the credentials of the user (e.g. EGI SSO).

- The user authenticates with his/her IdP.

- The IdP provides the UMP with an assertion containing some attributes about the user (e.g. the user's email address).

- The UMP responds to the SG, adding more attributes, including the Unique Identifier (UID) that identifies the user in the UMP registry and that is unique for every user of the LToS platform.

- The SG uses the UID to request a credential that can be uniquely associated with the individual user.

- The credential is used to access EGI resources.
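The workflow above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the real exchange happens through browser redirects and signed assertions, which are collapsed here into direct method calls, and all class names, method names and the credential string format are hypothetical.

```python
import uuid


class IdentityProvider:
    """Stand-in for an IdP such as EGI SSO (hypothetical in-memory user store)."""

    def __init__(self, users):
        self._users = users  # username -> (password, email)

    def authenticate(self, username, password):
        stored = self._users.get(username)
        if stored is None or stored[0] != password:
            raise PermissionError("authentication failed")
        # The assertion carries a few attributes about the user.
        return {"email": stored[1]}


class UserManagementPortal:
    """Proxy between science gateways and IdPs; the only place UIDs are assigned."""

    def __init__(self, idp):
        self._idp = idp
        self._approved = set()
        self._registry = {}  # email -> UID

    def approve(self, email):
        self._approved.add(email)

    def login(self, username, password):
        assertion = self._idp.authenticate(username, password)
        email = assertion["email"]
        if email not in self._approved:
            raise PermissionError("user request not approved in the UMP")
        # Assign the UID on first login and reuse it afterwards.
        uid = self._registry.setdefault(email, uuid.uuid4().hex)
        return {**assertion, "uid": uid}


class ScienceGateway:
    """Delegates authentication to the UMP and asks for a per-user credential."""

    def __init__(self, ump):
        self._ump = ump

    def access(self, username, password):
        attributes = self._ump.login(username, password)
        # Placeholder for the call to the credential service.
        return f"credential-for-{attributes['uid']}"
```

Because the UID is created and stored only in the UMP registry, the same user receives the same identifier regardless of which science gateway is used, which is what makes uniform accounting and authorization possible.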

Procedure to register in UNITY as an SG

Client service Registration

1. Please send us the return URIs of your service.

2. The UNITY team sends the client a clientID and secretKey.

unity.server.clientSecret= [YOUR SECRET KEY]
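The registration data (return URI, clientID, secretKey) resembles what an OAuth2-style client needs. Assuming the UNITY instance exposes an OAuth2 authorization endpoint, which this page does not state, a science gateway might build its login redirect roughly as follows. The function name, endpoint URL and all values are placeholders.

```python
from urllib.parse import urlencode


def build_authorization_url(authorize_endpoint, client_id, return_uri, state):
    """Build the URL the SG redirects the user's browser to (authorization code flow).

    The client secret is deliberately absent: it is only used later, in the
    server-to-server request that exchanges the code for a token.
    """
    query = urlencode({
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": return_uri,  # must match a return URI sent at registration
        "state": state,              # opaque value checked when the user returns
    })
    return f"{authorize_endpoint}?{query}"


# Hypothetical values for illustration only.
url = build_authorization_url(
    "https://unity.example.org/oauth2-as/oauth2-authz",
    client_id="my-science-gateway",
    return_uri="https://sg.example.org/callback",
    state="abc123",
)
```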

Credential services

The user credential service will be based on the per-user sub-proxies (PUSP).

The purpose of a per-user sub-proxy (PUSP) is to allow identification of the individual users that operate using a common robot certificate. A common example is where a web portal (e.g., a scientific gateway) somehow identifies its user and wishes to authenticate as that user when interacting with EGI resources. This is achieved by creating a proxy credential from the robot credential with the proxy certificate containing user-identifying information in its additional proxy CN field. The user-identifying information may be pseudo-anonymised where only the portal knows the actual mapping.
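As an illustration of the naming idea only, the sketch below derives the subject of a per-user sub-proxy by appending one extra CN to the robot subject. The `user:` prefix, the keyed-hash pseudonymisation and the example DN are assumptions made for this sketch, and actually issuing the proxy (signing it with the robot credential) is not shown.

```python
import hashlib
import hmac


def pseudonymise(user_id: str, portal_secret: bytes) -> str:
    """Map a portal user ID to a stable pseudonym that only the portal can link back.

    A keyed hash is used so that the pseudonym cannot be recomputed without
    the portal's secret. Truncation to 16 hex characters is an arbitrary choice.
    """
    digest = hmac.new(portal_secret, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def pusp_subject(robot_dn: str, user_id: str, portal_secret: bytes) -> str:
    """Return the robot subject DN extended with a user-identifying CN."""
    return f"{robot_dn}/CN=user:{pseudonymise(user_id, portal_secret)}"


# Hypothetical robot certificate subject and secret.
ROBOT_DN = "/DC=org/DC=example/O=Robots/CN=Robot: Example Science Gateway"
subject = pusp_subject(ROBOT_DN, "alice@example.org", b"portal-secret")
```

The same user always maps to the same subject, so resources see a stable per-user identity while the real identity stays in the portal.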

This solution will allow LToS users to access EGI resources through their LToS portal credentials (e.g. EGI SSO, Identity Federations, etc.) without owning a personal grid certificate. This will simplify the access to the infrastructure for the final users.

Policy changes

The long-tail platform requires two new policies:

- An 'Acceptable Use Policy' (AUP) for the Virtual Organisation that will include the compute and storage resources.

- A new security policy that describes the conditions of generating and using user-specific proxies from robot certificates on the long-tail VO.

Proposed AUP for the Long-tail VO

This Acceptable Use Policy applies to all users accessing EGI infrastructure services through the Long-tail platform and/or the Long-tail Virtual Organization, hereafter referred to as the VO. The Operations Management Board (OMB) is the body that owns and gives authority to this policy.

The goal of the VO is to allow users from the long-tail of science to use EGI services through the Long-tail of science platform.

VO membership is restricted to users who are registered in the User Management Portal for the long-tail. Every user can use the EGI resources only for activities related to the work and research described in the application submitted to the User Management Portal, and only for the amount of resources/time that has been granted to the user through the User Management Portal. User membership can be temporarily suspended if the user has consumed all the resources granted in the application.

During all times at which EGI resources are being utilised the users of the VO agree to be bound by the Grid Acceptable Usage Rules, VO Security Policy and other relevant Grid Policies, and to use EGI only in the furtherance of the stated goals described in the application.

All users *must* acknowledge the contribution of EGI to research that used the Infrastructure resources by including the following text in their publications: "This work used the European Grid Infrastructure (EGI)"

Proposed Security Policy for the Long-tail platform

SPG:Drafts:LToS_Service_Scoped_Security_Policy

Accounting of usage

The adoption of PUSPs allows us to rely on the central EGI accounting system. Extra accounting information could be collected directly from the Science Gateways.
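To illustrate why per-user proxies make per-user accounting possible, the sketch below groups usage by the last CN of the proxy subject. The record format (subject DN plus CPU hours) and the example DNs are assumptions made for this example, not the format used by the EGI accounting system.

```python
from collections import defaultdict


def usage_per_user(records):
    """Sum CPU hours per user, where the user is the final CN of the subject DN."""
    totals = defaultdict(float)
    for subject_dn, cpu_hours in records:
        # Everything before the last "/CN=" is the shared robot subject.
        _robot_dn, _sep, user_cn = subject_dn.rpartition("/CN=")
        totals[user_cn] += cpu_hours
    return dict(totals)


# Hypothetical records: one robot certificate, two users.
ROBOT = "/DC=org/DC=example/CN=Robot: Example Science Gateway"
records = [
    (ROBOT + "/CN=user:a1", 2.5),
    (ROBOT + "/CN=user:b2", 1.0),
    (ROBOT + "/CN=user:a1", 4.0),
]
totals = usage_per_user(records)
```

Without the extra CN, all three records would be attributed to the robot certificate alone.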

Timeline for deployment

User Management Portal

- Week 24-28.11:

- clarify user-story (input for CYFRONET) [?]

- Week 1-5.12:

- wireframe draft (?) [CYFRONET]

- (implementation internal [CYFRONET])

- Week 8-12.12:

- (implementation internal [CYFRONET])

- Week 15-19.12: very early portal prototype [CYFRONET]

- technology framework UNITY (http://www.unity-idm.eu/)

Scientific gateway

The following providers expressed interest in providing science gateways in the long-tail platform: WS-PGRADE, DIRAC, Catania Science Gateway Framework. These providers will be involved in the project as soon as the platform interfaces towards gateways are settled.

User credential service

See the per-user sub-proxies (PUSP) wiki page for more information.

Resources for the long-tail VO

The following providers expressed interest in contributing resources to the long-tail platform:

- xxx, type of resource [grid/cloud], capacity: xxx

- xxx, type of resource [grid/cloud], capacity: xxx

- xxx, type of resource [grid/cloud], capacity: xxx