Fedcloud-tf:Technology


Introduction

The current high-throughput model of grid computing has proven extremely powerful for a small number of communities. These communities have thrived in the grid environment, but uptake of the e-infrastructure by other communities has been limited. EGI has therefore made a strategic decision to investigate how it could broaden the uptake of its infrastructure to support other research communities and application design models. The aim is to support, on the current production infrastructure, research communities and applications that could not previously be served, while still taking advantage of the existing functionality of, and the investment already made in, EGI’s Core Infrastructure.

The use of virtualization and Infrastructure as a Service (IaaS) cloud computing was a clear candidate to enable this transformation. It was also clear that, with several open source technologies already in use across different resource providers, it would not be possible to mandate a single software stack. Instead, following on from activities already under way in Europe, including SIENA, the approach taken requires the use of open standards where available and, where not, methods that have broad acceptance in the e-infrastructure community.

This led to the current EGI Cloud Federation model, which is based on a set of agreed open standards. More details on the EGI Cloud Federation model are given below.

Federation Model

The federation of IaaS Cloud resources in EGI is built upon the extensive autonomy of Resource Providers in terms of ownership of exposed resources. The current federation model in EGI for exposing Grid resources requires Resource Providers to deploy and operate a specific set of Grid Middleware components before they can be integrated into EGI’s production infrastructure. In contrast, the federation model for distributed IaaS Cloud resources allows a lightweight aggregation of local Cloud resources into the EGI Cloud Infrastructure Platform (CLIP). At the heart of the federation are the locally deployed Cloud Management stacks. In keeping with the Cloud computing model, the EGI CLIP does not mandate any particular Cloud Management stack; it is the responsibility of the Resource Providers to investigate, identify and deploy the solution that best fits their individual needs, as long as the offered services implement the required interfaces and domain languages. These interfaces and domain languages, and the interoperability of their implementations with other solutions, are the focus of the federation.

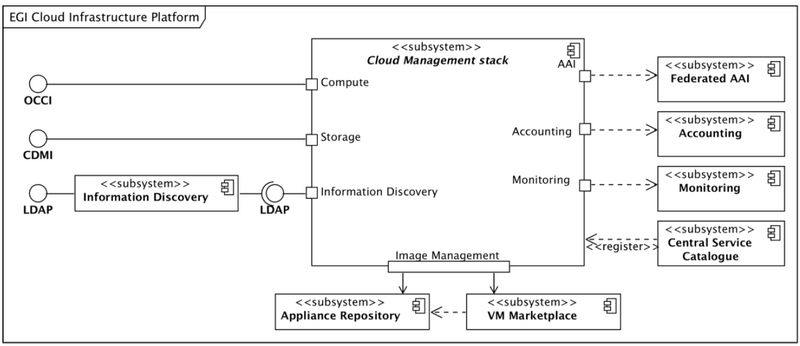

Consequently, the EGI CLIP is modelled around the concept of an abstract Cloud Management stack subsystem that is integrated with components of the EGI Core Infrastructure Platform (see Figure 1).

This architecture allows EGI to define the CLIP as a relatively thin layer of federation and interoperability around local deployments and integrations of Cloud Management stacks. It defines interaction ports with a number of services from the EGI Core Infrastructure Platform and the EGI Collaboration Platform, as well as the required external interfaces and their corresponding interaction ports. All of these ports have to be realized by the local Cloud Management stack deployments. In particular, Resource Providers must:

- Integrate with the EGI Core Authentication & Authorisation Infrastructure

- Integrate with the EGI Core Accounting system

- Integrate with the EGI Core Monitoring system

- Provide a standardised Cloud Computing management interface (OCCI)

- Provide a standardised Cloud Storage interface (CDMI)

- Provide a standardised interface to an Information Service

EGI-InSPIRE INFSO-RI-261323 © Members of EGI-InSPIRE collaboration

Additionally, by using the Appliance Repository and the VM Marketplace from the EGI Collaboration Platform, the EGI Cloud Infrastructure Platform provides VM image sharing and re-use across EGI Research Communities.
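The interaction points listed above can be pictured as an abstract interface that every local Cloud Management stack deployment has to realize. The following is only a minimal sketch of that idea, not part of any EGI software: the class names, method names, return values and `example.org` URLs are all hypothetical and chosen purely for illustration.

```python
import inspect
from abc import ABC, abstractmethod


class CloudManagementStack(ABC):
    """Hypothetical abstract parent: one method per interaction point."""

    @abstractmethod
    def check_credentials(self, token: str) -> bool:
        """EGI Core Authentication & Authorisation Infrastructure."""

    @abstractmethod
    def usage_records(self) -> list:
        """EGI Core Accounting system."""

    @abstractmethod
    def is_healthy(self) -> bool:
        """EGI Core Monitoring system."""

    @abstractmethod
    def occi_endpoint(self) -> str:
        """Standardised Cloud Computing management interface (OCCI)."""

    @abstractmethod
    def cdmi_endpoint(self) -> str:
        """Standardised Cloud Storage interface (CDMI)."""

    @abstractmethod
    def describe(self) -> dict:
        """Standardised interface to an Information Service."""


class ExampleStack(CloudManagementStack):
    """Placeholder realisation; every return value is made up."""

    def check_credentials(self, token: str) -> bool:
        return token == "demo-token"

    def usage_records(self) -> list:
        return [{"vm": "vm-1", "cpu_hours": 2.5}]

    def is_healthy(self) -> bool:
        return True

    def occi_endpoint(self) -> str:
        return "https://cloud.example.org/occi"

    def cdmi_endpoint(self) -> str:
        return "https://cloud.example.org/cdmi"

    def describe(self) -> dict:
        return {"occi": self.occi_endpoint(), "cdmi": self.cdmi_endpoint()}


class IncompleteStack(CloudManagementStack):
    """Implements only the monitoring point, so it stays abstract."""

    def is_healthy(self) -> bool:
        return True


def can_join(stack_class: type) -> bool:
    """True if the class leaves no interaction point unimplemented."""
    return not inspect.isabstract(stack_class)
```

In this sketch `can_join(ExampleStack)` is true while `can_join(IncompleteStack)` is false, which mirrors the idea that a realisation inherits the obligation to implement every interaction point of the abstract parent.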

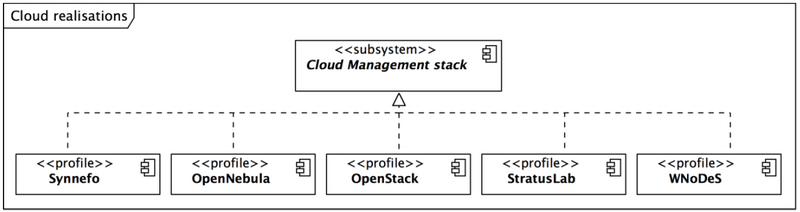

Figure 2 provides an overview of the current realizations of the abstract Cloud Management stack subsystem in the EGI Cloud federation. It illustrates that each existing realization inherits the obligation to implement the interaction points from the generalized parent Cloud Management stack. At the same time, the EGI Federated Clouds Task (funded through the EGI-InSPIRE project) gives Resource Providers a platform to share their implementation solutions for commonly deployed Cloud Management stacks (e.g. OpenNebula and OpenStack). Section 5 is dedicated to the documentation of the steps necessary to integrate a local deployment of a given Cloud Management stack into the EGI Cloud federation.

Through this collaboration, Resource Providers gradually develop and mature deployment and configuration profiles around common Cloud Management stacks, as illustrated in Figure 2. Through mutual support, Resource Providers begin to build communities around the deployed Cloud Management Frameworks. The result is better integration of the most popular Cloud Management Frameworks in the Federated Clouds Task, as shown in the table below.

| Cloud Mgmt. Stack | Fed. AAI | Monitoring | Accounting | Img. Mgmt. | OCCI | CDMI |
|---|---|---|---|---|---|---|
| OpenStack | Yes | Yes | Yes | Yes | Yes | Yes |
| OpenNebula | Yes | Yes | Yes | Yes | Yes | Yes |
| StratusLab | Yes | Yes | Yes | - | Yes | - |
| WNoDeS | Yes | Yes | Yes | - | - | - |
| Synnefo | Yes | Yes | - | - | Yes | - |
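For readers who want to query the integration table programmatically, the sketch below encodes it as a plain dictionary. The data is copied from the table; the helper names `stacks_supporting` and `missing` are hypothetical and exist only for this example.

```python
# Column names as they appear in the table above.
CAPABILITIES = ("Fed. AAI", "Monitoring", "Accounting", "Img. Mgmt.", "OCCI", "CDMI")

# One entry per table row: the set of capabilities marked "Yes".
SUPPORT = {
    "OpenStack": set(CAPABILITIES),
    "OpenNebula": set(CAPABILITIES),
    "StratusLab": {"Fed. AAI", "Monitoring", "Accounting", "OCCI"},
    "WNoDeS": {"Fed. AAI", "Monitoring", "Accounting"},
    "Synnefo": {"Fed. AAI", "Monitoring", "OCCI"},
}


def stacks_supporting(*required: str) -> list:
    """Return the stacks (in table order) that offer every required capability."""
    needed = set(required)
    unknown = needed - set(CAPABILITIES)
    if unknown:
        raise ValueError(f"unknown capability: {sorted(unknown)}")
    return [name for name, caps in SUPPORT.items() if needed <= caps]


def missing(stack: str) -> list:
    """Return the capabilities (in column order) a stack does not yet offer."""
    return [cap for cap in CAPABILITIES if cap not in SUPPORT[stack]]


if __name__ == "__main__":
    print(stacks_supporting("OCCI", "CDMI"))
    print(missing("Synnefo"))
```

Keeping the rows as sets makes the "supports all of these" question a simple subset test, which is why sets were used here rather than a list of booleans.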

Interfaces and standards

To federate a cloud system, a common interface must be defined for several functions. Each is described below; together they define how a ‘user’ of the service would be able to interact with it.

- Management interfaces

- Core services interfaces
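As an illustration of what a management interface looks like on the wire, the sketch below assembles (but does not send) a request to create a compute resource using the OCCI `text/occi` HTTP rendering, in which the resource kind and its attributes travel in HTTP headers. The endpoint URL, the function name and the chosen attribute values are assumptions for this example. Authentication, which a federated site would require, is deliberately left out.

```python
# Scheme of the OCCI infrastructure extension, which defines the "compute" kind.
OCCI_INFRA_SCHEME = "http://schemas.ogf.org/occi/infrastructure#"


def occi_create_compute(endpoint: str, cores: int, memory_gib: float) -> tuple:
    """Build (method, url, headers) for an OCCI 'create compute' request.

    Nothing is sent over the network; the caller decides which HTTP
    client to use and how to authenticate.
    """
    url = endpoint.rstrip("/") + "/compute/"
    headers = {
        "Content-Type": "text/occi",
        "Accept": "text/occi",
        # Identifies the kind of resource being created.
        "Category": f'compute; scheme="{OCCI_INFRA_SCHEME}"; class="kind"',
        # Several attributes may share one header, separated by commas.
        "X-OCCI-Attribute": (
            f"occi.compute.cores={cores}, occi.compute.memory={memory_gib}"
        ),
    }
    return "POST", url, headers


if __name__ == "__main__":
    # Hypothetical endpoint; replace with a real site's OCCI URL.
    method, url, headers = occi_create_compute("https://cloud.example.org/", 2, 4.0)
    print(method, url)
    for name, value in headers.items():
        print(f"{name}: {value}")
```

Because the request is described only by standard headers, the same call can in principle be issued against any Cloud Management stack that exposes an OCCI endpoint, which is the point of agreeing on a common interface.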

FedCloud work groups

The FedCloud Task Force activities are split across work groups. A leader is elected for each work group and members of the Task Force are free to spend their effort in one or more groups. Each work group investigates one or more capabilities that are required by a federation of clouds. The work done is recorded in the group workbench and, eventually, translated into the Task Force blueprint.

With the development of the testbed and of the blueprint, new capabilities will be investigated and addressed. As a consequence, new work groups are added to the Task Force when required.