Applications on Demand Service - architecture

Applications on Demand (AoD) Service Information pages

The EGI Applications on Demand (AoD) service is EGI's response to the requirements of researchers who want to use applications in an on-demand fashion, together with the compute and storage environment needed to process and store their data.

The service can be accessed through the EGI Marketplace.

Service architecture

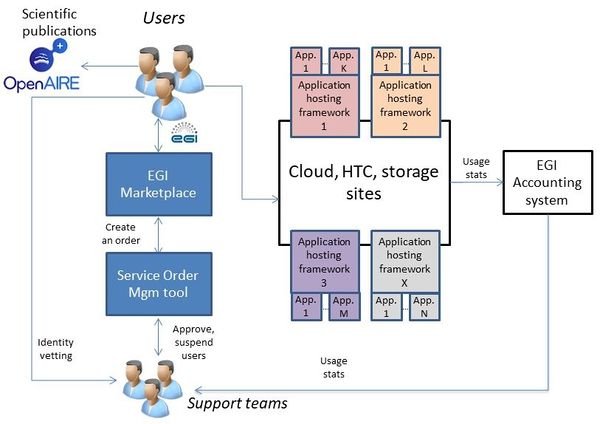

The EGI Applications on Demand service architecture is presented in the figure above.

This service architecture is composed of the following components:

- A catch-all VO called ‘vo.access.egi.eu’ and a pre-allocated pool of HTC and cloud resources configured for supporting the EGI Applications on Demand research activities. This resource pool currently includes cloud resources from Italy (INFN-Catania), Spain (CESGA) and France (IN2P3-IRES).

- The X.509 credentials factory service (also called eToken server) [1] is a standards-based solution developed for the central management of robot certificates and the provision of Per-User Sub-Proxy (PUSP) certificates. A PUSP makes it possible to identify the individual user operating under a common robot certificate. Permission to contact the server and request robot proxy certificates is granted only to the Science Gateways/portals integrated in the platform, and only when the user is authorized.

- A set of Science Gateways/portals that host the applications users can run through the EGI Applications on Demand service. Currently the following three frameworks are used: the FutureGateway Science Gateway (FGSG), the WS-PGRADE/gUSE portal and the Elastic Cloud Computing Cluster (EC3).

- The EGI VMOps dashboard provides a Graphical User Interface (GUI) to perform Virtual Machine (VM) management operations on the EGI Federated Cloud. The dashboard offers a user-friendly environment where users can create and manage VMs, along with their associated storage devices, on any of the EGI Federated Cloud providers. It provides a complete view of the deployed applications and a unified resource management experience, independently of the technology driving each of the resource centres of the federation. Users can create new infrastructure topologies, each comprising a set of VMs with their associated storage and contextualization, using a wizard-like builder that guides them through the selection of the virtual appliances, virtual organisation and resource provider, and the final customisation of the VMs that will be deployed. Its tight integration with the AppDB Cloud Marketplace allows automatic discovery of the appliances supported at each resource provider. Once a topology has been created, VMOps allows management actions to be applied both to the whole set of VMs comprising the topology and, at a finer grain, to each individual VM.

- The EGI Notebooks service, built on top of JupyterHub, offers an open-source web application where users can create and share documents that contain live code, equations, visualizations and explanatory text.

[1] Valeria Ardizzone, Roberto Barbera, Antonio Calanducci, Marco Fargetta, E. Ingrà, Ivan Porro, Giuseppe La Rocca, Salvatore Monforte, R. Ricceri, Riccardo Rotondo, Diego Scardaci, Andrea Schenone: The DECIDE Science Gateway. Journal of Grid Computing 10(4): 689-707 (2012)

Available resources

Currently available resources, grouped by category:

- Cloud Resources:

  - 246 vCPU cores

  - 781 GB of RAM

  - 2.1 TB of object storage

- High-Throughput Resources:

  - 9.5 million HEPSPEC

  - 1.4 TB of disk storage

For more details about the resources allocated for supporting this service, please click here.

Operational Level Agreements

- EC3: https://documents.egi.eu/document/3370

- FGSG: https://documents.egi.eu/document/2782

- WS-PGRADE/gUSE: https://documents.egi.eu/document/3368

- Cloud resource providers: https://documents.egi.eu/document/2773

GGUS Support Units (SUs)

- FutureGateway Science Gateway (FGSG):

  - The FGSG GGUS SU is available under the Core Services category

- Elastic Cloud Computing Cluster (EC3):

  - The EC3 GGUS SU is available under the Core Services category

- WS-PGRADE/gUSE portal:

  - The WS-PGRADE/gUSE GGUS SU is available under the Core Services category

- The EGI Notebooks:

  - The EGI Notebooks GGUS SU is available under the EGI Notebooks category

- The EGI VMOps dashboard:

  - The AppDB GGUS SU is available under the Core Services category

Links for administrators

User approval:

- VO membership management interface in PERUN: https://perun.metacentrum.cz/cert/gui/

- To register in the VO (relevant for Science Gateways robot certificates and for support staff): https://perun.metacentrum.cz/cert/registrar/?vo=vo.access.egi.eu

Monitoring

- The EGI Applications on Demand service components have been registered in the GOCDB and connected with the EGI monitoring system based on ARGO.

- By default, ARGO automatically gathers the service endpoints from the GOCDB and runs simple 'https' checks using standard NAGIOS probes. If necessary, new probes can easily be developed and added.

- The following service components are monitored by ARGO:

  - EGI-FGSG,

  - EGI-NOTEBOOKS,

  - GRIDOPS-WS-PGRADE,

  - GRIDOPS-APPDB, and

  - GRIDOPS-EC3

- To monitor the service components, check the following ARGO report page.
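As an illustration of the kind of simple 'https' check described above, here is a minimal Python sketch of a probe that follows the standard Nagios plugin exit-code convention (0 = OK, 1 = WARNING, 2 = CRITICAL, 3 = UNKNOWN). It is not the probe that ARGO actually runs. The function names, the status-to-severity mapping and the `fetch` hook are assumptions made for this example.

```python
import urllib.error
import urllib.request

# Standard Nagios plugin exit codes.
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3
LABELS = {OK: "OK", WARNING: "WARNING", CRITICAL: "CRITICAL", UNKNOWN: "UNKNOWN"}


def classify_status(http_status):
    """Map an HTTP status code to an exit code (illustrative policy, an assumption)."""
    if 200 <= http_status < 400:
        return OK
    if 400 <= http_status < 500:
        return WARNING
    return CRITICAL


def _fetch_status(url, timeout):
    """Perform a real HTTPS request and return the HTTP status code."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses are raised as exceptions; recover the status code.
        return err.code


def check_https(url, timeout=10.0, fetch=None):
    """Probe one endpoint and return (exit_code, message).

    `fetch` is a hypothetical hook taking (url, timeout) and returning an
    HTTP status code; it defaults to a real request and can be replaced
    when testing without network access.
    """
    if not url.startswith("https://"):
        return UNKNOWN, f"UNKNOWN - not an https URL: {url}"
    fetch = fetch or _fetch_status
    try:
        status = fetch(url, timeout)
    except Exception as exc:  # connection refused, TLS failure, timeout, ...
        return CRITICAL, f"CRITICAL - {url}: {exc}"
    code = classify_status(status)
    return code, f"{LABELS[code]} - {url} returned HTTP {status}"
```

A wrapper script would call `check_https` on an endpoint registered in the GOCDB, print the message, and exit with the returned code so that the monitoring framework can interpret the result.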

Accounting

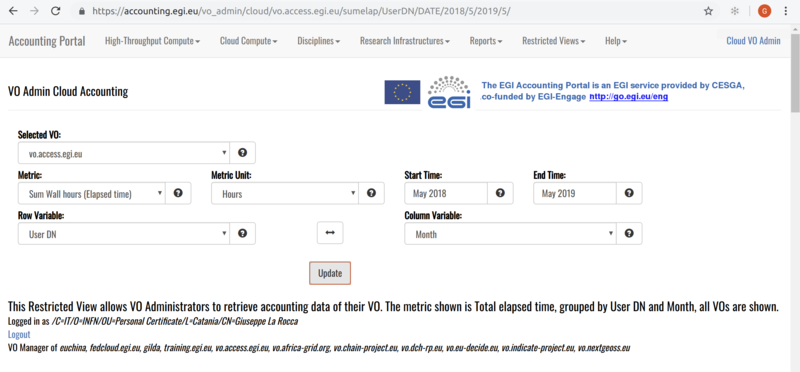

- Accounting data about the VO users can be checked here: https://accounting.egi.eu/

- From the EGI Accounting Portal it is possible to check the accounting metrics generated for both grid- and cloud-based resources supporting the vo.access.egi.eu VO.

- From the top menu, click on 'Restrict View' and then 'VO Admin' to check the accounting data of platform users.

Policies & documents

- Acceptable Use Policy (AUP) and Conditions of Use of the 'EGI Applications on Demand (AoD) service'

- EGI Applications on Demand (AoD) service Security Policy

- Doc vetting manual for the EGI Support team

Useful Links

- VO ID card: http://operations-portal.egi.eu/vo/view/voname/vo.access.egi.eu

- Name: vo.access.egi.eu

- Scope: Global

- Disciplines: Support Activities

- VOMS servers: voms1/voms2.grid.cesnet.cz

- VO Membership Management: https://perun.metacentrum.cz/perun-registrar-cert/?vo=vo.access.egi.eu

- Contacts:

- EGI Support Team: applications-platform-support@mailman.egi.eu

- Managers:

- Giuseppe La Rocca (<giuseppe.larocca@egi.eu>)

- Diego Scardaci (<diego.scardaci@egi.eu>)

- Gergely Sipos (<gergely.sipos@egi.eu>)

Roadmap

- Integration of the JupyterHub as a Service (mid 2018)

- Upgrade of the CSG to Liferay 7 to use the Future Gateway (FG) API server developed in the context of the INDIGO-DataCloud project (2019)

- Integration of the open-source Serverless Computing Platform for Data-Processing Applications

- Improve the user's experience in the EC3 portal (mid 2018)

- Integrate the HNSciCloud voucher schemes in the EC3 portal (2018)

- Addition of the IN2P3-IRES cloud provider to the vo.access.egi.eu VO (2019)

- Configure one instance of the PROMINENCE service for the vo.access.egi.eu VO (2019)

- Create an Ansible recipe to run big data workflows with the ECAS/Ophidia framework in EGI (2019)

- Agree OLAs with additional cloud providers of the EGI Federation (2019)